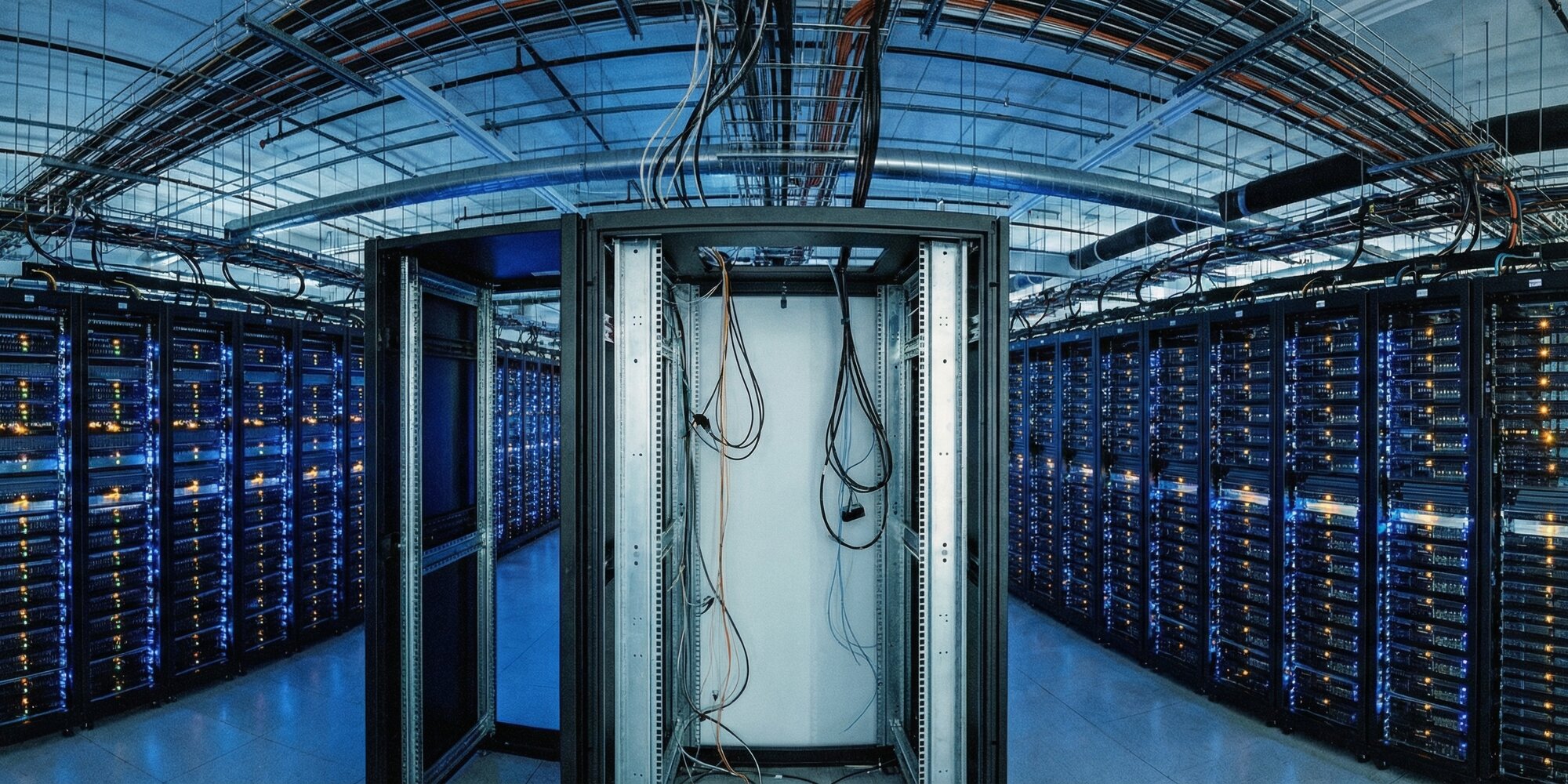

The most consequential data center announcement from NVIDIA's GTC 2026 conference in San Jose wasn't a chip — it was a building spec. The Vera Rubin POD, unveiled by CEO Jensen Huang this week, is a 40-rack, 60-exaflop AI supercomputer system that doesn't ask data center operators to upgrade their hardware. It asks them to redesign their buildings. With 4,000-pound racks, 45°C hot water cooling, cable-free architecture, and a peak throughput of 700 million tokens per second from a single rack — more than a prior-generation gigawatt data center could muster — NVIDIA is no longer just supplying the chips that power the AI industry. It is dictating what the facilities holding those chips must look like.

The Unit of Compute Is No Longer a Chip

For years, Jensen Huang's signature GTC move was the theatrical reveal: pull a GPU wafer from his leather jacket, hold it up, let the crowd respond. One chip. Hero object. Great theater. At GTC 2026, that era is over — not because the chips got worse, but because a chip is no longer the unit of compute.

"The unit of compute today is the rack," Huang told a capacity crowd at the SAP Center. The statement was as much an architectural declaration as a product announcement. The Vera Rubin NVL72 — the core compute engine of the POD — integrates 72 Rubin GPUs, 36 custom Vera CPUs, ConnectX-9 SuperNICs, and BlueField-4 DPUs across 18 compute trays and 9 NVLink switch trays. The rack packs roughly 1.3 million individual components and approximately 1,300 chips. It weighs close to 4,000 pounds.

That weight figure is not incidental. It is one of the first things data center operators will need to check against their structural engineering plans. Most enterprise data centers are designed for floor loads of 150 to 250 pounds per square foot. A 4,000-pound rack changes that calculation, and a 40-rack POD compounds it significantly. Colocation facilities optimized for lower-density compute are not the natural home of the Vera Rubin POD.
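The floor-load comparison above can be made concrete with a rough calculation. This is a minimal sketch, not an engineering assessment: the 4,000-pound weight comes from the article, while the rack footprint and the wider "service area" are assumed dimensions chosen only for illustration, and `floor_load_psf` is a hypothetical helper.

```python
# Rough floor-load check. The footprint and service-area dimensions are
# assumptions for illustration, not NVIDIA specifications.
SQFT_PER_SQM = 10.7639  # square feet in one square meter


def floor_load_psf(weight_lb: float, width_m: float, depth_m: float) -> float:
    """Average load in pounds per square foot over a rectangular area."""
    return weight_lb / (width_m * depth_m * SQFT_PER_SQM)


RACK_WEIGHT_LB = 4000        # rack weight quoted in the article
FOOTPRINT_M = (0.6, 1.2)     # assumed 600 mm x 1200 mm rack footprint
SERVICE_AREA_M = (1.2, 1.8)  # assumed area once a share of aisle is included

footprint_psf = floor_load_psf(RACK_WEIGHT_LB, *FOOTPRINT_M)
spread_psf = floor_load_psf(RACK_WEIGHT_LB, *SERVICE_AREA_M)

print(f"Load on rack footprint: {footprint_psf:.0f} psf")
print(f"Load over service area: {spread_psf:.0f} psf")
```

Under these assumptions, the load concentrated on the rack's own footprint lands well above the 150 to 250 psf design range, while the same weight averaged over a larger area falls back inside it. How the load actually spreads depends on the floor system and layout, which is why the check belongs with a structural engineer.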

700 Million Tokens Per Rack: The Infrastructure Math

The performance numbers are the reason operators will absorb those engineering constraints. According to data reviewed at GTC, a single Vera Rubin NVL72 rack delivers a peak output of 700 million tokens per second. The previous generation of NVIDIA hardware, Blackwell, delivered 22 million tokens per second from a full 1-gigawatt data center. In the same power envelope, Vera Rubin delivers roughly 32 times more (700 / 22 ≈ 31.8). On efficiency, NVIDIA is claiming a 350x improvement in tokens per watt over a two-year span, against roughly 1.5x expected from Moore's Law over the same period.
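The ratios quoted above are easy to reproduce. The sketch below uses only the article's figures. The per-year rates are an added illustration that assumes each two-year gain compounds evenly, which the source does not state.

```python
# Figures quoted in the article.
BLACKWELL_TOKENS_PER_SEC = 22e6
VERA_RUBIN_TOKENS_PER_SEC = 700e6

throughput_ratio = VERA_RUBIN_TOKENS_PER_SEC / BLACKWELL_TOKENS_PER_SEC

CLAIMED_EFFICIENCY_GAIN = 350.0  # tokens per watt, over two years
MOORES_LAW_GAIN = 1.5            # article's Moore's Law baseline, same period
YEARS = 2

# Assumption: the gain compounds evenly, so the annual factor is the
# YEARS-th root of the total gain.
claimed_per_year = CLAIMED_EFFICIENCY_GAIN ** (1 / YEARS)
moore_per_year = MOORES_LAW_GAIN ** (1 / YEARS)

print(f"Throughput ratio: {throughput_ratio:.1f}x")
print(f"Claimed efficiency gain per year: {claimed_per_year:.1f}x")
print(f"Moore's Law baseline per year: {moore_per_year:.2f}x")
```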

The official NVIDIA figures peg the NVL72 at 4x better training performance and 10x better inference performance per watt relative to Blackwell, along with one-tenth the token cost. For hyperscalers running inference at scale — serving hundreds of millions of users daily — those economics are not marginal improvements. They represent a fundamental change in the cost structure of AI delivery. Satya Nadella confirmed during the conference that the first Vera Rubin rack is already deployed and running inside Microsoft Azure.

Token consumption globally now exceeds 10 quadrillion tokens per year and is growing as agentic AI workloads — in which AI systems call tools, run code, and orchestrate multi-step workflows with other AI agents — dramatically expand the volume of tokens generated machine-to-machine rather than human-to-machine. The Vera Rubin POD was designed with that trajectory in mind.

Hot Water Cooling and the Facility Redesign

The thermal architecture of the Vera Rubin NVL72 is where the data center implications get structural. The rack runs on 45°C hot water cooling — a design that moves thermal management directly into the rack rather than relying on the facility's chilled-air systems. The implications cascade through every layer of data center design.

Traditional high-density data centers are built around precision air conditioning: raised floor plenums, Computer Room Air Handlers (CRAHs), hot-aisle/cold-aisle containment systems, and often significant overhead in cooling capacity. That infrastructure is expensive, space-consuming, and inherently lossy — cold air absorbs heat inefficiently compared to liquid, and the energy overhead of moving large volumes of chilled air is substantial.

The hot water cooling approach in the NVL72 rack eliminates much of that. The rack itself becomes the primary thermal exchange point, using water circulated at 45°C — warm enough to enable free cooling via cooling towers or direct exchange in many climates, rather than energy-intensive mechanical refrigeration. Facilities that invest in the hydronic infrastructure to support this architecture can reclaim significant floor space and reduce cooling-related power overhead.

The tradeoff is upfront investment. Data centers not already plumbed for liquid cooling will need to retrofit or rebuild those systems. For greenfield builds targeting Vera Rubin deployments, designing around liquid cooling from the ground up is straightforward. For existing colo operators trying to accommodate POD-equipped tenants, the retrofit calculus is more complex.

Cable-Free Assembly: What 5-Minute Tray Installation Means

One of the more underappreciated GTC announcements concerns not performance but serviceability. The Vera Rubin NVL72 uses a PCB midplane to replace the traditional cabling that connects compute trays, NVLink switch trays, and power infrastructure. The result is a rack that is, in Huang's description, completely cable-free, hose-free, and fanless at the tray level.

Assembly time for a single compute tray dropped from nearly two hours to five minutes. Rack installation time dropped from two days to two hours. Those numbers matter enormously in high-scale deployments where operators are standing up dozens or hundreds of racks simultaneously — and where a failed component previously meant hours of service time.
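Both improvements work out to the same factor, which a short calculation shows. The durations are the article's round numbers ("nearly two hours" is treated as two hours). The per-rack tray total assumes the 18 compute trays are assembled one after another, which is an illustrative assumption rather than a described workflow.

```python
from datetime import timedelta

# Durations quoted in the article, rounded.
TRAY_BEFORE = timedelta(hours=2)
TRAY_AFTER = timedelta(minutes=5)
RACK_BEFORE = timedelta(days=2)
RACK_AFTER = timedelta(hours=2)

# Dividing one timedelta by another yields a plain float ratio.
tray_speedup = TRAY_BEFORE / TRAY_AFTER
rack_speedup = RACK_BEFORE / RACK_AFTER

# Assumption: 18 compute trays per rack, assembled serially.
COMPUTE_TRAYS = 18
trays_before = COMPUTE_TRAYS * TRAY_BEFORE
trays_after = COMPUTE_TRAYS * TRAY_AFTER

print(f"Tray assembly speedup: {tray_speedup:.0f}x")
print(f"Rack installation speedup: {rack_speedup:.0f}x")
print(f"All compute trays: {trays_before} before, {trays_after} after")
```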

The POD's copper cable infrastructure is extensive — 5,000 copper cables spanning more than two miles run through the full 40-rack system — but that complexity is engineered and contained within the rack and spine architecture, rather than being something operators manage by hand. All compute trays, NVLink switch trays, and power and cooling components are modular and hot-swappable, designed for in-field replacement without draining racks or interrupting active workloads.

The third-generation MGX rack architecture underpins all five rack types in the POD, sharing common power, cooling, and mechanical envelopes. Across more than 80 ecosystem partners, this means components, cooling hardware, and power distribution units are standardized and interchangeable — a meaningful operational simplification for operators managing large fleets.

The Groq 3 LPX Rack: A Dedicated Inference Pipeline

The most technically novel addition to the Vera Rubin POD is the NVIDIA Groq 3 LPX rack, which represents the first time NVIDIA has offered dedicated inference-optimized hardware as part of its data center platform. Each rack contains 256 Language Processing Units (LPUs), technology NVIDIA licensed from Groq in late 2025 in a deal reportedly valued at $20 billion.

The engineering rationale is specific to how large language models generate output. Inference splits into two distinct phases. In prefill, the user's tokenized input is processed in one pass and the model does its "thinking", a compute-intensive, highly parallelizable operation that GPU clusters handle well. In decode, the model generates tokens sequentially, one at a time, a latency-sensitive operation that GPUs are not optimized for. The Groq LPU was purpose-built for decode: 500MB of on-chip SRAM accessible at extremely low latency, with the decode path statically compiled at model load time to eliminate scheduling overhead.

The combination of NVL72 and Groq 3 LPX — orchestrated by NVIDIA's Dynamo inference software, which disaggregates the pipeline by routing prefill to GPUs and decode to LPUs — delivers up to 35x more tokens and up to 10x more revenue opportunity for trillion-parameter models relative to Blackwell. The Groq 3 LPX racks can operate as a single inference engine across multiple units, connected by direct chip-to-chip copper spines.
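The disaggregation idea can be illustrated with a toy router. This is a minimal sketch of the routing rule as the article describes it (prefill to GPUs, decode to LPUs). It is not Dynamo's API: every name here (`Phase`, `Pool`, `route`, `serve`, and the pool labels) is hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Phase(Enum):
    PREFILL = "prefill"  # parallel pass over the whole prompt
    DECODE = "decode"    # sequential, token-by-token generation


@dataclass
class Pool:
    """A hypothetical pool of accelerators with a simple work queue."""
    name: str
    queue: list = field(default_factory=list)


# Hypothetical pools; the mapping mirrors the article's description only.
GPU_POOL = Pool("nvl72-gpu")
LPU_POOL = Pool("lpx-lpu")
ROUTES = {Phase.PREFILL: GPU_POOL, Phase.DECODE: LPU_POOL}


def route(request_id: str, phase: Phase) -> str:
    """Queue the request on the pool assigned to this phase."""
    pool = ROUTES[phase]
    pool.queue.append(request_id)
    return pool.name


def serve(request_id: str) -> list:
    """Send one request through prefill, then decode; return where each ran."""
    return [route(request_id, Phase.PREFILL), route(request_id, Phase.DECODE)]


placement = serve("req-1")
print(placement)
```

A production system would also have to hand the intermediate state produced during prefill over to the decode hardware. That step is left out here to keep the routing rule itself visible.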

For real-time agentic applications — where AI agents must respond rapidly to each other and to users — this low-latency inference capacity is increasingly the limiting factor in system performance, not GPU compute. The Groq 3 LPX addresses that bottleneck directly.

The Vera CPU Rack and Agentic Scale

The Vera CPU rack is the third major component of the POD and addresses a constraint that the GPU-centric data center narrative has largely overlooked: agentic AI workflows generate enormous CPU demand. When AI agents call APIs, execute code in sandboxed environments, run SQL queries, and compile results for validation before passing them back to GPU-accelerated systems, the bottleneck is often not GPU compute but CPU availability.

The Vera CPU rack houses 256 liquid-cooled Vera processors alongside 64 BlueField-4 DPUs, providing more than 22,500 cores and 400 terabytes of memory. NVIDIA claims the rack can sustain more than 22,500 concurrent reinforcement learning or agent sandbox environments. The Vera processor itself uses 88 custom Olympus Arm cores, LPDDR5X memory with up to 1.2 terabytes per second of bandwidth, and NVLink Chip-to-Chip (C2C) interconnects for direct attachment to Rubin GPUs, enabling the CPU and GPU to share memory space without the latency overhead of conventional PCIe connections.
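The rack-level totals follow from the per-processor figures. The sketch below uses only numbers quoted above. The per-CPU memory and the CPU-to-DPU ratio are simple averages derived from those totals, not published specifications.

```python
# Figures quoted in the article.
VERA_CPUS_PER_RACK = 256
CORES_PER_CPU = 88
DPUS_PER_RACK = 64
RACK_MEMORY_TB = 400

total_cores = VERA_CPUS_PER_RACK * CORES_PER_CPU

# Derived averages (implied by the totals, not stated by NVIDIA).
memory_per_cpu_tb = RACK_MEMORY_TB / VERA_CPUS_PER_RACK
cpus_per_dpu = VERA_CPUS_PER_RACK / DPUS_PER_RACK

print(f"Total cores: {total_cores}")
print(f"Average memory per CPU: {memory_per_cpu_tb:.2f} TB")
print(f"CPUs per DPU: {cpus_per_dpu:.0f}")
```

The core count matches the "more than 22,500" figure, and it lines up with the claimed number of concurrent sandbox environments at roughly one environment per core.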

Jensen Huang's framing of standalone CPU sales as "already for sure going to be a multi-billion-dollar business" reflects a recognition that the infrastructure requirements of agentic AI are broader than the GPU cluster paradigm has accommodated. The Vera CPU rack is the architectural answer to that gap.

Scaling Up: Rubin Ultra, Kyber, and the Path to Exascale

Above the NVL72, NVIDIA introduced two additional scaling tiers that define the trajectory for hyperscaler deployments. The Vera Rubin Ultra NVL576 links eight NVL72 racks — each equipped with 72 next-generation Rubin Ultra GPUs — into a single 576-GPU NVLink domain via copper and direct optical connections. A single Rubin Ultra chip reportedly delivers 100 petaflops in the FP4 data format, with four compute dies each exceeding 800 square millimeters paired with 16 HBM4e memory stacks totaling one terabyte of capacity.

The Kyber rack introduces a vertical compute design that doubles the NVLink domain per rack to 144 GPUs, using layered horizontal boards — compute hardware at the front, a midplane behind it, and an NVLink backplane at the rear — to radically simplify installation. Eight Kyber racks form the NVL1152 configuration at 1,152 GPUs. Huang described Kyber explicitly as the architectural foundation for NVIDIA's next-generation Feynman platform, expected in 2028.

For context on what a complete Vera Rubin POD represents at the full 40-rack scale: 1.2 quadrillion transistors, nearly 20,000 NVIDIA dies, 1,152 Rubin GPUs, 60 exaflops of compute, and 10 petabytes per second of scale-up bandwidth. That is not a server cluster. It is a pre-configured national-class supercomputer delivered as a product.
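The GPU counts across these tiers are consistent with one another, as a quick tally shows. All inputs come from the article. The number of NVL72 racks inside a 40-rack POD is not stated in the source, so it is inferred here from the 1,152-GPU total and should be read as an assumption.

```python
# Figures quoted in the article.
GPUS_PER_NVL72 = 72
RACKS_PER_NVL576 = 8
GPUS_PER_KYBER_RACK = 2 * GPUS_PER_NVL72  # "doubles the NVLink domain per rack"
RACKS_PER_NVL1152 = 8
POD_RACKS = 40
POD_GPUS = 1152

nvl576_gpus = RACKS_PER_NVL576 * GPUS_PER_NVL72
nvl1152_gpus = RACKS_PER_NVL1152 * GPUS_PER_KYBER_RACK

# Inference, not a stated figure: if every POD GPU sits in an NVL72 rack,
# this many of the 40 racks are NVL72 compute racks.
implied_nvl72_racks = POD_GPUS // GPUS_PER_NVL72
other_racks = POD_RACKS - implied_nvl72_racks

print(f"NVL576 GPUs: {nvl576_gpus}")
print(f"Kyber rack GPUs: {GPUS_PER_KYBER_RACK}")
print(f"NVL1152 GPUs: {nvl1152_gpus}")
print(f"Implied NVL72 racks in a POD: {implied_nvl72_racks} of {POD_RACKS}")
```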

What Data Center Operators Need to Plan For

The Vera Rubin POD is not a product data center operators can accommodate with a firmware update or a new cabling vendor. It is a facilities transformation. The checklist for operators considering POD deployments spans structural, mechanical, and electrical engineering:

Floor load: 4,000-pound racks require reinforced structural support. Facilities engineers will need to verify floor load ratings against the per-rack and per-pod aggregate weight, particularly in existing buildings not designed for this density.

Liquid cooling infrastructure: Hydronic systems capable of circulating water at 45°C must be installed or retrofitted. Greenfield builds should be designed around this from the start. Colo operators accommodating POD tenants will need dedicated liquid cooling loops, not just supplemental in-row cooling units.

Power density: The NVL72's performance gains come with higher per-rack power draw at these densities. Electrical infrastructure, including switchgear, UPS systems, and PDUs, must be sized accordingly.

Physical footprint: A 40-rack POD requires dedicated floor area in contiguous, accessible space. The larger scale-up configurations, NVL576 and NVL1152, compound this. Facilities without large contiguous floor plates will face deployment constraints.

The first Vera Rubin systems are already shipping to hyperscalers. The broader availability for enterprise and colo deployment is targeted for the second half of 2026. Operators with the infrastructure — or the capital to build it — are positioned for a significant competitive window. Those without are facing a facilities decision that cannot be deferred indefinitely.

As NVIDIA puts it: the AI factory is no longer a metaphor. The Vera Rubin POD is the floor plan.