Broadcom just posted numbers that would have seemed implausible eighteen months ago: $8.4 billion in AI chip revenue in a single quarter, up 106% year-over-year. On Wednesday's earnings call, CEO Hock Tan looked beyond the record results and made a projection that sets the company apart from the rest of the semiconductor industry: he said Broadcom has "line of sight" to $100 billion in AI chip revenue in 2027, and that the supply chain to support it is already in place.

The Numbers That Stopped the Room

Broadcom's fiscal first quarter 2026, which ended February 1, was built on a single engine: AI. Total consolidated revenue hit $19.3 billion, up 29% from the year-earlier quarter, which was itself a record at the time. GAAP net income came in at $7.35 billion; non-GAAP net income reached $10.19 billion. Adjusted EBITDA was $13.1 billion, a 68% margin that most enterprise software companies would envy. Free cash flow was $8.01 billion, or 41% of total revenue.
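The margin figures above can be checked directly from the reported dollar amounts. The sketch below is a rough sanity check, not Broadcom's own calculation: it uses the rounded figures quoted in this article, and the helper name `pct_of_revenue` is made up for illustration.

```python
# Figures as quoted in the article, in billions of US dollars (rounded).
revenue = 19.3
adjusted_ebitda = 13.1
free_cash_flow = 8.01
gaap_net_income = 7.35
non_gaap_net_income = 10.19


def pct_of_revenue(amount: float, total: float = revenue) -> float:
    """Return `amount` as a percentage of `total` (hypothetical helper)."""
    return 100.0 * amount / total


ebitda_margin = pct_of_revenue(adjusted_ebitda)
fcf_margin = pct_of_revenue(free_cash_flow)
gaap_margin = pct_of_revenue(gaap_net_income)
non_gaap_margin = pct_of_revenue(non_gaap_net_income)

for label, value in [
    ("Adjusted EBITDA margin", ebitda_margin),
    ("Free cash flow margin", fcf_margin),
    ("GAAP net margin", gaap_margin),
    ("Non-GAAP net margin", non_gaap_margin),
]:
    print(f"{label}: {value:.1f}%")
```

Because the inputs are rounded to the nearest tenth or hundredth of a billion, the results can differ from the company's stated percentages by a rounding step.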

But the number investors actually cared about was AI revenue: $8.4 billion, driven by custom AI accelerators and AI networking, up 106% from Q1 2025. That's not outperformance in the conventional sense. That's a category change. Broadcom stock rose 5% in after-hours trading — a significant move for a company already trading at a $1 trillion-plus market cap.

The Q2 guidance was equally striking: total revenue of $22 billion, which would represent 47% growth year-over-year, with AI chip revenue specifically expected to reach $10.7 billion. At that run rate, Broadcom is on a trajectory toward $40 billion or more in annual AI chip revenue — and Tan says the ceiling is far higher than that.
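The growth rates and the run-rate claim above imply a few numbers the article does not state outright. The following is a minimal sketch, assuming the rounded figures quoted here and a simple "multiply the quarter by four" annualization; the function name `implied_prior` is illustrative only.

```python
def implied_prior(current: float, yoy_growth_pct: float) -> float:
    """Back out the year-earlier value from a current value and its YoY growth."""
    return current / (1.0 + yoy_growth_pct / 100.0)


# Figures quoted in the article, in billions of US dollars.
q1_ai_revenue = 8.4        # up 106% year-over-year
q2_total_guidance = 22.0   # would be 47% growth year-over-year
q2_ai_guidance = 10.7

# Year-earlier values implied by the stated growth rates.
prior_q1_ai = implied_prior(q1_ai_revenue, 106)
prior_q2_total = implied_prior(q2_total_guidance, 47)

# Naive annualization: assumes four quarters at the guided Q2 level.
annualized_ai_run_rate = 4 * q2_ai_guidance

print(f"Implied Q1 FY2025 AI revenue: ${prior_q1_ai:.2f}B")
print(f"Implied Q2 FY2025 total revenue: ${prior_q2_total:.2f}B")
print(f"Annualized AI run rate at Q2 guidance: ${annualized_ai_run_rate:.1f}B")
```

The annualized figure lands a little above the "$40 billion or more" cited above, which is consistent with that phrasing.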

What "Line of Sight to $100 Billion" Actually Means

Analysts who have covered semiconductor earnings for decades rarely hear a CEO claim visibility to this kind of growth in a single category within a two-year window: $100 billion would be roughly triple the current annualized run rate of about $34 billion implied by Q1's $8.4 billion. Tan's "$100 billion in AI chip revenue in 2027" claim is extraordinary, and it's grounded in something concrete: long-term supply agreements with the largest technology companies in the world.

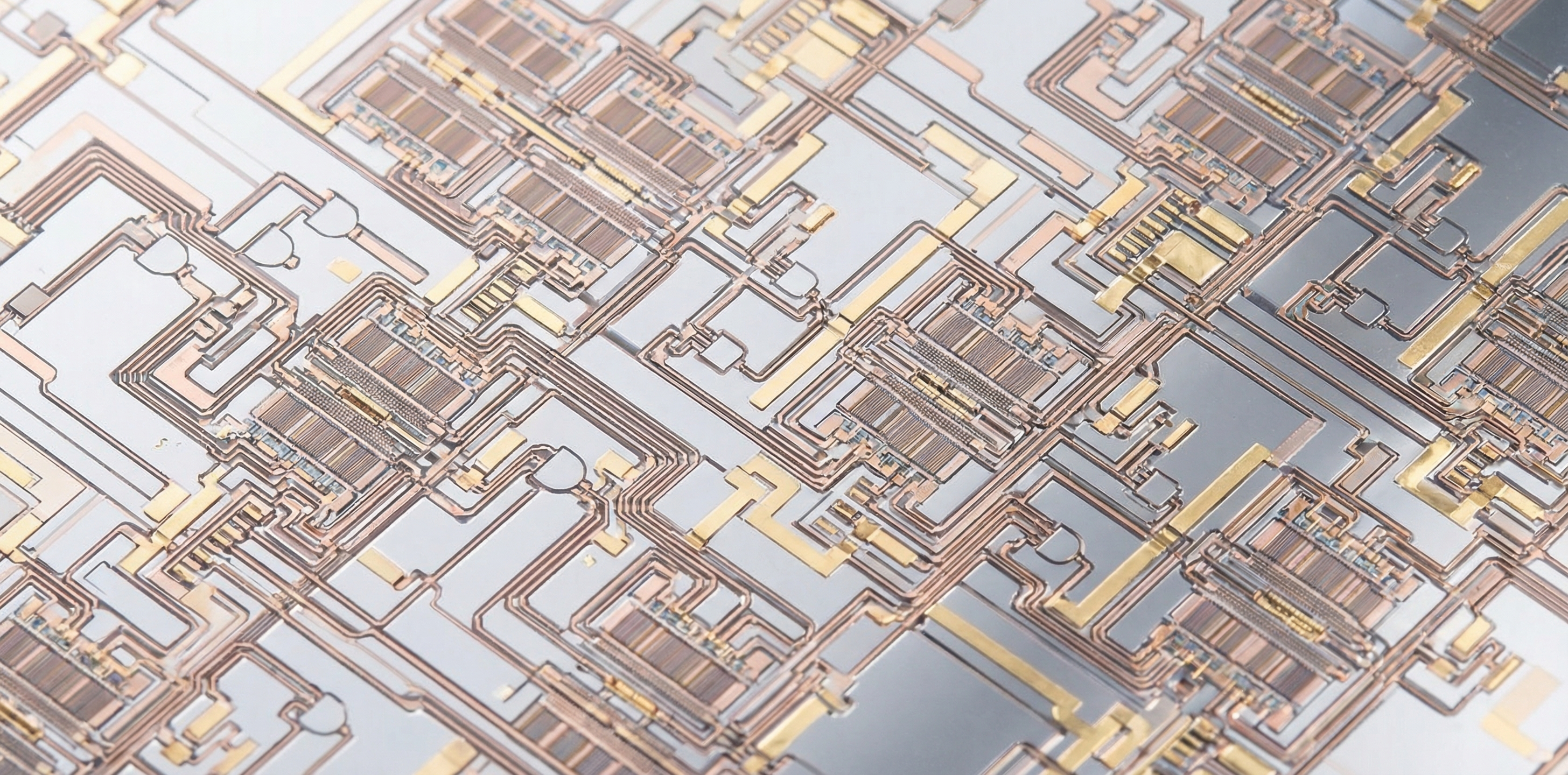

Broadcom's business model is not the same as Nvidia's. Where Nvidia sells standardized GPUs optimized for broad AI workloads, Broadcom designs and delivers custom AI accelerators — what the industry calls XPUs or ASICs — that are tailored specifically to the inference and training pipelines of individual hyperscalers. Amazon, Google, Meta, and Microsoft all design their own chips; Broadcom translates those designs into manufacturable silicon, provides the intellectual property stack, and coordinates with foundries like TSMC to bring them to life.

This model creates an unusual revenue characteristic: it is sticky, lumpy, and predictable in a way that commodity chip sales are not. When a hyperscaler commits to a custom ASIC program, they're committing to years of co-development, tooling investment, and production forecasting. The $100 billion figure isn't a revenue projection based on market share assumptions — it's derived from a known set of customer commitments that Broadcom has already secured and partially announced.

Why Hyperscalers Are Building Their Own Silicon — and Why They Need Broadcom

The shift from merchant silicon (buying chips off the shelf) to custom silicon has been building for a decade, but the generative AI era accelerated it dramatically. Training and running large language models at scale requires not just raw compute but specific memory bandwidth configurations, interconnect topologies, and power budgets that general-purpose GPUs are not optimized for. A model like Google's Gemini Ultra or Meta's LLaMA family benefits enormously from silicon designed around its architecture, not silicon designed to be good enough for everyone.

Google's TPU program, which Broadcom has worked on for years, is the longest-running proof of concept. Meta's MTIA (Meta Training and Inference Accelerator) program is newer but moving fast. Amazon's Trainium and Inferentia chips, also developed in collaboration with Broadcom, are deeply integrated into AWS's AI services stack. Microsoft's Maia program is the newest entrant. These are not research projects — they are production workloads running at a scale that makes custom silicon economics not just attractive but necessary.

The custom chip approach also gives hyperscalers something strategic that Nvidia cannot offer: independence. AMD and Meta's recent 6-gigawatt custom chip partnership illustrates how aggressively the industry is pursuing alternatives to Nvidia's dominance. But even with AMD as a second supplier, the core infrastructure for designing, validating, and manufacturing those custom chips runs through Broadcom's expertise and IP portfolio.

The AI Networking Play: Often Overlooked, Strategically Critical

When Tan refers to "custom AI accelerators and AI networking" in the same breath, the networking component deserves attention. Broadcom is the dominant provider of Ethernet switching silicon for data centers — specifically, its Tomahawk and Jericho product families form the backbone of most large-scale AI training clusters. As AI clusters scale from thousands to hundreds of thousands of accelerators, the network fabric connecting them becomes a primary performance constraint and a primary cost driver.

The company's position in AI networking is directly analogous to its position in custom accelerators: it sells differentiated, high-margin silicon to customers who have no alternative at scale. Tomahawk 6, Broadcom's current flagship switch ASIC, supports 102.4 Tbps of throughput per chip — a spec that matters when you're building a 100,000-GPU training cluster. No merchant silicon alternative currently comes close.
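To see why per-chip throughput matters at the 100,000-accelerator scale, it helps to translate 102.4 Tbps into a port count and then into network tiers. The sketch below is a back-of-the-envelope model, not a Broadcom reference design. It assumes an idealized non-blocking fat-tree built from identical switches with one port per accelerator, and the 800 Gbps and 200 Gbps port speeds are illustrative assumptions.

```python
SWITCH_CAPACITY_GBPS = 102_400  # 102.4 Tbps per chip, as stated in the article


def radix(port_speed_gbps: int) -> int:
    """Number of ports one switch chip can expose at a given port speed."""
    return SWITCH_CAPACITY_GBPS // port_speed_gbps


def max_endpoints(k: int, tiers: int) -> int:
    """Endpoints supported by a non-blocking fat-tree of k-port switches.

    Standard folded-Clos result: k for one tier, k**2 / 2 for two tiers,
    k**3 / 4 for three tiers, i.e. 2 * (k / 2) ** tiers in general.
    """
    return 2 * (k // 2) ** tiers


def tiers_needed(endpoints: int, k: int) -> int:
    """Smallest number of switch tiers that can connect `endpoints` devices."""
    tiers = 1
    while max_endpoints(k, tiers) < endpoints:
        tiers += 1
    return tiers


CLUSTER_SIZE = 100_000  # the cluster size mentioned in the article

for speed in (800, 200):  # assumed port speeds in Gbps, for illustration
    k = radix(speed)
    print(
        f"{speed} Gbps ports: radix {k}, "
        f"{tiers_needed(CLUSTER_SIZE, k)} tiers for {CLUSTER_SIZE:,} endpoints"
    )
```

Under these assumptions, a higher radix means fewer tiers, and fewer tiers means fewer switches, optics, and hops between any two accelerators. Real deployments involve oversubscription, multiple ports per accelerator, and failure domains that this model ignores.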

Together, custom accelerators and networking silicon create a coherent flywheel: as hyperscalers build larger AI clusters, they need more Broadcom accelerators and more Broadcom networking chips. Both lines grow with AI infrastructure investment, and both carry margins that reflect their strategic necessity.

VMware: The Software Moat That Funds the Chip War

Broadcom's Q1 infrastructure software segment, which includes VMware and other enterprise software acquired over the past decade, generated $6.80 billion — slightly below consensus estimates of $7.02 billion. Tan acknowledged the miss but pushed back on investor concern about software disruption from AI, stating directly: "Our infrastructure software is not disrupted by AI."

The VMware business warrants scrutiny here. Broadcom has been aggressively converting VMware's customer base from perpetual licenses to subscription contracts since the 2023 acquisition — a strategy that has generated cash but also friction with enterprise customers facing substantially higher costs. The $6.80 billion figure suggests the conversion is still working, even if the pace has moderated. More importantly, that software revenue — which carries high margins and requires minimal capital expenditure — provides the financial stability that funds Broadcom's capital-intensive custom chip programs.

The $10 billion share buyback authorized by Broadcom's board, on top of the $7.8 billion already repurchased in Q1 and $3.1 billion in dividends paid, reflects management's confidence that cash generation will remain robust even as AI chip investment scales. Broadcom returned $10.9 billion to shareholders in a single quarter while continuing to fund its engineering programs — a capital allocation profile that few technology companies can match.

The $22 Billion Quarter Ahead — and What It Signals

Broadcom's Q2 guidance of $22 billion in revenue, 47% above Q2 2025, is the kind of acceleration that resets analyst models. At $10.7 billion in AI chip revenue alone, Q2 would be larger than Broadcom's entire semiconductor solutions segment was just six quarters ago. The 68% adjusted EBITDA margin guidance is equally remarkable: holding margins at that level while revenue grows this quickly suggests the business is not being stretched to meet demand. It was already positioned for this moment.

The Q2 guidance also reinforces the $100 billion trajectory. If AI chip revenue grows from $8.4 billion in Q1 to $10.7 billion in Q2, the annualized rate for the first half of FY2026 alone is roughly $38 billion. To reach $100 billion in calendar 2027, Broadcom would need that growth to continue, but notably, it would not need to accelerate significantly. The hyperscaler capex commitments that are already visible suggest sustained growth is precisely what Tan is counting on.
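The trajectory argument can be made concrete with a simple compounding model. The sketch below is illustrative and rests on loud assumptions: growth is a constant quarter-over-quarter rate, and "2027" is approximated by the four fiscal quarters that begin three quarters after Q2 FY2026, which ignores the offset between Broadcom's fiscal year and the calendar year. The function names are hypothetical.

```python
q1_ai, q2_ai = 8.4, 10.7  # AI chip revenue in $B: Q1 actual, Q2 guidance

first_half_annualized = 2 * (q1_ai + q2_ai)
implied_qoq = q2_ai / q1_ai - 1.0  # sequential growth implied by guidance

# Assumption: the target year's first quarter is 3 quarters after Q2 FY2026.
FIRST_OFFSET = 3


def year_total(start: float, qoq: float, first_offset: int, n: int = 4) -> float:
    """Sum n consecutive quarters under constant QoQ growth from `start`."""
    return sum(start * (1.0 + qoq) ** (first_offset + i) for i in range(n))


def required_qoq(target: float, start: float, first_offset: int) -> float:
    """Bisect for the constant QoQ growth rate that makes the year hit `target`."""
    lo, hi = 0.0, 1.0
    while hi - lo > 1e-9:
        mid = (lo + hi) / 2.0
        if year_total(start, mid, first_offset) < target:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


needed_qoq = required_qoq(100.0, q2_ai, FIRST_OFFSET)

print(f"First-half annualized AI revenue: ${first_half_annualized:.1f}B")
print(f"Implied Q1-to-Q2 growth: {implied_qoq:.1%}")
print(f"Constant QoQ growth needed for a $100B year: {needed_qoq:.1%}")
```

Under these assumptions, the required sequential growth comes out at about 20% per quarter, below the roughly 27% implied by the Q1-to-Q2 step. That is consistent with the point above that growth has to continue but does not have to accelerate. Shifting the quarter alignment or assuming growth that decays over time would change the answer.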

The Broader Implications for AI Hardware

Broadcom's results don't just tell us about Broadcom. They tell us about the rate of AI infrastructure investment being made by the largest technology companies in the world — companies that collectively plan to spend hundreds of billions on AI-capable hardware over the next two years.

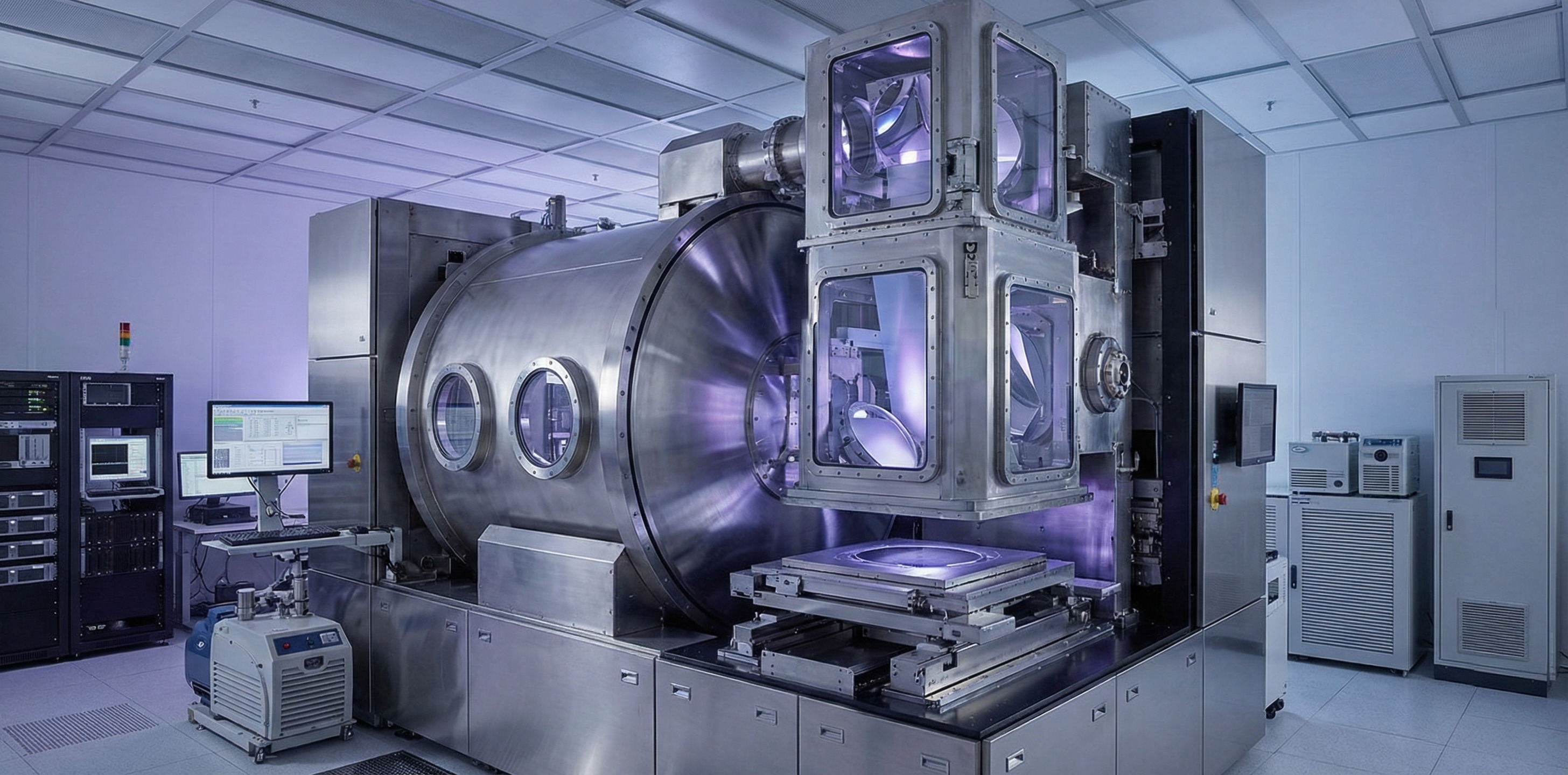

For every dollar of custom silicon revenue Broadcom reports, there are corresponding investments in TSMC wafer capacity, ASML lithography equipment, HBM memory from SK Hynix and Samsung, and data center real estate to house the resulting systems. The $8.4 billion number is a downstream signal of upstream commitments that stretch back 12 to 24 months — which means the AI infrastructure wave that produced Q1's results was locked in well before most of the current market debate about AI's economic return had begun.

The question this earnings report raises isn't whether AI infrastructure investment is real. It clearly is. The question is whether the economic returns that justify it will materialize at the pace the capital is being committed. On that score, Broadcom — which profits regardless of whether AI models ultimately deliver their promised productivity gains — has the best seat in the industry to watch the answer emerge.