When NVIDIA unveiled the Vera Rubin platform at CES 2026, most headlines focused on a single number: 10x lower inference token cost than Blackwell. That framing misses the point. The Vera Rubin NVL72 isn't just a faster GPU. It's a new category of machine: a rack-scale AI supercomputer defined by extreme co-design across six chips, built from the ground up for an era when data centers don't just serve compute but produce intelligence at industrial scale.

With H2 2026 deployments now confirmed at AWS, Google Cloud, Microsoft Azure, CoreWeave, and a dozen more partners, the Rubin platform is about to reshape the economics, architecture, and competitive map of global AI infrastructure. Here's an in-depth look at what it actually is, how it works, and why it matters far beyond the benchmark sheets.

The Premise: From Servers to AI Factories

NVIDIA's framing for Rubin begins not with a GPU spec, but with a fundamental claim about the nature of AI workloads in 2026. Traditional data centers were built around discrete requests: a web query, a database lookup, a rendering job. Each is stateless, bounded, and forgettable once served. Modern AI workloads, by contrast, behave like continuous reasoning processes. An agentic AI system running a multi-step business analysis isn't handling one request; it's maintaining a long-context "thought chain" across thousands of sequential inference steps, each one consuming and producing context state that must persist across GPU execution windows.

This shift breaks the traditional server model in three ways:

- Context management: The KV cache for a single 500,000-token context can run to hundreds of gigabytes, so a handful of concurrent long-context sessions can exceed the memory an entire Blackwell server has available. Inference state cannot live on GPU HBM alone.

- Interconnect saturation: MoE (Mixture-of-Experts) model inference requires all-to-all GPU communication patterns that bottleneck on traditional InfiniBand or Ethernet at scale.

- Power predictability: AI factories running always-on inference workloads need deterministic power delivery and thermal management — not the bursty, interruptible profiles of traditional HPC clusters.

NVIDIA's answer is to redefine the unit of compute. With Rubin, the rack — not the GPU server — is the product. Every design decision flows from that premise.
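To make the context-management pressure concrete, here is a rough sizing sketch for the key/value cache of a single long-context session. The model dimensions (80 layers, 8 KV heads, head dimension 128, 16-bit values) are hypothetical and chosen only for illustration; they are not a Rubin specification or any particular vendor's model. The 288GB figure is the per-GPU HBM4 capacity quoted later in this article.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Bytes of KV cache for one sequence: keys plus values, at every layer."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


# Hypothetical model shape (an assumption for illustration only).
per_session_bytes = kv_cache_bytes(
    tokens=500_000, layers=80, kv_heads=8, head_dim=128
)
per_session_gb = per_session_bytes / 1e9

HBM_PER_GPU_GB = 288  # per-GPU HBM4 capacity quoted in this article
sessions_per_gpu = int(HBM_PER_GPU_GB // per_session_gb)

print(f"KV cache per 500k-token session: {per_session_gb:.2f} GB")
print(f"Sessions fitting in one GPU's HBM (ignoring weights): {sessions_per_gpu}")
```

Under these assumptions a single session already consumes more than half of one GPU's HBM before any model weights are loaded, which is why context state has to spill into other memory tiers.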

Six Chips, One Machine: The Architecture

The Vera Rubin NVL72 integrates six distinct NVIDIA-designed chips into a single coherent platform. This isn't a GPU plus some networking accessories — it's a co-designed system where every component was architected to eliminate the bottlenecks that held Blackwell back at the frontier scale.

The Rubin GPU: 288GB HBM4, Roughly 22 TB/s Per-GPU Bandwidth

The Rubin GPU more than doubles Blackwell's per-GPU memory bandwidth. Each GPU carries 288GB of HBM4 memory with roughly 22 terabytes per second of bandwidth, which is what the rack-level aggregate of 1.6 PB/s across 72 GPUs works out to per GPU. The Transformer Engine, now in its third generation, handles NVFP4 precision arithmetic for inference, enabling the platform's headline 3.6 exaFLOPS of NVFP4 inference performance per NVL72 rack across 72 GPUs, or 50 petaFLOPS per GPU.

But raw FLOPS tell an incomplete story. HBM4's bandwidth improvement is arguably more consequential. The token-by-token decode phase of large language model inference is typically memory-bandwidth-bound, not compute-bound: the GPU spends most of its time moving data between HBM and compute cores, not actually performing multiplications. In that regime, throughput scales roughly in proportion to bandwidth, so each multiple of bandwidth lifts the practical inference throughput ceiling by about the same multiple until compute becomes the constraint.
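A back-of-the-envelope model shows why bandwidth sets the ceiling in this regime. The sketch below assumes every generated token must stream the model's active weights from HBM once, and ignores KV cache reads, batching, and overlap with compute. The model size (70 billion active parameters at 4 bits each) and the two bandwidth values are illustrative assumptions, not vendor specifications.

```python
def decode_tokens_per_second(bandwidth_tb_s, bytes_read_per_token):
    """Upper bound on single-stream decode rate when weight reads dominate."""
    return bandwidth_tb_s * 1e12 / bytes_read_per_token


# Hypothetical model: 70e9 active parameters stored at 0.5 bytes (4-bit) each.
active_weight_bytes = 70e9 * 0.5

# Illustrative bandwidths (assumptions, not measured or quoted figures).
base_rate = decode_tokens_per_second(10.0, active_weight_bytes)
doubled_rate = decode_tokens_per_second(20.0, active_weight_bytes)

print(f"Ceiling at 10 TB/s: {base_rate:.1f} tokens/s")
print(f"Ceiling at 20 TB/s: {doubled_rate:.1f} tokens/s")
print(f"Speedup from 2x bandwidth: {doubled_rate / base_rate:.1f}x")
```

Real deployments batch many requests to amortize each weight read, so absolute numbers differ, but the proportional relationship between bandwidth and the decode ceiling is the point of the sketch.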

The Vera CPU: ARM-Based With Spatial Multi-Threading

The Vera CPU is NVIDIA's first data center processor built on custom-designed ARM cores, created specifically to serve as an intelligent orchestration layer for the Rubin GPUs. Each NVL72 rack contains 36 Vera CPUs (one for each pair of GPUs, in a 2-GPU Vera Rubin Superchip configuration). Each Vera CPU features 88 "Olympus" ARM cores with Spatial Multi-Threading, NVIDIA's take on SMT that optimizes thread scheduling for the irregular, latency-sensitive workloads characteristic of agentic AI inference pipelines.

The Vera CPU also controls 1.5TB of LPDDR5x memory per chip, providing a fast intermediate cache tier between GPU HBM4 and the new ICMS storage layer. Across 36 CPUs in an NVL72 rack, that totals 54TB of LPDDR5x capacity: a substantial intermediate memory pool that dramatically reduces the frequency with which inference state must be evicted to slower storage.

NVLink 6: 260 TB/s of Scale-Up Bandwidth

The networking story is where Rubin makes its most dramatic departure from prior platforms. NVLink 6 delivers 3.6 TB/s of all-to-all, scale-up bandwidth per GPU. Across the full 72-GPU NVL72 rack, that yields 260 TB/s of scale-up networking bandwidth — a figure NVIDIA notes exceeds the total cross-sectional bandwidth of the global internet.

This matters enormously for MoE model inference. In a Mixture-of-Experts architecture, any given token's processing requires activating a subset of expert networks that may be distributed across different GPUs. Each inference step triggers potentially dozens of GPU-to-GPU transfers. At Blackwell-era bandwidth, this becomes a latency wall at large scale. NVLink 6's 2x bandwidth increase over NVLink 5, combined with lower-latency signaling, is intended to remove this bottleneck for the model sizes currently anticipated.
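The scale of that traffic can be estimated with a simple bandwidth-only model: each token's hidden state is dispatched to its selected experts and the results are combined back, once per MoE layer. The batch size, hidden dimension, expert count per token, and layer count below are hypothetical. The 3.6 TB/s figure is the per-GPU NVLink 6 bandwidth quoted above, and 1.8 TB/s follows from the stated 2x increase over NVLink 5. The sketch treats every selected expert as remote and ignores latency and overlap with compute.

```python
def all_to_all_seconds(tokens_per_gpu, hidden_dim, top_k, moe_layers,
                       link_tb_s, bytes_per_value=2):
    """Bandwidth-only time one GPU spends on expert dispatch plus combine."""
    # Factor of 2: activations go out to the experts and results come back.
    bytes_per_layer = tokens_per_gpu * hidden_dim * bytes_per_value * top_k * 2
    return bytes_per_layer * moe_layers / (link_tb_s * 1e12)


# Hypothetical workload shape (assumptions for illustration only).
workload = dict(tokens_per_gpu=4096, hidden_dim=8192, top_k=8, moe_layers=60)

t_nvlink5 = all_to_all_seconds(link_tb_s=1.8, **workload)
t_nvlink6 = all_to_all_seconds(link_tb_s=3.6, **workload)

print(f"NVLink 5 (1.8 TB/s): {t_nvlink5 * 1e3:.1f} ms per forward pass")
print(f"NVLink 6 (3.6 TB/s): {t_nvlink6 * 1e3:.1f} ms per forward pass")
```

In this simplified model the communication time halves exactly with the doubled link rate; in practice the gain also depends on message sizes, routing skew, and how well transfers overlap with expert computation.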

NVIDIA Inference Context Memory Storage (ICMS): The New Infrastructure Tier

Perhaps the most architecturally novel element of the Rubin platform is ICMS — the NVIDIA Inference Context Memory Storage system, powered by the BlueField-4 DPU. ICMS represents a new memory tier specifically designed for agentic AI's key challenge: inference state that outlives a single GPU execution window.

Traditional KV cache management forces AI systems to evict context when GPU HBM fills up, requiring costly recomputation when that context is needed again. ICMS adds a high-speed, AI-native storage tier below GPU HBM and the Vera CPU's memory pool and above conventional networked storage, managed by the BlueField-4 DPU. This allows inference context, the accumulated "memory" of an ongoing agentic reasoning chain, to persist across execution windows without expensive recomputation penalties.
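The difference between evicting context and spilling it to a lower tier can be illustrated with a toy simulation. The class below is a conceptual sketch only: `TieredContextCache`, its methods, and its counters are hypothetical names invented here, and nothing in it reflects NVIDIA's actual ICMS or BlueField-4 interfaces. The fast tier stands in for GPU HBM, the slow tier for a persistent context store, and a "prefill" is the expensive step of building (or rebuilding) a session's context from scratch.

```python
from collections import OrderedDict


class TieredContextCache:
    """Toy LRU cache that can spill evicted sessions to a slower tier."""

    def __init__(self, fast_capacity, spill_enabled):
        self.fast = OrderedDict()  # session_id -> context, in LRU order
        self.slow = {}             # spilled sessions (unbounded in this toy)
        self.fast_capacity = fast_capacity
        self.spill_enabled = spill_enabled
        self.prefills = 0          # expensive builds or rebuilds of context
        self.reloads = 0           # cheaper fetches from the slow tier

    def access(self, session_id):
        if session_id in self.fast:
            self.fast.move_to_end(session_id)
        elif session_id in self.slow:
            self.reloads += 1
            self._admit(session_id, self.slow.pop(session_id))
        else:
            self.prefills += 1
            self._admit(session_id, f"context-for-{session_id}")

    def _admit(self, session_id, context):
        self.fast[session_id] = context
        while len(self.fast) > self.fast_capacity:
            victim, victim_context = self.fast.popitem(last=False)
            if self.spill_enabled:
                self.slow[victim] = victim_context  # keep instead of dropping


trace = ["a", "b", "c", "a", "b", "c"]  # three sessions, room for two

evict_only = TieredContextCache(fast_capacity=2, spill_enabled=False)
with_spill = TieredContextCache(fast_capacity=2, spill_enabled=True)
for session in trace:
    evict_only.access(session)
    with_spill.access(session)

print("evict-only prefills:", evict_only.prefills)
print("with-spill prefills:", with_spill.prefills, "reloads:", with_spill.reloads)
```

With the spill tier enabled, each session is prefilled once and later fetched back, while the evict-only cache rebuilds context on every return visit. Whether that trade pays off in practice depends on how much cheaper a reload is than a recompute, which is exactly what a dedicated context tier is meant to improve.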

For enterprise customers running agentic AI workflows (automated research agents, multi-step coding assistants, persistent customer service bots), ICMS is potentially the single feature that enables the next class of applications. The ability to maintain a coherent 500,000-token context across hours of inference without recomputation is the difference between a capable assistant and a genuinely reasoning system.

Spectrum-X Ethernet Photonics: Co-Packaged Optics at Scale

For scale-out networking between NVL72 racks, NVIDIA is introducing the Spectrum-X Ethernet Photonics switch — the world's first co-packaged optical switch system optimized for AI factory scale. Built on Spectrum-6 architecture, it delivers 5x better power efficiency and 5x longer uptime versus conventional Ethernet switches, while scaling to the hundreds of thousands of GPUs required for frontier model training runs.

The co-packaged optics approach solves a thermal and signal integrity problem that has plagued hyperscale networking: at very high port densities, traditional pluggable transceivers burn more power and introduce more latency than co-packaged alternatives. By integrating the optical interface directly on the switch ASIC package, Spectrum-X Photonics eliminates the electrical-to-optical conversion bottleneck and dramatically reduces the power budget per terabit of networking bandwidth.

ConnectX-9 SuperNIC: The AI-Optimized Network Adapter

Rounding out the six-chip platform is the ConnectX-9 SuperNIC, NVIDIA's new network adapter designed specifically for AI factory traffic patterns. Where conventional NICs optimize for throughput on large, sequential transfers, the ConnectX-9 targets the small-message, all-to-all collective communication patterns that dominate distributed AI training and inference. Combined with the Spectrum-X switch fabric and BlueField-4 DPU, it completes a vertically integrated networking stack purpose-built for intelligence production.

System Performance: Numbers That Require Context

The NVL72 rack-level specifications are striking in isolation; they become genuinely significant when benchmarked against real deployment economics:

- 3.6 exaFLOPS NVFP4 inference per rack — versus Blackwell NVL72's roughly 720 petaFLOPS (5x improvement)

- 2.5 exaFLOPS NVFP4 training per rack

- 20.7TB HBM4 capacity per rack with 1.6 PB/s of aggregate HBM bandwidth

- 54TB LPDDR5x (via Vera CPU) for intermediate context storage

- 10x lower inference token cost vs. Blackwell

- 4x fewer GPUs required to train equivalent MoE models

The "10x lower token cost" and "4x fewer GPUs for training" figures deserve particular attention. These aren't benchmarks on artificial workloads — they represent the practical cost-per-unit-intelligence metric that AI companies track to measure infrastructure ROI. For a hyperscaler running inference at the scale of billions of daily user queries, a 10x reduction in token cost isn't an incremental improvement. It's the difference between marginal profitability and structural competitiveness.

Similarly, training frontier MoE models currently requires thousands of GPUs over weeks of continuous computation. A 4x reduction in GPU count for equivalent training runs means the difference between a $200M training run and a $50M one — making frontier model training accessible to a significantly wider set of players.
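The rack-level figures listed above can be cross-checked against per-chip numbers with a few lines of arithmetic. All inputs below are values quoted in this article (72 GPUs and 36 CPUs per rack, 288GB HBM4 and 3.6 TB/s of NVLink 6 per GPU, and the rack totals for compute, HBM bandwidth, and LPDDR5x); the sketch only multiplies and divides them.

```python
GPUS_PER_RACK = 72
CPUS_PER_RACK = 36

# Per-GPU figures scaled up to the rack.
hbm_capacity_tb = GPUS_PER_RACK * 288 / 1000   # 288 GB HBM4 per GPU
nvlink_total_tb_s = GPUS_PER_RACK * 3.6        # 3.6 TB/s NVLink 6 per GPU

# Rack figures scaled down to a single chip.
inference_pflops_per_gpu = 3.6e3 / GPUS_PER_RACK  # 3.6 exaFLOPS per rack
hbm_tb_s_per_gpu = 1.6e3 / GPUS_PER_RACK          # 1.6 PB/s per rack
lpddr_tb_per_cpu = 54 / CPUS_PER_RACK             # 54 TB per rack

print(f"HBM4 capacity per rack:   {hbm_capacity_tb:.1f} TB")
print(f"NVLink 6 per rack:        {nvlink_total_tb_s:.0f} TB/s")
print(f"NVFP4 inference per GPU:  {inference_pflops_per_gpu:.0f} PFLOPS")
print(f"HBM bandwidth per GPU:    {hbm_tb_s_per_gpu:.1f} TB/s")
print(f"LPDDR5x per Vera CPU:     {lpddr_tb_per_cpu:.1f} TB")
```

The same style of check applies to the cost claims: a 4x reduction in GPU count at constant cost per GPU-hour and run time is what turns a $200M run into a $50M one.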

The Deployment Race: Who Gets Rubin First

NVIDIA confirmed production in January 2026, with commercial deployment beginning in H2 2026. The confirmed partner list reads like a roll call of global AI infrastructure:

Cloud Service Providers: AWS, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure (OCI) are all committed to deploying Rubin-based instances. Microsoft's deployment is particularly notable: Azure is integrating Vera Rubin NVL72 racks across its next-generation Fairwater AI superfactory sites in Wisconsin and Atlanta, with architectural planning already complete to scale to hundreds of thousands of Rubin GPUs.

Specialty AI Cloud: CoreWeave will be among the first to offer commercial Rubin instances, operated through its CoreWeave Mission Control platform. Lambda, Nebius, and Nscale are also confirmed early deployers.

AI Labs: Anthropic, OpenAI, Meta, Mistral AI, and Perplexity are all listed among the labs expected to run Rubin infrastructure for training and inference. This matters not just for raw capacity, but for the competitive implications — the lab that gets operational on Rubin first gains a structural advantage in both training larger models and serving them cheaply.

Enterprise Hardware: Dell Technologies, HPE, Lenovo, and Supermicro are building Rubin-based server systems for enterprise deployment, targeting organizations that want to run AI inference on-premises rather than in the cloud.

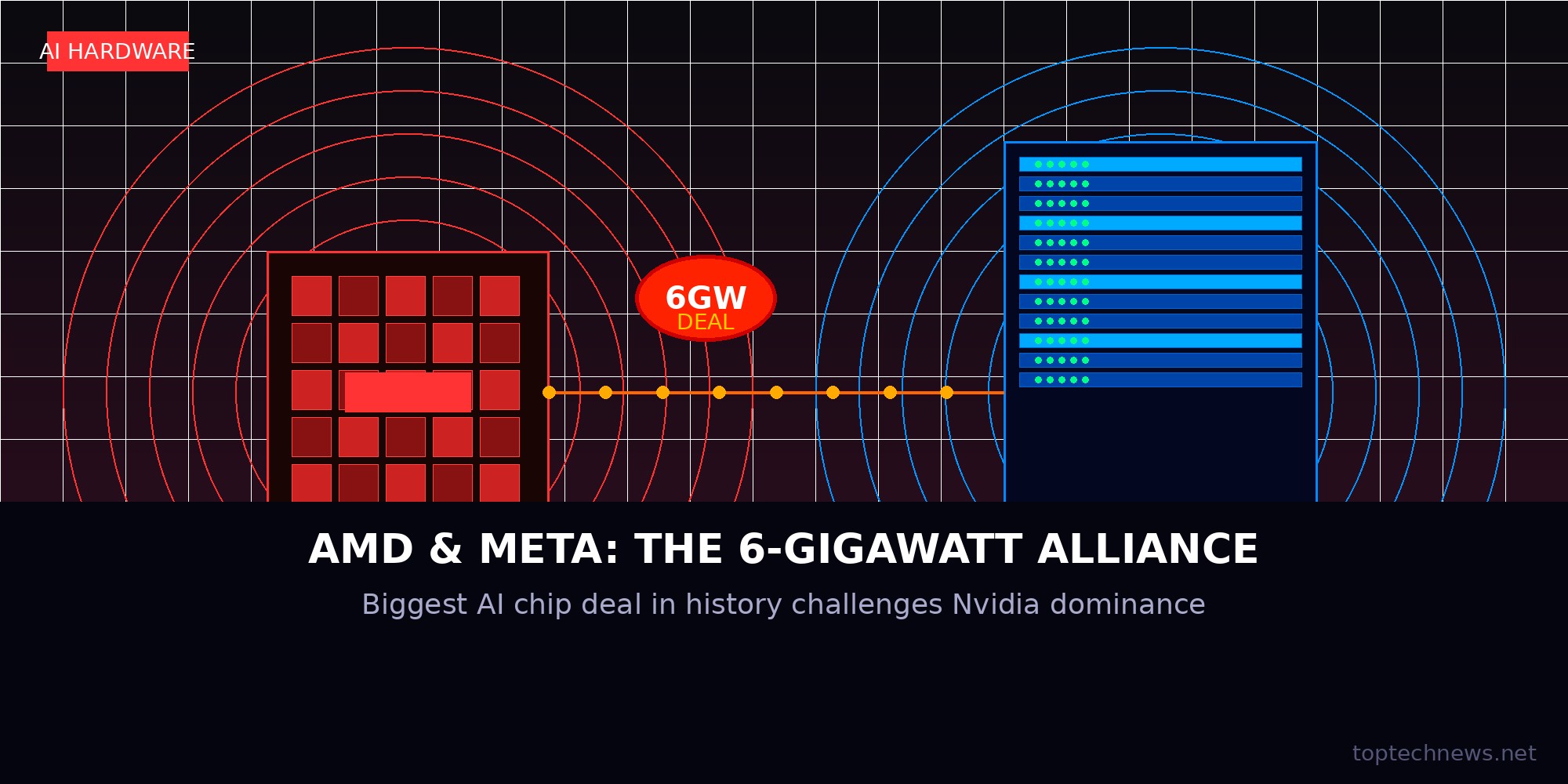

The Competitive Landscape: Rubin vs. the Alternative Universe

The Vera Rubin announcement lands at a moment of genuinely elevated competition for NVIDIA. AMD's MI450 (backed by custom silicon deals, most recently the reported 6GW AMD-Meta procurement agreement) and Google's TPU v7 are both viable alternatives for specific workloads. Intel's Gaudi line continues to find enterprise niches. And Groq's LPU inference architecture, reportedly being integrated into NVIDIA's own Feynman inference chip announced for GTC 2026, demonstrates that purpose-built inference silicon can outperform general-purpose GPUs on latency-sensitive tasks.

Yet none of these alternatives match Rubin's integrated platform story. AMD's GPUs remain dependent on third-party networking. Google's TPUs are captive to Google Cloud. Groq's LPUs excel at specific inference patterns but lack training capability. NVIDIA's deepest competitive moat isn't any individual chip — it's the CUDA software ecosystem, the full-stack co-design from GPU to switch, and the fact that every major cloud now has Rubin deployments committed, meaning developers can code to Rubin on day one of availability.

The more interesting competitive question is whether the Rubin platform's economic improvements accelerate or decelerate the custom silicon trend among hyperscalers. If AWS Trainium 3 and Google TPU v7 can match Rubin's 10x token cost improvement using chips that AWS and Google design themselves, without paying NVIDIA's hardware margins, they might choose not to deploy Rubin at scale despite their public commitments. The answer will play out over 2026 and 2027, and will likely determine whether NVIDIA's data center revenue holds at Blackwell-era levels or begins a gradual share erosion.

What Rubin Means for the Data Center Build-Out

Rubin's power envelope and infrastructure requirements are substantially more demanding than Blackwell's. Each NVL72 rack is expected to draw between 120 and 130kW of power, compared to roughly 100kW for Blackwell NVL72 configurations. At scale, this is not a modest increment. A 100,000-GPU Rubin deployment (~1,400 NVL72 racks) would require roughly 175MW of dedicated power delivery at the 125kW midpoint, before cooling overhead: the output of a mid-sized power plant, running continuously.
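The deployment arithmetic above is easy to reproduce. The sketch below uses the rack size and the 120 to 130kW per-rack range given in this section; the PUE value of 1.2 used for the facility-level figure is an assumption added for illustration and does not come from NVIDIA or any operator.

```python
import math


def racks_needed(gpus, gpus_per_rack=72):
    """Whole NVL72 racks required to host the given number of GPUs."""
    return math.ceil(gpus / gpus_per_rack)


def it_power_mw(racks, kw_per_rack):
    """IT load in megawatts, excluding cooling and distribution overhead."""
    return racks * kw_per_rack / 1000


racks = racks_needed(100_000)
low_mw = it_power_mw(racks, 120)
mid_mw = it_power_mw(racks, 125)
high_mw = it_power_mw(racks, 130)

ASSUMED_PUE = 1.2  # hypothetical facility overhead, not a quoted figure
facility_mw = mid_mw * ASSUMED_PUE

print(f"Racks for 100,000 GPUs: {racks}")
print(f"IT load: {low_mw:.1f} to {high_mw:.1f} MW (midpoint {mid_mw:.1f} MW)")
print(f"Facility draw at assumed PUE {ASSUMED_PUE}: {facility_mw:.1f} MW")
```

The exact rack count comes out slightly below the rounded 1,400 used in the text, and the midpoint IT load lands just under 175MW, so the article's round numbers hold up; facility-level draw is higher once overhead is included.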

This explains why Microsoft's Fairwater superfactories and the broader hyperscaler build-out are moving to gigawatt-scale campus planning. The AI hardware roadmap is now fundamentally constrained by power grid capacity, not chip supply. NVIDIA's Spectrum-X Photonics networking, with its 5x power efficiency advantage over conventional switches, provides meaningful relief — but at the scale of a 1GW AI superfactory, every watt counts in a planning exercise measured in hundreds of millions of dollars annually in power costs.

Liquid cooling, already mainstream for Blackwell, becomes essentially mandatory for Rubin deployments. Direct liquid cooling (DLC) at the rack level is the only thermal solution capable of handling 120kW+ heat densities with adequate reliability and power-usage effectiveness (PUE). This is driving a parallel infrastructure boom in liquid cooling technology, immersion cooling systems, and the specialized data center facility design required to support them.

The Software Question: Making Rubin Programmable

NVIDIA's hardware lead is meaningless without software that makes Rubin easy to deploy and program. The platform ships with full CUDA 14 support, updated CUDA-X libraries including cuDNN, cuBLAS, and NCCL, and framework integrations for PyTorch 3.0 and JAX. Red Hat has confirmed a partnership to deliver a complete AI stack optimized for Rubin, including Red Hat Enterprise Linux, OpenShift container orchestration, and Red Hat AI frameworks — targeting enterprise customers who need a supported, certified deployment path.

The new ICMS memory tier is exposed to developers through NVIDIA's updated memory management APIs, but NVIDIA has emphasized backward compatibility: existing Blackwell workloads are said to run on Rubin without modification, with ICMS providing transparent performance improvements for context-intensive inference applications. This is a deliberate strategy to avoid fragmenting the CUDA ecosystem: Rubin's performance improvements are meant to arrive as an automatic upgrade, not as a rewrite requirement.

The Strategic Verdict: More Than a GPU Generation

It's worth stepping back to appreciate the magnitude of what NVIDIA has built. The Vera Rubin NVL72 represents five years of concurrent silicon development across six separate chip programs, coordinated to ship as a single integrated platform. The NVLink 6 switch fabric alone required reengineering NVIDIA's entire scale-up networking architecture. The Vera CPU is NVIDIA's first processor built on custom-designed ARM cores, succeeding the Neoverse-based Grace, and it enters a data center CPU market dominated by AMD's EPYC and Intel's Xeon. The BlueField-4 DPU with ICMS is a novel memory architecture tier that didn't exist in any commercial product two years ago.

That breadth of simultaneous execution is itself a competitive moat. AMD, Intel, or any other hardware company challenging NVIDIA on a single dimension — GPU compute, networking, or CPUs separately — faces a platform that has already solved the integration problem. Rubin's 10x token cost improvement isn't achieved by any single chip being 10x faster; it emerges from eliminating the bottlenecks at every interface between compute, memory, networking, and storage simultaneously. That's an integration achievement, not just a process node jump.

As the H2 2026 deployment window approaches and the first Rubin racks go live at Fairwater, CoreWeave Mission Control, and AWS regions worldwide, the AI industry will begin to answer the questions that spec sheets can't: Does Rubin's real-world token cost hold up under production inference loads? Can the ICMS memory tier handle the context persistence demands of frontier agentic workflows? And — most importantly — does 10x cheaper inference unlock a new class of AI applications that current economics make prohibitively expensive?

The answers will define the competitive landscape of AI infrastructure through 2027 and beyond. The race to build out AI factories around the Vera Rubin NVL72 has already begun.