Meta has just done something the semiconductor industry rarely does: unveiled four chip generations in a single announcement and promised to deliver all of them within two years. The Meta Training and Inference Accelerator family (MTIA 300, 400, 450, and 500) represents the most aggressive custom silicon roadmap any social media company has ever attempted. And it arrives just weeks after Meta signed some of the largest GPU procurement deals in history with Nvidia and AMD. Far from being a contradiction, the dual strategy reveals a company methodically engineering its way out of total dependence on third-party silicon while hedging every bet in the meantime.

A Chip Family, Not a Chip

The MTIA program has been quietly evolving since 2023, when Meta first publicly disclosed its in-house chip efforts. A second-generation model followed in 2024 with a modest 90-watt thermal footprint, focused narrowly on ranking and recommendation workloads — the neural networks that decide what you see in your Facebook and Instagram feeds.

What Meta announced on Wednesday is of a different order of magnitude. According to a company blog post and the Meta AI technical blog, the four new chips represent a deliberate "portfolio approach" to silicon, with each chip purpose-built for a different point on the AI workload spectrum.

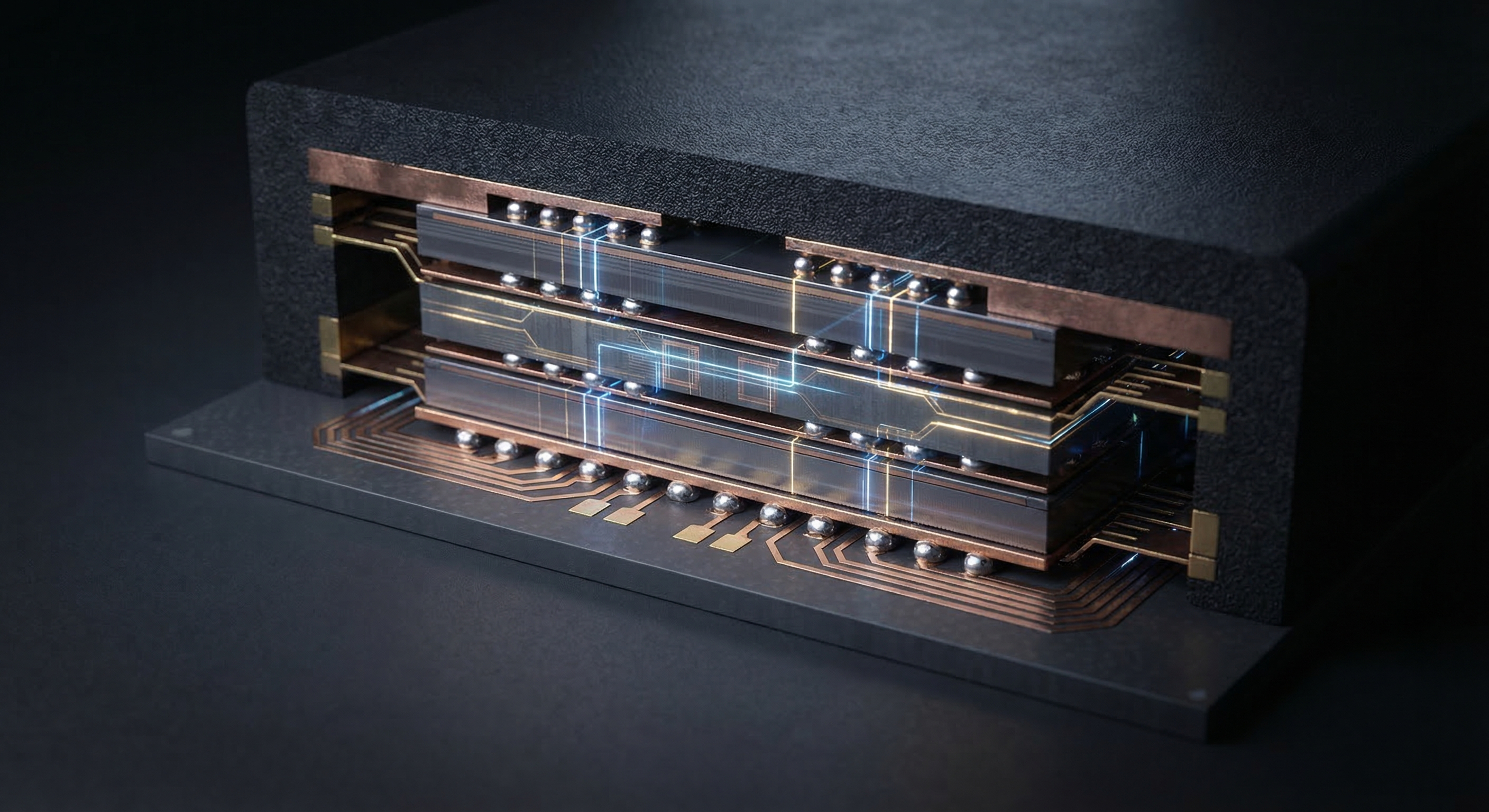

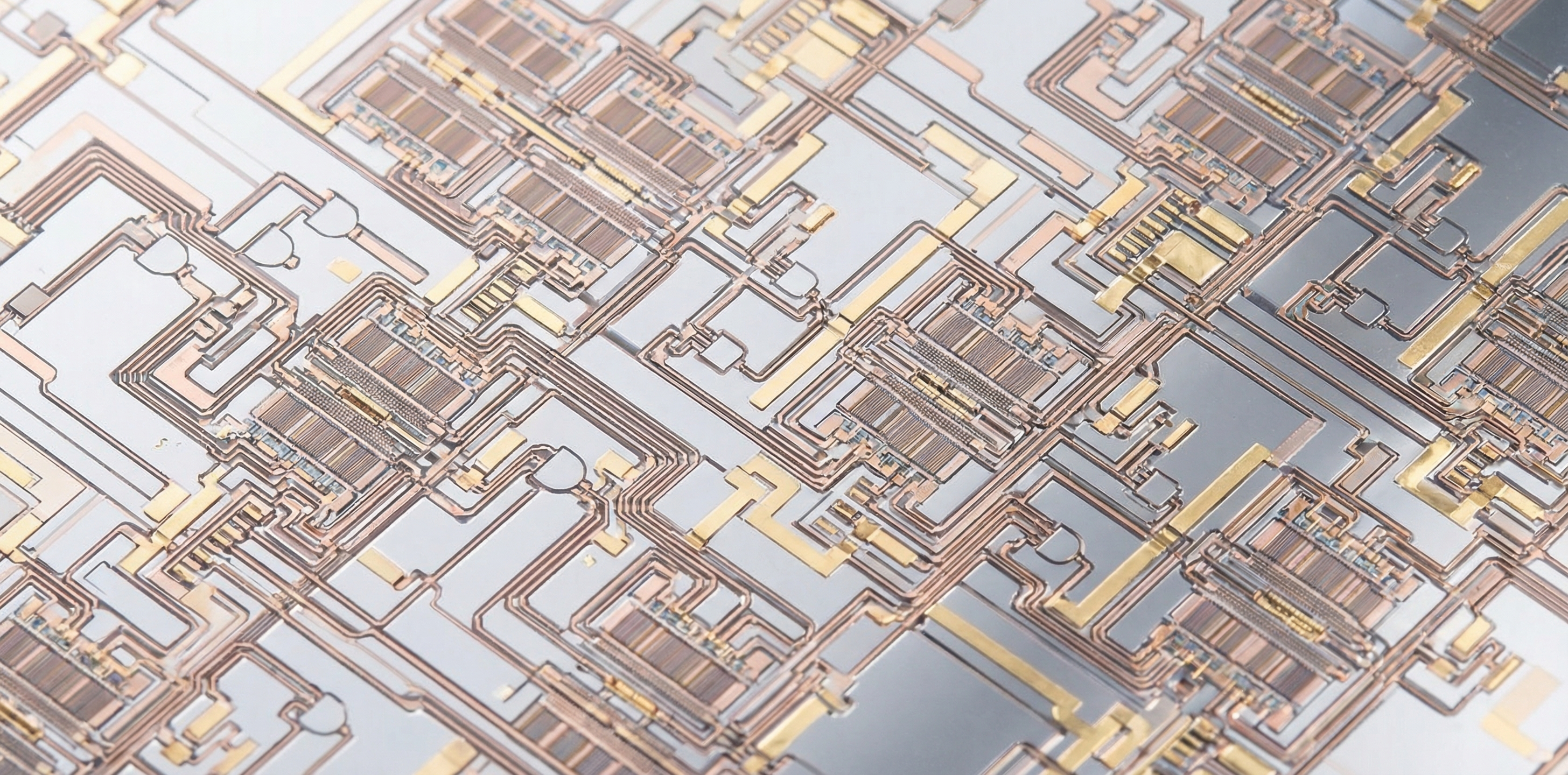

The MTIA 300, already in production, is the evolutionary successor to its 2024 predecessor. It handles the same ranking and recommendation training workloads but at dramatically greater scale: 1.2 petaflops of performance in the MX8 data format and 216 gigabytes of high-bandwidth memory (HBM). Its architecture combines one compute chiplet, two network chiplets, and multiple HBM stacks — a modular design philosophy that runs throughout the entire family.

The MTIA 400 marks the pivot toward generative AI. Having completed testing and now on the path to production deployment in Meta's data centers, the MTIA 400 is configured for GenAI inference tasks: the moment an AI model responds to a user request, whether that's generating an image, answering a question, or producing a video from a text prompt. A single Meta data center rack will house 72 MTIA 400 chips, all working in concert on inference jobs that currently rely heavily on Nvidia GPUs.

The MTIA 450 and MTIA 500 are slated for 2027 and sit at the top of the roadmap. Both are optimized specifically for generative AI inference, with architectural innovations that push their performance far beyond anything in the current MTIA lineup.

The MTIA 500: A 10-Petaflop Inference Engine

The MTIA 500's specifications put it in a different league from its predecessors. According to SiliconAngle's technical breakdown, it delivers 10 petaflops of performance in the MX8 format, more than eight times the MTIA 300's 1.2 petaflops. Its thermal design point reaches 1,700 watts, compared to just 90 watts for the 2024 MTIA 200. That nearly 19-fold increase in power budget in roughly three years is a direct measure of how much more computational work Meta expects each chip to perform.
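The generational ratios can be checked directly from the figures quoted above. The short sketch below uses only those numbers; the variable names are illustrative, and the throughput-per-watt figure is a peak, back-of-the-envelope value rather than a measured efficiency.

```python
# Figures as quoted in the article (peak MX8 throughput and thermal design point).
MTIA_300_PFLOPS_MX8 = 1.2
MTIA_500_PFLOPS_MX8 = 10.0
MTIA_2024_TDP_WATTS = 90
MTIA_500_TDP_WATTS = 1700

# Ratio of MTIA 500 to MTIA 300 peak compute.
compute_ratio = MTIA_500_PFLOPS_MX8 / MTIA_300_PFLOPS_MX8

# Ratio of MTIA 500 power budget to the 2024 chip's power budget.
power_ratio = MTIA_500_TDP_WATTS / MTIA_2024_TDP_WATTS

# Peak teraflops per watt for the MTIA 500 (1 petaflop = 1,000 teraflops).
mtia_500_tflops_per_watt = MTIA_500_PFLOPS_MX8 * 1000 / MTIA_500_TDP_WATTS

print(f"compute: {compute_ratio:.1f}x")                        # compute: 8.3x
print(f"power:   {power_ratio:.1f}x")                          # power:   18.9x
print(f"peak:    {mtia_500_tflops_per_watt:.2f} TFLOPS/W")     # peak:    5.88 TFLOPS/W
```

The article does not give a compute figure for the 2024 chip, so the sketch compares compute against the MTIA 300 and power against the 2024 part, exactly as the text does.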

Memory capacity expands equally dramatically. Where the MTIA 300 carries 216GB of HBM, the MTIA 500 packs 516 gigabytes — more than twice as much. This matters enormously for generative AI inference: large language models and image generators require holding vast parameter sets in fast-access memory to respond at usable speeds. Cramming 516GB of HBM onto a single accelerator means fewer chips needed per inference job, and lower latency for end users.
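To make the "fewer chips per inference job" point concrete, here is a minimal sketch of how HBM capacity bounds the number of accelerators needed just to hold a model's weights. The 400-billion-parameter model and the 8-bit weight width are hypothetical inputs chosen for illustration, not figures from Meta, and the calculation ignores the KV cache, activations, and any replication across chips.

```python
import math

def min_chips_for_weights(params_billion, bits_per_param, hbm_gb):
    """Lower bound on chips needed to hold the weights alone.

    Uses decimal gigabytes (1 GB = 1e9 bytes), so a billion parameters
    at 8 bits each occupy exactly 1 GB.
    """
    weight_gb = params_billion * bits_per_param / 8
    return math.ceil(weight_gb / hbm_gb)

# HBM capacities quoted in the article.
MTIA_300_HBM_GB = 216
MTIA_500_HBM_GB = 516

# Hypothetical model: 400 billion parameters stored at 8 bits each (400 GB).
chips_300 = min_chips_for_weights(400, 8, MTIA_300_HBM_GB)
chips_500 = min_chips_for_weights(400, 8, MTIA_500_HBM_GB)

print(chips_300, chips_500)  # 2 1
```

Under these assumptions the weights would span two MTIA 300s but fit on a single MTIA 500, which avoids chip-to-chip traffic for every generated token.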

The MTIA 500 also supports a more compact data format called MX4, which represents each value with fewer bits than MX8 and so reduces the data moved and processed per AI operation, effectively squeezing more inferences out of each watt. It runs four logic chiplets alongside a dedicated system-on-chip (SoC) module that manages data transfer between the accelerator and the host server. Hardware acceleration for FlashAttention, the memory-efficient implementation of the core mathematical operation in transformer AI models, is baked directly into both MTIA 450 and 500, reducing the compute overhead for one of the most resource-intensive elements of modern AI inference.
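A rough sense of what the narrower format buys can be had from storage arithmetic. The sketch below assumes that the article's "MX8" and "MX4" correspond to the 8-bit and 4-bit element formats of the Open Compute Project's Microscaling (MX) specification, in which each block of 32 values shares one 8-bit scale; Meta's exact encoding is not described in the source, so treat the block size and scale width as assumptions.

```python
BLOCK_SIZE = 32   # values sharing one scale factor (assumed, per the OCP MX spec)
SCALE_BITS = 8    # width of the shared per-block scale (assumed, per the OCP MX spec)

def effective_bits(element_bits, block_size=BLOCK_SIZE, scale_bits=SCALE_BITS):
    """Average storage per value once the shared scale is amortized over the block."""
    return element_bits + scale_bits / block_size

def values_that_fit(hbm_gb, element_bits):
    """How many values fit in hbm_gb decimal gigabytes, if memory held nothing else."""
    total_bits = hbm_gb * 1e9 * 8
    return total_bits / effective_bits(element_bits)

mx8_bits = effective_bits(8)   # 8.25 bits per value
mx4_bits = effective_bits(4)   # 4.25 bits per value

# Capacity gain from MX8 to MX4 on the MTIA 500's quoted 516 GB of HBM.
gain = values_that_fit(516, 4) / values_that_fit(516, 8)

print(mx8_bits, mx4_bits)      # 8.25 4.25
print(f"{gain:.2f}x")          # 1.94x
```

Because of the shared scale, the gain is slightly under 2x: the same memory holds about 1.94 times as many values, and each operation moves correspondingly less data.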

Inference-First: A Strategic Bet Against the GPU Orthodoxy

The most revealing aspect of Meta's MTIA strategy isn't the raw specs; it's the design philosophy. Most commercial AI accelerators, including Nvidia's Hopper and Blackwell architectures, are built primarily to handle the most demanding workload in AI: pre-training large language models from scratch. Inference performance is then derived from those same chips, even when it's not what they were optimized for.

Meta is deliberately inverting that hierarchy. As the company's official announcement states: "MTIA 450 and 500 are optimized first for GenAI inference, and they can then be used to support other workloads as needed." This is not a subtle difference. An inference-first design can achieve significantly better performance-per-watt and cost-per-query for the workloads that dominate production AI deployments — the billions of daily requests from users of Meta's apps.

Yee Jiun Song, Meta's VP of Engineering, told CNBC that the company's pace of chip development — one new generation every six months or less — is "unusual for any silicon company." The driver, he said, is the sheer velocity of Meta's current infrastructure buildout: "We find ourselves building out capacity so quickly at the moment, and spending so much on CapEx, that at any given time we want to have the state-of-the-art chip to deploy."

The modular architecture enables this pace. Because all MTIA 400, 450, and 500 chips share the same chassis, rack, and network infrastructure, a new chip generation can drop into the existing physical footprint without requiring facility redesigns — dramatically reducing the time from tape-out to production deployment.

The HBM Problem No One Can Ignore

There is, however, a significant constraint shadowing Meta's aggressive silicon roadmap: high-bandwidth memory supply. HBM chips — the specialized stacked memory that makes AI accelerators so fast — are produced by only three companies at scale: Samsung, SK Hynix, and Micron. The global AI hardware boom has made HBM one of the most contested resources in the entire technology supply chain.

Song was unusually candid about the risk. "We're absolutely worried about HBM supply," he told CNBC. He declined to specify whether Meta has signed long-term contracts with memory vendors, saying only that the company has "secured our supply for what we're planning to build out" and has taken a "diversified" approach.

The concern is well-founded. As TTN has previously reported, Nvidia has already locked Samsung and SK Hynix into preferred HBM4 supply agreements for its Vera Rubin platform, effectively squeezing Micron out of the leading edge. Every major hyperscaler running parallel custom silicon programs (Google, Amazon, and now Meta) is competing for the same finite output from those same three suppliers. As MTIA 450 and 500 require even more HBM per chip than MTIA 300, Meta's consumption of this constrained resource will rise sharply.

Running Both Plays Simultaneously

The timing of Meta's MTIA announcement is striking. It comes less than a month after the company inked two of the largest AI hardware procurement deals ever recorded: an agreement to purchase millions of Nvidia GPUs and a separate commitment to deploy up to 6 gigawatts of AMD GPUs over multiple years. Reports also suggest Meta is exploring Google TPU deployments for large language model workloads.

Song addressed the apparent paradox directly. "The workloads are changing so quickly that we want to make sure that we have options." Custom MTIA chips are optimized for ranking, recommendation, and GenAI inference, the workloads Meta runs at the greatest scale and for which cost-per-query matters most. Nvidia and AMD GPUs remain essential for LLM training and for frontier GenAI workloads where MTIA hasn't yet demonstrated parity.

The deeper strategic logic is one of leverage. By credibly deploying its own silicon at scale, Meta gains negotiating power with external chip vendors. It also insulates itself from the supply crunches and price spikes that have made Nvidia's GPU allocation a source of competitive anxiety across the entire AI industry. "This also provides us with more diversity in terms of silicon supply, and insulates us from price changes to some extent," Song said. "This is a little bit more leverage."

The ASIC Race Enters Its Mature Phase

Meta's accelerated MTIA roadmap is a significant data point in a broader industry shift. Google began building Tensor Processing Units in 2015. Amazon followed with its first custom chip announcement in 2018. Both companies have used their ASICs primarily as components of their cloud computing platforms — tools that customers can access, generating revenue while simultaneously reducing GPU purchases.

Meta's use case is different: its MTIA chips are entirely internal, purpose-built to lower the cost of running its own products. This makes the economics cleaner to evaluate. Every inference served by an MTIA chip instead of a leased GPU is a direct cost reduction. At the scale Meta operates — hundreds of billions of feed ranking events and billions of generative AI interactions daily — even a modest efficiency advantage per chip translates into hundreds of millions of dollars annually.
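The scale argument is simple multiplication, and a sketch shows how quickly it compounds. Every input below is a hypothetical placeholder: the article says only "billions" of generative AI interactions daily, and neither the per-query cost nor the savings fraction comes from Meta or any other source.

```python
def annual_savings_usd(queries_per_day, cost_per_query_usd, savings_fraction):
    """Yearly savings if a fraction of the per-query serving cost is eliminated."""
    return queries_per_day * 365 * cost_per_query_usd * savings_fraction

# Hypothetical inputs, for illustration only:
#   2 billion queries per day, $0.001 serving cost per query, 20% cheaper on custom silicon.
example = annual_savings_usd(2e9, 0.001, 0.20)

print(f"${example / 1e6:.0f} million per year")  # $146 million per year
```

With these placeholder values, a 20% efficiency edge is worth roughly $146 million a year on generative AI queries alone, before counting the far larger volume of feed ranking events.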

Broadcom's recent $100 billion AI chip roadmap speaks to the same trend from the supply side: custom ASIC design revenue is accelerating as hyperscalers invest in purpose-built silicon. The custom chip ecosystem — from TSMC's advanced packaging capabilities to the Cadence and Synopsys EDA tools that make chiplet architectures possible — has matured enough that a company like Meta can now maintain a six-month chip release cadence. That would have been nearly impossible five years ago.

What This Means for Nvidia's Largest Customers

The strategic trajectory is clear, even if the short-term picture remains one of massive Nvidia dependency. Meta is among Nvidia's most important customers by revenue. The billions it is simultaneously committing to Nvidia GPUs and to its own silicon development are not in tension — they are the two halves of a long-term transition plan.

As inference workloads grow to dominate AI infrastructure spending — driven by consumer-facing AI products that require always-on, low-latency responses — the economics favor inference-optimized ASICs over general-purpose training GPUs. Meta's MTIA 450 and 500, arriving in 2027, are designed to capture an increasing share of that inference workload. The Nvidia and AMD contracts ensure the lights stay on while the transition unfolds.

The chip industry's broader concern is the pace of hyperscaler silicon programs. Nvidia's Vera Rubin roadmap, recently locked in via a gigawatt-scale deal with Thinking Machines Lab, offers five times the inference performance of Blackwell — a moving target that custom silicon programs must chase. Meta's six-month release cadence is its answer to that challenge: iterate fast enough that custom chips stay competitive with the best merchant silicon available, at a fraction of the cost per inference for Meta's specific workloads.

Whether Meta achieves that target on time — given the HBM supply constraints, the complexity of 2027 chiplet packaging, and the rapidly shifting AI workload landscape — is the central question hanging over an announcement that was, by every other measure, the most ambitious custom silicon roadmap a social media company has ever put on paper.