In a move that sent shockwaves through the semiconductor market, Nvidia has finalized its HBM4 memory supplier roster for the Vera Rubin AI accelerator platform — and Micron Technology didn't make the cut. Samsung and SK Hynix have been confirmed as the exclusive suppliers of sixth-generation high-bandwidth memory for Nvidia's flagship next-gen chips, according to a report citing the Korea Economic Daily published March 8. The selection crystallizes a hierarchy in the AI memory market that will define competitive positioning across the entire semiconductor industry for the next two to three years — arriving exactly as global tech spending on AI infrastructure approaches an unprecedented $650 billion in 2026.

What the Vera Rubin Vendor Confirmation Actually Means

The Korea Economic Daily's reporting, citing industry sources, confirmed that both Samsung Electronics and SK Hynix have been placed on Nvidia's approved vendor list for HBM4 memory destined for Vera Rubin — the successor to the Blackwell architecture. Micron, which has supplied HBM3E for Nvidia's existing platforms, does not appear on the Vera Rubin vendor list for the flagship tier.

Markets reacted sharply. Micron stock fell 6.74% on the news. Nvidia itself slid 3.01%. In a further signal of how tightly the HBM supply chain is scrutinized, Samsung's Korean-listed shares fell 7.81% and SK Hynix dropped 9.52% — a counterintuitive reaction reflecting investor concern that winning the contract comes with execution risk and margin pressure at extreme volume.

The supplier split is expected to heavily favor SK Hynix, which is projected to capture roughly 70% of Nvidia's HBM4 allocation for Vera Rubin, with Samsung taking the remaining 30%. That breakdown reflects both manufacturing capacity and qualification status: Samsung passed Nvidia's internal quality benchmarks for HBM4 at both 10 Gbps and 11 Gbps operating speeds. SK Hynix, currently optimizing its 11 Gbps variant, is targeting full-scale production ramp this month, per TechBriefly's analysis of the Korea Economic Daily report.

The Technical Bar Micron Couldn't Clear

Nvidia's HBM4 specifications for Vera Rubin push well beyond the JEDEC industry standard of 8 Gbps per pin. The company required suppliers to qualify at 10 Gbps, the baseline for acceptance, and prioritized vendors capable of hitting 11 Gbps, a threshold that offers meaningful gains in memory bandwidth per stack. For a platform like Vera Rubin, where each GPU ships with 8 HBM4 stacks totaling 288 GB of memory capacity, that 11 Gbps target translates directly to competitive differentiation in training and inference throughput.
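To put those speed grades in bandwidth terms, here is a rough back-of-the-envelope sketch. It assumes a 2048-bit interface per HBM4 stack, which is the width in the JEDEC HBM4 standard but is not stated in the reporting above, and it computes theoretical peak figures only. The function names are illustrative.

```python
# Rough peak-bandwidth arithmetic for the HBM4 speed grades named in the article.
# ASSUMPTION: 2048 data pins per stack (JEDEC HBM4 interface width); the article
# itself gives only the per-pin speeds and the 8-stacks-per-GPU figure.
INTERFACE_WIDTH_BITS = 2048
STACKS_PER_GPU = 8  # from the article


def stack_bandwidth_gb_s(pin_speed_gbps: float,
                         width_bits: int = INTERFACE_WIDTH_BITS) -> float:
    """Peak bandwidth of one stack in GB/s: pins x Gb/s per pin / 8 bits per byte."""
    return pin_speed_gbps * width_bits / 8


def gpu_bandwidth_tb_s(pin_speed_gbps: float,
                       stacks: int = STACKS_PER_GPU) -> float:
    """Peak aggregate bandwidth across all stacks on one GPU, in TB/s (1 TB = 1000 GB)."""
    return stack_bandwidth_gb_s(pin_speed_gbps) * stacks / 1000


for speed in (8, 10, 11):
    print(f"{speed} Gbps: {stack_bandwidth_gb_s(speed):.0f} GB/s per stack, "
          f"{gpu_bandwidth_tb_s(speed):.1f} TB/s per GPU")
```

Under that assumption, the 11 Gbps grade works out to 10% more peak bandwidth than the 10 Gbps baseline and 37.5% more than the 8 Gbps standard rate. Sustained bandwidth in real workloads would be lower.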

Industry sources quoted in the Korea Economic Daily were blunt about Micron's situation: "Micron isn't even being discussed as a Vera Rubin HBM4 supplier." Analysts attribute the exclusion primarily to difficulties meeting Nvidia's speed and thermal specifications, particularly around the base die design — the logic layer at the bottom of an HBM stack that handles memory controller functions. Getting this component right at extreme operating speeds has proven to be the crux of the qualification challenge.

It is not an outright defeat for Micron. The company is expected to supply HBM4 for Rubin CPX, Nvidia's mid-tier inference-focused accelerator in the broader Rubin lineup. Research firm TrendForce noted in February that Nvidia may eventually bring all three suppliers into its HBM4 ecosystem as aggregate supply tightens — but the Vera Rubin flagship cycle belongs to the Korean duo.

Vera Rubin's Architecture and the Memory Demands Behind It

Why this memory supplier decision matters becomes clear from the sheer scale of what Vera Rubin demands. Each Vera Rubin GPU incorporates 8 HBM4 stacks delivering 288 GB of on-package memory. The full Vera Rubin Superchip combines two GPUs into a single unit, yielding 576 GB of memory per Superchip. The enterprise rack configuration, the Vera Rubin NVL72, packs 72 Rubin GPUs alongside 36 Vera CPUs and delivers roughly 10x the performance per watt versus Blackwell, according to Nvidia's product disclosures.

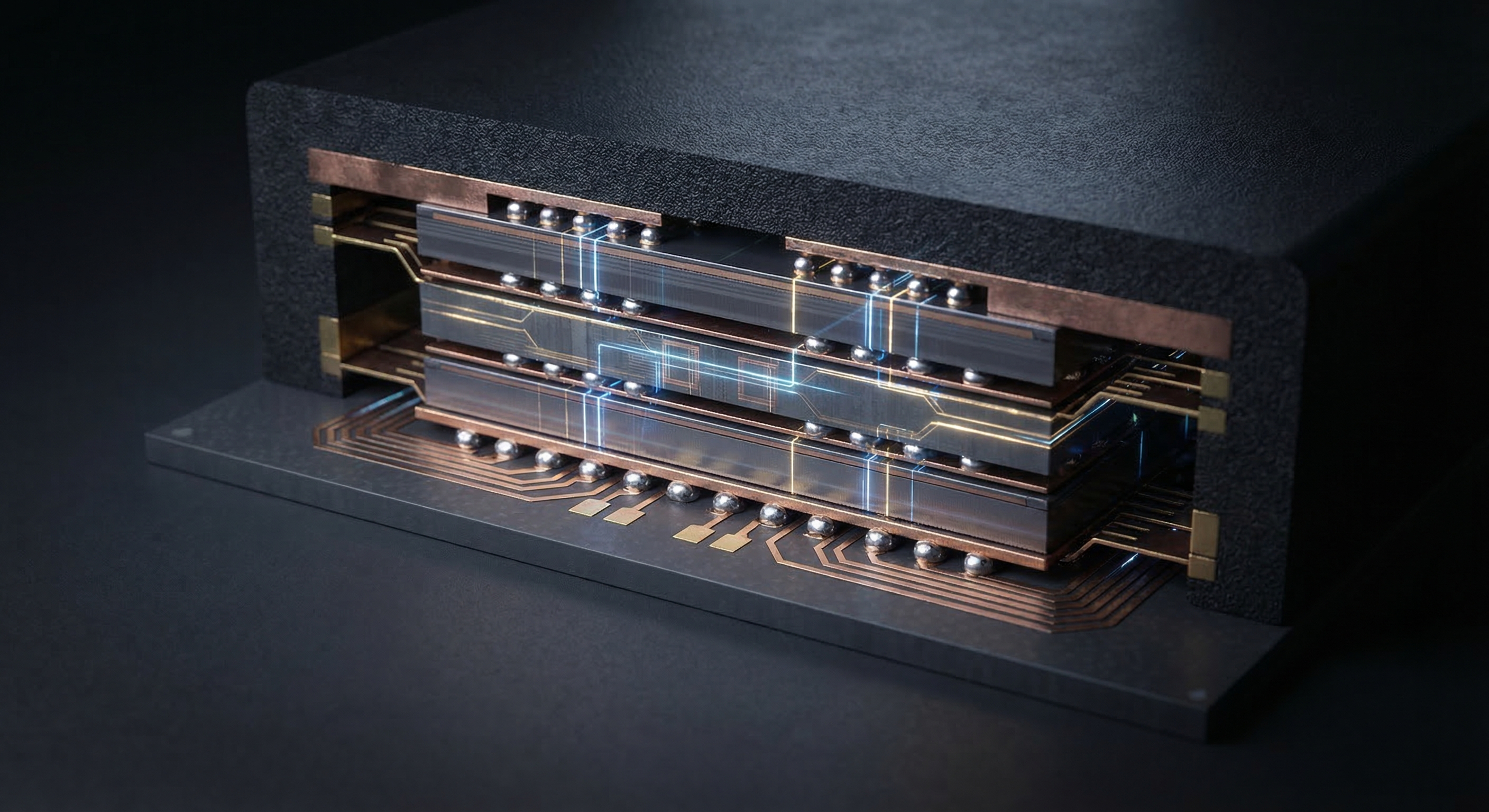

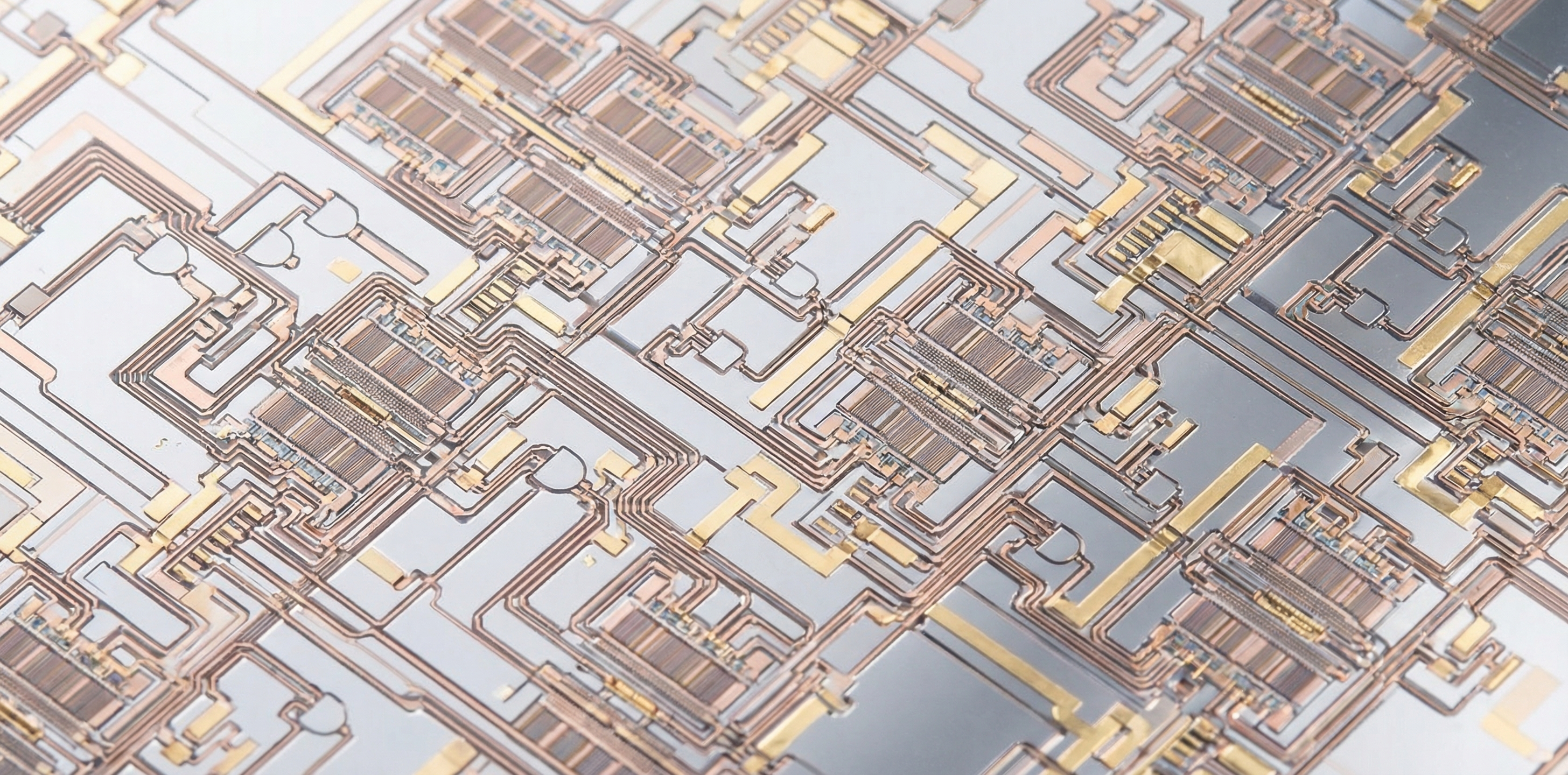

Hardware at this scale consumes HBM in staggering quantities. Each NVL72 rack requires 72 GPUs' worth of HBM4 stacks: 576 individual memory stacks per rack, each one a precision-engineered assembly of DRAM dies bonded through thousands of microscopic through-silicon vias (TSVs). Producing these components at yield rates that satisfy Nvidia's quality threshold at the 11 Gbps speed grade is one of the most demanding semiconductor manufacturing challenges in production today.
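The per-rack figures follow directly from the per-GPU numbers quoted above. A minimal sketch of that arithmetic, using only values from this article (the function and key names are illustrative):

```python
# Per-rack HBM4 totals derived from the per-GPU figures quoted in the article.
GPUS_PER_RACK = 72   # Vera Rubin NVL72
STACKS_PER_GPU = 8   # HBM4 stacks per GPU
GB_PER_GPU = 288     # HBM4 capacity per GPU, in GB


def rack_hbm_totals(gpus: int = GPUS_PER_RACK,
                    stacks_per_gpu: int = STACKS_PER_GPU,
                    gb_per_gpu: int = GB_PER_GPU) -> dict:
    """Return stack count, per-stack capacity, and total HBM capacity for one rack."""
    return {
        "stacks_per_rack": gpus * stacks_per_gpu,
        "gb_per_stack": gb_per_gpu / stacks_per_gpu,
        "hbm_gb_per_rack": gpus * gb_per_gpu,
    }


totals = rack_hbm_totals()
print(totals)
# {'stacks_per_rack': 576, 'gb_per_stack': 36.0, 'hbm_gb_per_rack': 20736}
```

That is 36 GB per stack and roughly 20.7 TB of HBM4 per rack, all of which has to come from stacks that pass qualification.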

Vera Rubin is scheduled for release in the second half of 2026 and will be formally unveiled at Nvidia's GTC developer conference on March 16 — less than a week away. The vendor list confirmation, timed just eight days before GTC, is effectively a strategic disclosure: Samsung and SK Hynix have won the initial ramp, and the market should price that accordingly.

Samsung's Redemption Cycle — and What It Cost

For Samsung, the Vera Rubin selection represents a meaningful rehabilitation. The company was largely shut out of the Blackwell supply chain after persistent HBM3E yield problems, a painful exclusion from what became one of the most lucrative hardware cycles in semiconductor history. Nvidia's earlier Hopper-generation H100 and H200 platforms were also dominated by SK Hynix-supplied memory, and Samsung bore the financial and reputational consequences.

The turnaround required significant investment. Samsung reportedly expanded its 1c DRAM capacity to target 60,000 wafers per month specifically for HBM4 production — a commitment that diverts resources from consumer memory lines. Passing Nvidia's dual qualification at both 10 Gbps and 11 Gbps in early 2026 was the technical proof point that converted capacity investment into a supply contract.

Even so, Samsung's 30% share of Vera Rubin HBM4 reflects that it's a junior partner in this cycle. The company faces ongoing pressure to achieve yield parity with SK Hynix at the 11 Gbps tier before it can meaningfully close the gap. That technical deficit — not manufacturing capacity — is the binding constraint on Samsung's share of Nvidia's most premium memory spend.

SK Hynix's Grip on the AI Memory Market

SK Hynix enters the Vera Rubin cycle from a position of considerable strength. The company is expected to supply more than half of Nvidia's total HBM procurement in 2026 across both HBM3E and HBM4 products, according to analysts at Citi quoted in recent research notes. That position was built through early, deep investment in HBM3E: Samsung's stumbles gave SK Hynix the runway to establish the manufacturing discipline and customer trust that are now compounding into HBM4 dominance.

The 70% Vera Rubin allocation, if it holds, positions SK Hynix as the single largest beneficiary of Nvidia's AI hardware ramp — a ramp that, given the $650 billion infrastructure spending cycle underway, represents the largest sustained semiconductor procurement event in history. Citi analysts, including five-star analyst Atif Malik, have projected that DRAM average selling prices will rise 171% year-over-year in 2026, with NAND prices climbing 127% as enterprise SSD demand accelerates alongside compute.

Jensen Huang's Message to the Memory Industry

The backdrop to this supply chain realignment is a strikingly public lobbying campaign from Nvidia CEO Jensen Huang directed at the DRAM manufacturing base. Huang has made a series of statements in recent months urging memory fabricators to expand capacity aggressively, offering an unusually direct buyer's guarantee: whatever DRAM fabs can produce, Nvidia will purchase.

The implicit message is that current HBM supply is not just tight — it is the primary factor limiting how fast the AI hardware ecosystem can scale. Compute is not the bottleneck. Power is not the bottleneck. Memory bandwidth and memory capacity, bound by how fast Samsung, SK Hynix, and Micron can manufacture and qualify HBM stacks, are the actual constraint on AI infrastructure buildout pace.

This dynamic explains why even Micron's exclusion from the Vera Rubin flagship tier is unlikely to be permanent. TrendForce's February analysis flagged the possibility of Nvidia eventually diversifying its HBM4 sourcing to all three suppliers as aggregate demand grows. Huang's public overtures to DRAM fabs function as an open invitation: qualify at scale, and Nvidia's supply chain has room for everyone.

A $650 Billion Year — and Why Memory Is the Chokepoint

Market research firm IDC has described the current memory shortage environment as a crisis like no other. Technology companies are preparing to spend approximately $650 billion on computing infrastructure in 2026 — roughly 80% higher than the previous year's record. Within that number, HBM procurement represents a disproportionate share of value: each HBM4 stack commands premium pricing, and every major AI accelerator platform ships with multiple stacks per unit.
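The percentage figures in this article are easier to compare as multipliers and implied baselines. This is a small illustrative calculation with hypothetical helper names. Because the inputs are rounded ("approximately $650 billion", "roughly 80%"), the outputs are approximations too.

```python
# Convert the article's percentage-growth figures into multipliers and baselines.
# All inputs are the rounded figures quoted in the article; results are approximate.

def growth_multiplier(pct_increase: float) -> float:
    """A rise of X% means the new value is (1 + X/100) times the old one."""
    return 1 + pct_increase / 100


def implied_prior_value(current: float, pct_increase: float) -> float:
    """Back out the prior-period value from the current value and its growth rate."""
    return current / growth_multiplier(pct_increase)


prior_spend_bn = implied_prior_value(650, 80)  # 2026 spend, roughly 80% above prior year
dram_multiplier = growth_multiplier(171)       # Citi's projected DRAM ASP rise
nand_multiplier = growth_multiplier(127)       # Citi's projected NAND price rise

print(f"Implied prior-year infrastructure spend: ~${prior_spend_bn:.0f}B")
print(f"DRAM ASP multiplier: {dram_multiplier:.2f}x, NAND: {nand_multiplier:.2f}x")
```

Read this way, the spending figure implies a prior-year base of roughly $361 billion, and a 171% rise in DRAM prices means average selling prices nearly triple.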

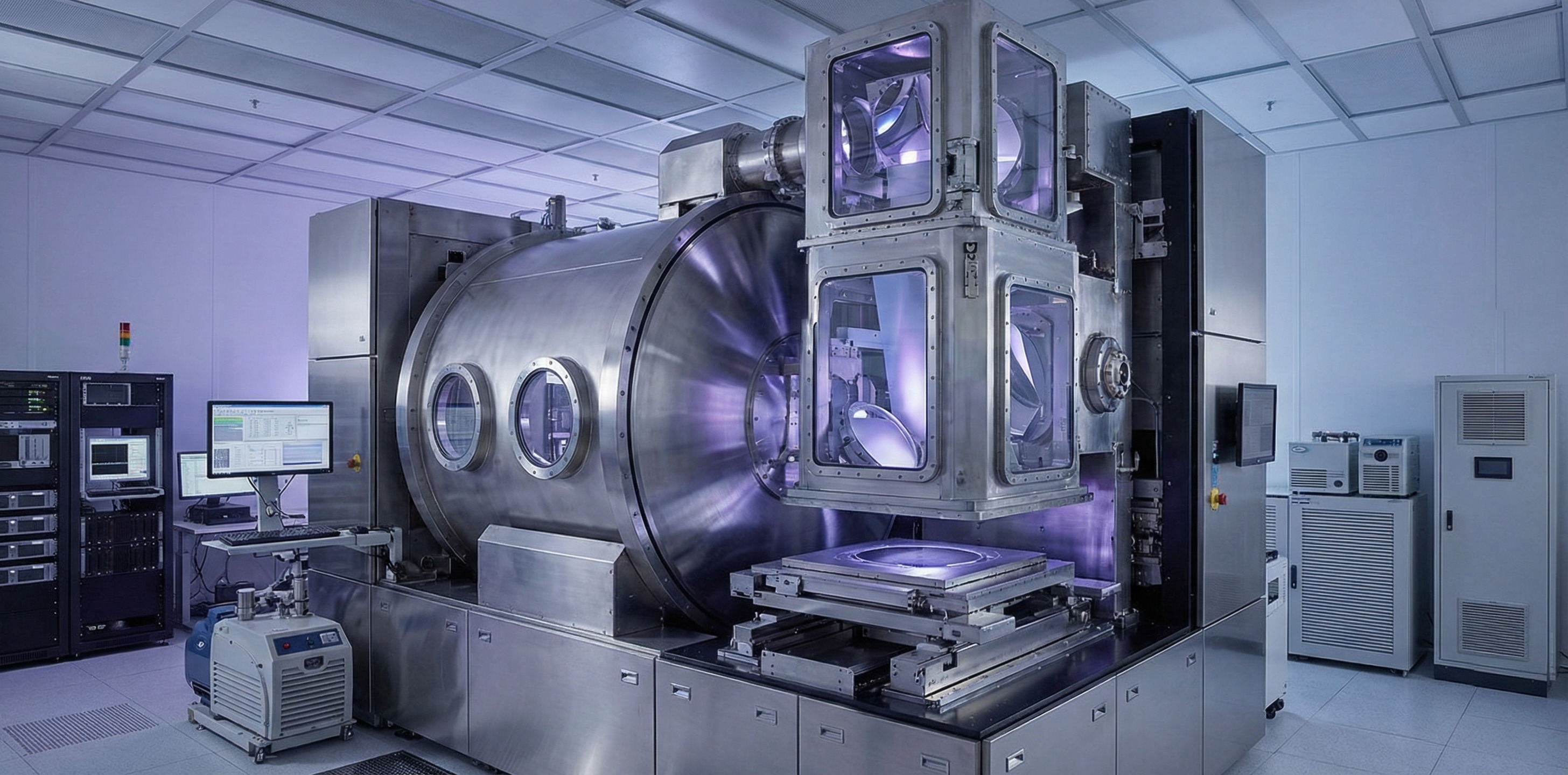

Compounding the demand signal is the packaging complexity involved in assembling modern AI chips. A separate but related supply crunch has emerged around T-glass — a specialized substrate material used in chip packaging interposers. Tom's Hardware reported this week that the primary producer, Japan's Nittobo, is tripling capacity at its Fukushima plant — but new output won't reach the market for several years. Each successive generation of AI accelerator requires larger interposers and more complex substrates, which means greater T-glass consumption per unit even before accounting for volume growth.

The result is a cascading set of supply constraints that no single action can resolve. More DRAM wafer capacity helps. Better T-glass availability helps. Qualifying additional HBM suppliers helps. But the pace of AI infrastructure investment has outrun the semiconductor supply chain's ability to respond in real time — and the Vera Rubin supplier confirmation is a direct expression of that pressure.

What to Watch at GTC on March 16

Nvidia's GTC developer conference, opening March 16, will be the formal stage for Vera Rubin's debut. The vendor confirmation published this week is the supply chain equivalent of a pre-briefing: it tells the market who has qualified, at what speed, and in what proportion — before the product itself has been officially announced to customers.

Key details to watch at GTC include the precise HBM4 configuration per Rubin SKU, production timeline commitments, and any signal about Micron's potential path back into the premium tier for later Vera Rubin revisions. Also significant will be any discussion of Rubin Ultra — reportedly slated for 2027 — which will push HBM specifications further and trigger another qualification cycle with potentially even higher performance requirements.

For the memory market, the more immediate question is whether SK Hynix's 11 Gbps product achieves its targeted full-scale production ramp this month, on schedule. Volume delivery of qualified HBM4 at 11 Gbps is the critical path item for Vera Rubin's H2 2026 commercial launch. Any slip in that timeline has direct consequences for when the first NVL72 racks begin shipping to hyperscaler customers — customers who, at $650 billion in aggregate 2026 infrastructure spend, cannot afford to wait.