Mira Murati's Thinking Machines Lab has struck a multiyear strategic partnership with NVIDIA that commits the startup to deploying at least one gigawatt of the chipmaker's forthcoming Vera Rubin systems, a scale of compute that industry executives peg at roughly $50 billion. The announcement, made Tuesday morning, positions one of Silicon Valley's most closely watched AI startups as a major anchor customer for NVIDIA's next-generation architecture while deepening NVIDIA's now-familiar pattern of investing in the very companies that spend billions on its hardware.

What One Gigawatt of Vera Rubin Actually Means

A gigawatt is a measure of electrical power, not a chip count, and translating it into dollar figures requires context. According to senior industry executives who spoke with Reuters, one gigawatt is roughly enough electricity to power 750,000 American homes, and at current AI hardware pricing, a gigawatt of computing capacity carries a price tag in the vicinity of $50 billion. That puts Thinking Machines Lab's commitment in the same stratospheric range as the largest infrastructure deals in the industry's history.
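The two figures quoted above can be turned into per-unit ratios with simple division. The sketch below is a back-of-envelope illustration only: the constants come from the executives' estimates, while the function names and the derived per-home and per-watt numbers are assumptions introduced here, not figures from the announcement.

```python
# Back-of-envelope ratios derived from the estimates quoted in the article.
# The function names are hypothetical; the derived values are illustrative.
GIGAWATT_W = 1_000_000_000          # one gigawatt, expressed in watts
HOMES_PER_GW = 750_000              # homes powered per gigawatt (executives' estimate)
COST_PER_GW_USD = 50_000_000_000    # rough price tag per gigawatt (executives' estimate)


def implied_watts_per_home(homes: int = HOMES_PER_GW) -> float:
    """Average continuous power per home implied by the homes-per-gigawatt figure."""
    return GIGAWATT_W / homes


def implied_usd_per_watt(cost_usd: int = COST_PER_GW_USD) -> float:
    """Cost per watt of capacity implied by the dollars-per-gigawatt figure."""
    return cost_usd / GIGAWATT_W


if __name__ == "__main__":
    print(f"{implied_watts_per_home():,.0f} W per home")   # 1,333 W per home
    print(f"${implied_usd_per_watt():,.0f} per watt")      # $50 per watt
```

In other words, the estimates imply an average draw of about 1.3 kilowatts per home and roughly $50 of hardware for every watt of capacity.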

For comparison, OpenAI reportedly signed a $300 billion compute deal with Oracle in 2025, a deal so large it was initially met with skepticism. The AMD-Meta partnership announced earlier this year committed to six gigawatts of custom Instinct MI450 GPUs. Against that backdrop, a single gigawatt from a startup that is still in its early phases of model development is a staggering bet.

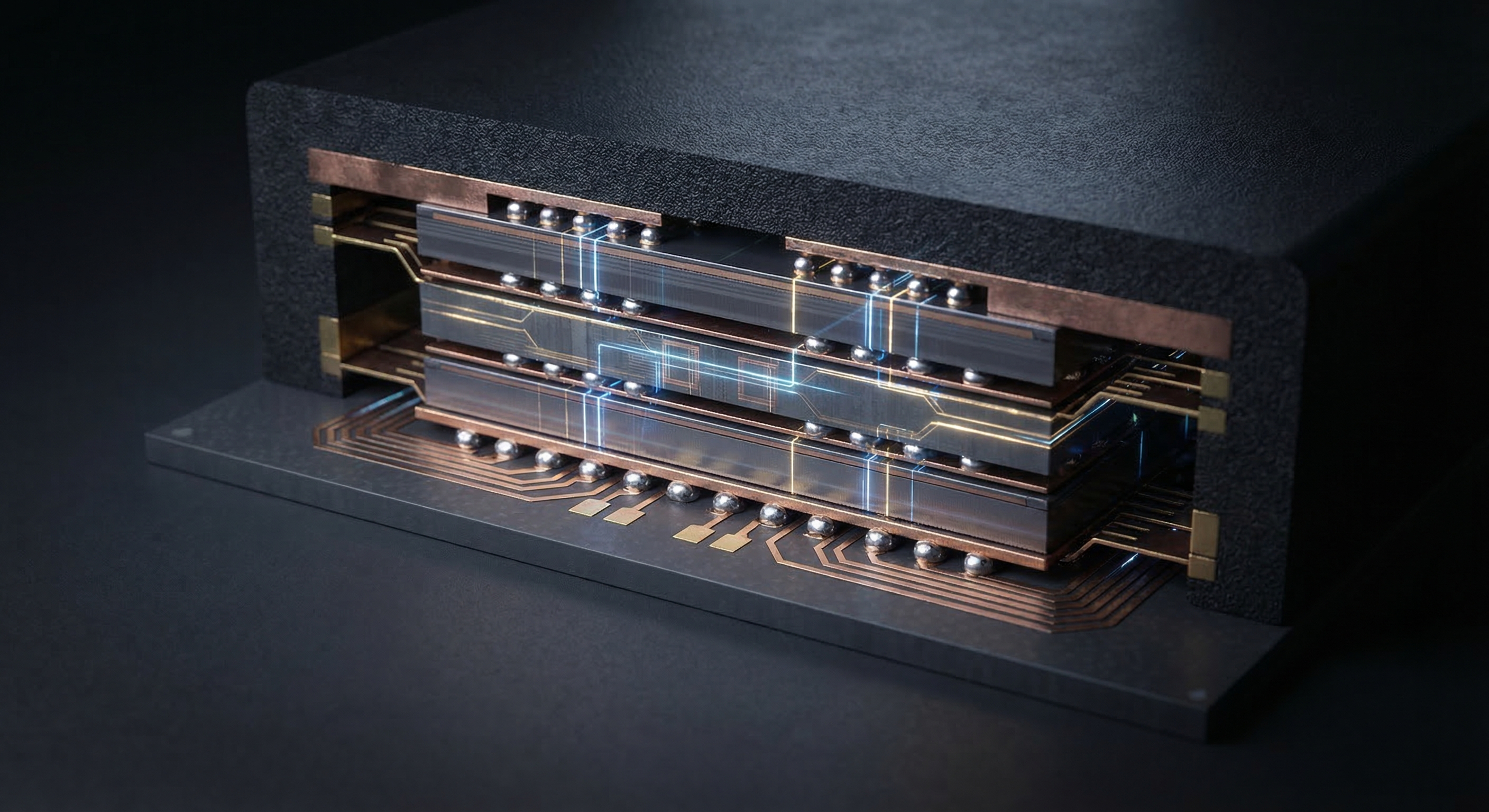

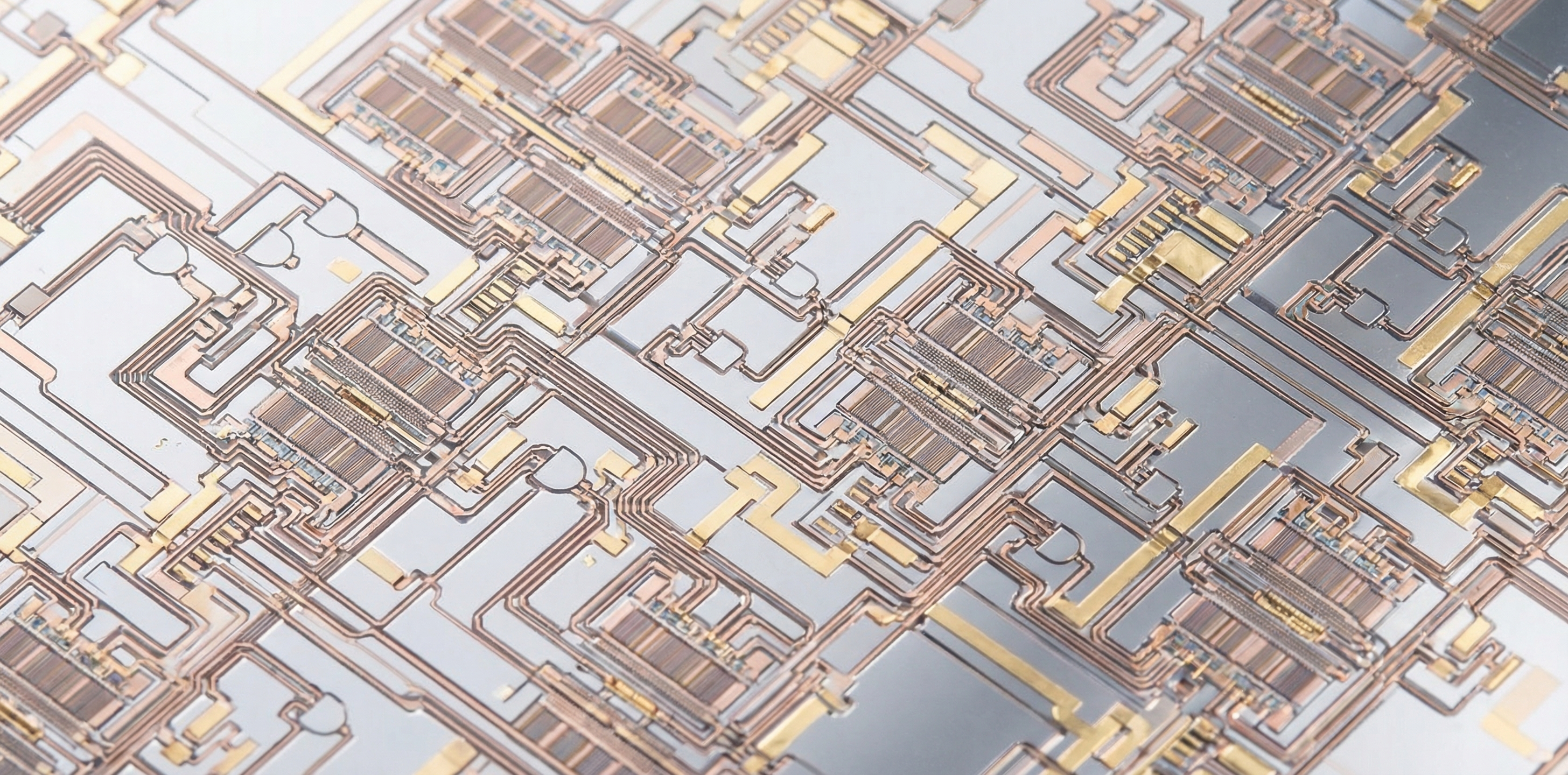

The specific hardware in question is NVIDIA's Vera Rubin platform — the chipmaker's most advanced AI system architecture, which debuted at CES in January and is expected to begin shipping to customers in the second half of 2026. Deployment for Thinking Machines Lab is targeted for early 2027 under the terms of the agreement. The partnership also includes a commitment to develop training and serving systems optimized for NVIDIA architectures — a technical collaboration element that goes beyond a standard procurement agreement.

Why such an enormous compute commitment from a relatively young startup? Frontier AI model training is an arms race measured in raw compute. Training runs for the most capable models now consume tens of thousands of GPUs running continuously for months. A gigawatt of Vera Rubin capacity would give Thinking Machines Lab the computational firepower to train models at a scale that rivals OpenAI and Anthropic, two companies that collectively received more than $40 billion in investment capital from NVIDIA over the past year.

NVIDIA's Circular Investment Engine

This deal adds another loop to what some industry analysts are calling NVIDIA's circular capital strategy. The pattern is becoming well established: NVIDIA invests in a frontier AI startup, the startup uses that capital to buy NVIDIA chips, NVIDIA books the revenue, and the startup's rising valuation makes the investment look brilliant.

NVIDIA made a $30 billion investment in OpenAI in February as part of a massive funding round. It committed $10 billion to Anthropic in 2025. Now it has made a "significant investment" — exact amount undisclosed — in Thinking Machines Lab. In each case, NVIDIA is not just a passive financial backer; it is the company supplying the hardware those startups will spend their funding on.

The circularity has generated genuine concern in corners of the analyst community. Some observers have drawn comparisons to the late 1990s tech bubble, when vendor financing arrangements obscured true demand signals and allowed companies to report revenue from deals they themselves were subsidizing. NVIDIA's defenders argue the comparison breaks down because the underlying demand for AI compute is real and growing — not speculative — and that Jensen Huang's prediction of $3 trillion to $4 trillion in AI infrastructure spending by decade's end appears credible given current trajectories.

What is undeniable is that NVIDIA has developed a potent model: supply the picks and shovels, invest in the miners, and collect on both ends. Every gigawatt committed by a startup like Thinking Machines Lab is a gigawatt that NVIDIA will manufacture, sell, and integrate — and then indirectly finance through the equity stake it holds in the buyer.

Who Is Thinking Machines Lab, and What Is It Building?

Thinking Machines Lab was founded by Mira Murati in early 2025, a few months after she departed OpenAI, where she served as Chief Technology Officer and briefly as interim CEO during the notorious four-day board crisis surrounding Sam Altman in November 2023. After leaving OpenAI in September 2024, Murati maintained a low public profile before assembling a team of prominent AI researchers and launching the startup.

The company's stated mission is to build AI systems that are "more widely understood, customizable and generally capable" — a differentiated positioning from the raw capability race dominating most frontier AI labs. Its first public product, Tinker, launched in October 2025 as an API allowing researchers and developers to fine-tune AI models. The product points toward an enterprise and research-institution market rather than direct consumer AI — a segment Murati has signaled she believes is underserved by current offerings.

In July 2025, Thinking Machines Lab closed a $2 billion seed round led by Andreessen Horowitz, with Accel, NVIDIA, and even AMD's venture arm among the investors, an unusual arrangement given that AMD and NVIDIA compete directly in chips. That round valued the company at $12 billion, an extraordinary number for a company with a single API product and no publicly disclosed model benchmarks. A new funding round seeking a valuation in the "tens of billions" was reportedly in progress before this NVIDIA deal was announced.

The Co-Founder Exodus and What It Means

Despite its high-profile backing and mission, Thinking Machines Lab has seen significant attrition among its founding team — a pattern that deserves scrutiny when evaluating the company's long-term trajectory.

Co-founder Andrew Tulloch departed for Meta in October 2025. Early in 2026, three additional co-founders, Barret Zoph, Luke Metz, and Sam Schoenholz, returned to OpenAI. Zoph, who had served as CTO, and Metz had both been core technical architects of the lab's research direction. The departures underscore the relentless war for AI talent, which makes it genuinely difficult to retain a team long enough to execute on a multiyear hardware commitment.

Thinking Machines Lab declined to comment to TechCrunch beyond its official press release when asked about the co-founder situation and deal specifics. Murati's own statement in the announcement — "NVIDIA's technology is the foundation on which the entire field is built" — was characteristically measured, emphasizing partnership and mission over competitive posturing. Jensen Huang was more effusive: "Thinking Machines has brought together a world-class team to advance the frontier of AI."

Whether the remaining team constitutes a "world-class" research organization capable of justifying a gigawatt of frontier compute remains an open question — and one that will take years to answer definitively.

The New Normal: Mega-Scale Compute Commitments

Thinking Machines Lab's deal is the latest entry in an accelerating series of infrastructure commitments that are reshaping how AI companies think about competitive moats. Compute access — or more precisely, the ability to commit to compute access years in advance — has emerged as a primary proxy for credibility in the frontier AI space.

The sequence of mega-deals over the past twelve months makes the pattern clear: OpenAI's reported $300 billion Oracle deal, AMD and Meta's six-gigawatt custom GPU alliance, Microsoft's staggered multiyear Azure AI infrastructure commitments, and now NVIDIA investing directly in Thinking Machines Lab while locking in a gigawatt of future Vera Rubin sales. Each deal raises the floor for what serious frontier AI development is assumed to require.

The implications extend well beyond the companies directly involved. Every gigawatt committed to a handful of frontier labs is compute that will not be available to academic research institutions, mid-sized enterprises, or smaller AI startups, at least not at comparable prices or timelines. The concentration of AI infrastructure at the top of the market is becoming a structural feature, not a temporary imbalance. Washington's export control regime, which now gates AI chip sales at the government level, adds another layer of scarcity management to a market already straining against supply.

Meanwhile, NVIDIA's Vera Rubin supply chain is under its own pressure. As TTN reported earlier this week, Samsung and SK Hynix have locked up the HBM4 memory slots for Vera Rubin, cutting Micron from the flagship cycle entirely. That memory bottleneck could constrain the physical delivery timeline for Thinking Machines Lab's gigawatt commitment even as the ink dries on the announcement.

What to Watch

Several near-term developments will determine whether this deal represents a genuine inflection point for Thinking Machines Lab or a high-profile commitment that ultimately outruns the company's ability to execute.

Funding round close. Thinking Machines was reportedly seeking a new funding round valuing it at "tens of billions" before this deal. The NVIDIA investment, and the implied credibility of a gigawatt commitment, should accelerate that process. Watch for a formal announcement of the round's close and the disclosed valuation, which will signal how outside investors assess the company's trajectory after the departure of several co-founders.

Vera Rubin delivery timeline. NVIDIA expects Vera Rubin to begin shipping in the second half of 2026. Any manufacturing slippage — whether from HBM4 supply constraints, TSMC capacity, or packaging delays — would push Thinking Machines' deployment further into 2027 or beyond. The gap between a headline gigawatt commitment and actual operational compute could be significant.

Model release. The fundamental question remains: what will Thinking Machines Lab's actual AI models look like, and will they deliver on the differentiated "customizable AI" thesis? Tinker is a fine-tuning API, not a frontier model. For the gigawatt commitment to be justified, the company will need to demonstrate research and product capability that earns the kind of enterprise and institutional adoption that its compute scale implies.

For now, the deal cements two things. First: Mira Murati has secured the infrastructure foundation to compete at the frontier, regardless of the internal turbulence her lab has weathered. Second: NVIDIA's position as the indispensable, invested partner of virtually every major AI frontier effort is now essentially complete. The chipmaker doesn't just power the AI industry — it funds it, and it profits twice.