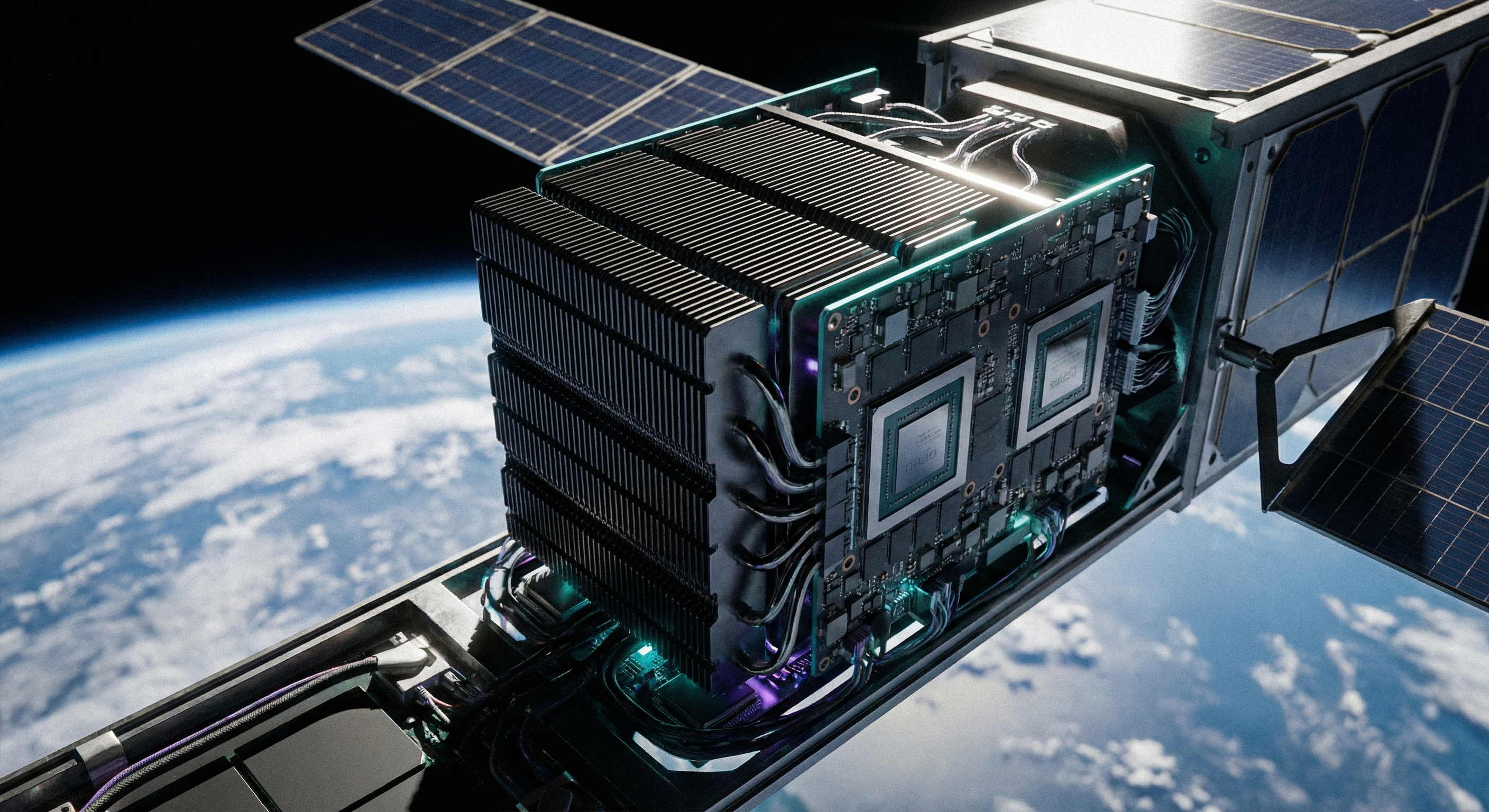

At GTC 2026, Jensen Huang made a statement that would have sounded absurd five years ago: NVIDIA is going to space. Not as a metaphor. Not as a branding exercise. As an actual product line designed to put AI data center compute into orbit. The NVIDIA Space-1 Vera Rubin Module, announced March 17 alongside the full Vera Rubin platform, delivers up to 25 times more AI compute than the H100 GPU for space-based inference, in a form factor engineered for the size, weight, and power constraints of orbital deployment. The data center, for the first time in its history, is leaving the ground.

What the Space-1 Vera Rubin Module Actually Is

The Space-1 is not a consumer product or a research prototype. It is a production-grade AI accelerator specifically engineered for orbital environments — what NVIDIA calls space computing. At its core, the module runs the same Rubin GPU architecture that powers NVIDIA's terrestrial data center platforms, adapted for the extreme constraints of operating in space: radiation tolerance, thermal management without conventional cooling systems, power efficiency under solar-only budgets, and physical compactness measured in kilograms, not rack units.

The headline performance number is significant. Compared to the NVIDIA H100 GPU, the workhorse of today's AI data centers, the Rubin GPU inside Space-1 delivers up to 25x more AI compute for space-based inference. For operators designing satellite constellations or orbital data center platforms, that means LLMs, foundation models, and advanced geospatial AI can run directly on-orbit, rather than raw data being downlinked to ground stations for processing.

The Three-Layer Platform Stack

NVIDIA's space computing announcement is not a single product — it is a layered platform designed to handle different classes of orbital workloads at different points in the mission stack.

At the top is the Space-1 Vera Rubin Module, purpose-built for orbital data centers (ODCs) that need hyperscale-class inference. Starcloud, one of NVIDIA's launch partners, describes its mission explicitly: "building purpose-designed orbital data centers to deliver cloud and AI infrastructure directly in space." Its CEO Philip Johnston put it plainly: "With NVIDIA, we can bring true hyperscale-class AI computing to orbit — processing data at the source, reducing downlink dependency and enabling customers to run training and inference workloads in space for the first time."

The second tier is the NVIDIA IGX Thor platform, providing industrial-grade durability and enterprise software support in a power-efficient package. IGX Thor supports real-time AI processing, functional safety, secure boot, and autonomous operation — capabilities that are essential for spacecraft that need to make decisions without waiting for ground control authorization. T-Mobile and other telecom operators are already deploying IGX Thor-class hardware at terrestrial base stations as AI-RAN platforms evolve; the orbital use case extends the same logic to satellite nodes.

The third tier is NVIDIA Jetson Orin, the ultra-compact, energy-efficient module optimized for satellites and edge spacecraft where size, weight, and power constraints are absolute. Kepler Communications — which is building a next-generation space data network — is using Jetson Orin to bring AI directly to its satellites. CEO Mina Mitry explained: "NVIDIA Jetson Orin brings advanced AI directly to our satellites, allowing us to intelligently manage and route data across our constellation and turning our network into a smarter, more efficient platform."

On the ground side, NVIDIA's RTX PRO 6000 Blackwell Server Edition GPU provides the high-throughput processing layer for geospatial intelligence workloads at ground stations. NVIDIA claims it delivers up to 100x faster performance versus legacy CPU-based batch systems when analyzing massive imagery archives — a critical capability for operators like Planet Labs that image the entire Earth daily and need to turn raw satellite data into actionable intelligence at speed.

The Partner Ecosystem Building Orbital Infrastructure

NVIDIA's space computing announcement is notable not just for the hardware, but for the breadth of commercial operators that have already committed to the platform. Six companies are publicly building on NVIDIA's orbital stack: Starcloud and Kepler Communications, quoted above, plus Aetherflux, Axiom Space, Sophia Space, and Planet Labs.

Aetherflux is pioneering solar-powered orbital compute — combining the Space-1 Vera Rubin Module with space-based solar energy to run AI at the edge in orbit autonomously. CEO Baiju Bhatt framed it as a new paradigm for power and compute: "NVIDIA Space-1 Vera Rubin Module delivers high-performance, energy-efficient AI at the edge in orbit, powered by solar energy."

Axiom Space, which is developing commercial space station modules intended to attach to the ISS, is integrating NVIDIA platforms for its commercial orbital infrastructure. Sophia Space is building modular, passively cooled, hosted computing platforms (essentially orbital co-location facilities) using Jetson Orin to enable customers to run applications directly in space without managing their own satellite infrastructure.

Planet Labs PBC represents perhaps the most immediately commercial application. Planet images the entire Earth every single day, generating a data challenge that requires the world's most advanced computing. By integrating NVIDIA's accelerated platform from space to ground, Planet is moving from raw pixels to actionable insights in near real time using NVIDIA CorrDiff AI models. CEO Will Marshall described this as "a revolutionary leap in planetary intelligence, helping humanity make smarter decisions at the speed of global change."

Why Orbital Compute Changes the Data Center Economics

The conventional data center model relies on a fundamental assumption: data travels to compute. Satellites and their sensors generate data, compress it, and beam it to ground stations, where it is processed and the results are routed onward. Every step in that chain adds latency, consumes bandwidth, and creates bottlenecks. For Earth observation, autonomous navigation, and intelligence applications, those delays are not just inconvenient; they can be operationally disqualifying.

Orbital compute flips that assumption. If an AI accelerator is running directly on the satellite, raw sensor data can be analyzed before it is ever transmitted. Only the conclusions — not the petabytes of raw imagery — need to be downlinked. That is not just an efficiency gain; it is a structural shift in how space-based data infrastructure operates. Bandwidth constraints that would make real-time AI analysis impossible from the ground become manageable when inference runs at the source.
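A minimal sketch of that downlink arithmetic, in Python. Every figure here (scene size, result size, link rate) is a hypothetical placeholder chosen only to show the ratio, not a number from NVIDIA or any operator, and the calculation ignores protocol overhead and ground-station contact windows.

```python
def downlink_seconds(payload_bytes: float, link_bits_per_second: float) -> float:
    """Time to transmit a payload over a link, ignoring overhead and contact windows."""
    if payload_bytes < 0 or link_bits_per_second <= 0:
        raise ValueError("payload must be >= 0 and link rate must be > 0")
    return payload_bytes * 8 / link_bits_per_second


# Hypothetical inputs (assumptions, not measured values).
RAW_SCENE_BYTES = 2e9   # one 2 GB raw image scene
RESULT_BYTES = 50e3     # 50 kB of detections produced by on-orbit inference
LINK_BPS = 200e6        # 200 Mbit/s downlink

raw_time = downlink_seconds(RAW_SCENE_BYTES, LINK_BPS)
result_time = downlink_seconds(RESULT_BYTES, LINK_BPS)
reduction = RAW_SCENE_BYTES / RESULT_BYTES

print(f"raw scene:      {raw_time:.3f} s")
print(f"results only:   {result_time:.3f} s")
print(f"data reduction: {reduction:,.0f}x")
```

With these assumed inputs, the raw scene occupies the link for 80 seconds while the inference results take about two milliseconds. The absolute numbers are illustrative; the point is that the ratio scales with how aggressively on-orbit inference can summarize the raw data.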

For the data center industry, the significance is strategic. Orbital data centers represent a new category of infrastructure that does not compete with terrestrial hyperscalers — it extends the compute stack into an environment terrestrial operators cannot reach. Companies like Starcloud are explicitly positioning orbital compute as a seamless extension of the global cloud, not a replacement for it.

The Real Constraints of Computing in Space

NVIDIA's announcement is a genuine technical milestone, but the challenges ahead are significant and should not be understated.

Power remains the binding constraint. On Earth, a rack-scale Vera Rubin cluster consumes roughly 600 kilowatts. A satellite's solar array typically generates kilowatts, not hundreds of kilowatts. Space-1 is engineered for SWaP (size, weight, and power) constraints, but the fundamental tradeoff between compute density and orbital power budgets will limit the scale of workloads that can be run in orbit for the foreseeable future. Aetherflux's solar-powered orbital compute pitch is directionally correct but depends on significant advances in space-based solar energy before it can rival terrestrial scale.
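To put the power gap in physical terms, the sketch below estimates the solar array area needed to supply a given load in Earth orbit. The solar constant of about 1361 W/m² is a standard value; the 30% cell efficiency is an assumption, and the calculation deliberately ignores eclipse periods, pointing losses, degradation, and power conversion, so real arrays would need to be larger.

```python
SOLAR_CONSTANT_W_PER_M2 = 1361.0  # approximate solar irradiance near Earth


def array_area_m2(load_watts: float, cell_efficiency: float) -> float:
    """Ideal sun-facing array area for a continuous load (no eclipse or losses)."""
    if load_watts < 0:
        raise ValueError("load must be non-negative")
    if not 0 < cell_efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return load_watts / (SOLAR_CONSTANT_W_PER_M2 * cell_efficiency)


ASSUMED_EFFICIENCY = 0.30  # assumption for illustration

# The roughly 600 kW terrestrial figure cited above, versus a hypothetical 10 kW satellite bus.
for label, watts in [("600 kW cluster", 600e3), ("10 kW satellite", 10e3)]:
    area = array_area_m2(watts, ASSUMED_EFFICIENCY)
    print(f"{label}: about {area:,.0f} m^2 of array")
```

Under these assumptions the 600 kW load needs well over a thousand square meters of array, which is why orbital compute in the near term is sized around what a satellite can power rather than around what a terrestrial rack consumes.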

Thermal management is fundamentally different. Terrestrial data centers rely on air cooling, liquid cooling, or, increasingly, immersion cooling to dissipate heat. In orbit, convective cooling is impossible because there is no air to carry heat away. Heat must be radiated, which requires large radiator surfaces for every kilowatt rejected and so limits sustained compute density. Sophia Space's passive cooling approach is one response to this; scaling it to Space-1-class workloads is an engineering problem without an obvious near-term solution.
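The radiative limit can be sketched with the Stefan-Boltzmann law, which gives the heat a surface radiates as emissivity times the Stefan-Boltzmann constant times area times temperature to the fourth power. The example below solves that for area. The 10 kW heat load, 300 K radiator temperature, and 0.9 emissivity are assumptions for illustration, and the model is idealized: a single-sided radiator facing deep space, with no absorbed sunlight or Earth infrared.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_watts: float, radiator_temp_k: float,
                     emissivity: float = 0.9) -> float:
    """Ideal radiator area needed to reject heat_watts at a given surface temperature."""
    if heat_watts < 0 or radiator_temp_k <= 0:
        raise ValueError("heat must be >= 0 and temperature must be > 0 K")
    if not 0 < emissivity <= 1:
        raise ValueError("emissivity must be in (0, 1]")
    flux_w_per_m2 = emissivity * STEFAN_BOLTZMANN * radiator_temp_k ** 4
    return heat_watts / flux_w_per_m2


# Hypothetical 10 kW compute payload at two radiator temperatures.
for temp_k in (300.0, 350.0):
    area = radiator_area_m2(10_000.0, temp_k)
    print(f"{temp_k:.0f} K radiator: about {area:.1f} m^2")
```

Because radiated power grows with the fourth power of temperature, running the radiator hotter shrinks it quickly, but the electronics then have to tolerate the higher temperature. That tension is the design tradeoff passive cooling schemes have to manage.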

Radiation hardening adds cost and complexity. Commercial semiconductor processes optimized for terrestrial performance are not designed for the ionizing radiation environment of low Earth orbit. NVIDIA has not disclosed the radiation tolerance specifications for Space-1, but adapting Rubin GPU silicon for orbital operation involves design tradeoffs that do not exist in datacenter products.

Launch economics dictate everything. SpaceX's Falcon 9 brings costs down to roughly $2,700 per kilogram to LEO — dramatically lower than a decade ago, but still a substantial premium over terrestrial rack space. For Starcloud and Sophia Space's orbital co-location pitch to be commercially viable, they need both launch cost reductions and sufficient revenue per kilogram of deployed compute to justify the premium over equivalent terrestrial capacity.
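The launch premium can be roughed out as follows. The $2,700 per kilogram figure is the one quoted above; the 500 kg payload mass and five-year service life are hypothetical, and the calculation covers launch cost only, leaving out hardware, insurance, operations, and any terrestrial comparison.

```python
USD_PER_KG_TO_LEO = 2700.0  # launch price per kilogram quoted in the text


def launch_cost_usd(mass_kg: float, usd_per_kg: float = USD_PER_KG_TO_LEO) -> float:
    """Launch cost for a payload of the given mass."""
    if mass_kg < 0 or usd_per_kg < 0:
        raise ValueError("mass and price must be non-negative")
    return mass_kg * usd_per_kg


def breakeven_revenue_per_kg_year(usd_per_kg: float, service_years: float) -> float:
    """Annual revenue per deployed kilogram needed just to recover launch cost."""
    if service_years <= 0:
        raise ValueError("service life must be positive")
    return usd_per_kg / service_years


PAYLOAD_KG = 500.0    # hypothetical compute payload mass
SERVICE_YEARS = 5.0   # hypothetical service life

print(f"launch cost: ${launch_cost_usd(PAYLOAD_KG):,.0f}")
print(f"break-even:  ${breakeven_revenue_per_kg_year(USD_PER_KG_TO_LEO, SERVICE_YEARS):,.0f} per kg per year")
```

For these assumed inputs, launch alone comes to $1.35 million, or $540 per kilogram per year over the service life. Any orbital co-location business has to clear that before the cost of the hardware itself is counted.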

Jensen Huang's Framing and What It Signals

Huang's GTC announcement was deliberate in its ambition. "Space computing, the final frontier, has arrived. As we deploy satellite constellations and explore deeper into space, intelligence must live wherever data is generated," he said at the keynote. The phrase "intelligence must live wherever data is generated" is a mission statement for a new infrastructure paradigm — one where compute is not centralized but distributed to every point where sensors touch the physical world, including orbit.

For NVIDIA, the strategic logic extends the same playbook that made the company dominant in terrestrial AI: own the silicon at every level of the stack. If robots and autonomous machines need Jetson for edge AI, and data centers need Blackwell for training, then orbital platforms need Space-1 for whatever comes next. The addressable market is smaller than terrestrial hyperscalers, but NVIDIA's margins in specialized, high-performance compute are not driven by volume. They are driven by having no viable alternative.

What Data Center Operators Should Watch

For operators managing terrestrial AI infrastructure, the immediate implications of Space-1 are limited. The near-term customer base for orbital compute is a specialized set of Earth observation, communications, and space logistics companies, not enterprises deciding between cloud regions and orbital deployment.

But the medium-term picture is more interesting. As geospatial intelligence workloads grow — driven by government contracts, climate monitoring, agricultural analytics, and precision logistics — the demand for real-time Earth observation AI will scale. That demand will, in turn, drive investment in the orbital compute infrastructure to serve it. The operators building that infrastructure today — Starcloud, Sophia Space, Kepler, Axiom — are staking early claims on an infrastructure category that does not yet have an established market structure.

The DSX AI Factory reference design announced alongside Space-1 at GTC is also relevant context. NVIDIA is simultaneously publishing blueprints for building terrestrial AI factories more efficiently while extending its hardware platform to orbit. The message to the data center industry is clear: the definition of "infrastructure" is expanding, and NVIDIA intends to supply the silicon for every version of it.

Whether orbital data centers scale to commercial relevance within the next five years depends on variables — launch costs, power technology, regulatory frameworks for space-based compute — that are largely outside NVIDIA's control. But Space-1 is now a shipping product, not a roadmap item. The infrastructure for compute in orbit exists. The question is whether the economics will follow.