For three decades, Arm Holdings has been one of the most consequential companies in technology without ever making a single chip. On Tuesday, that changed. At its Arm Everywhere event in San Francisco, the British semiconductor designer unveiled the AGI CPU — its first proprietary, production-ready silicon — a 136-core server processor built on TSMC's 3nm process and aimed squarely at the agentic AI workloads reshaping modern data centers. The customer list alone signals how serious this is: Meta, OpenAI, Cloudflare, Cerebras, SAP, F5, SK Telecom, and Rebellions are already on board.

A Business Model Transformed

Arm's entire history has been built on a deceptively elegant model: design world-class processor architectures, license that intellectual property to manufacturers, and collect royalties while the rest of the chip industry does the heavy lifting of manufacturing. The Arm Everywhere keynote marks the most significant strategic pivot in the company's 35-year history: from IP licensor to silicon vendor.

"We think that the CPU is going to be fundamental to ultimately achieving AGI," Mohamed Awad, Arm's Executive Vice President of Cloud AI, told reporters at the event. That framing — attaching the AGI label to a CPU rather than a large language model — is deliberate and revealing. Where most of the industry has focused on GPU horsepower as the path to artificial general intelligence, Arm is making a different argument: the bottleneck isn't model capacity, it's orchestration.

The AGI CPU is described by Arm as "the first production silicon from Arm, designed for AI infrastructure at scale" — a processor built not to run AI models itself, but to manage the growing armies of AI agents that coordinate, reason, and execute tasks across cloud infrastructure. In Arm's framing, the CPU is the conductor; the GPU is still the orchestra.

136 Cores, Clean-Sheet Design

The AGI CPU's technical specifications reflect several deliberate departures from conventional server CPU design philosophy.

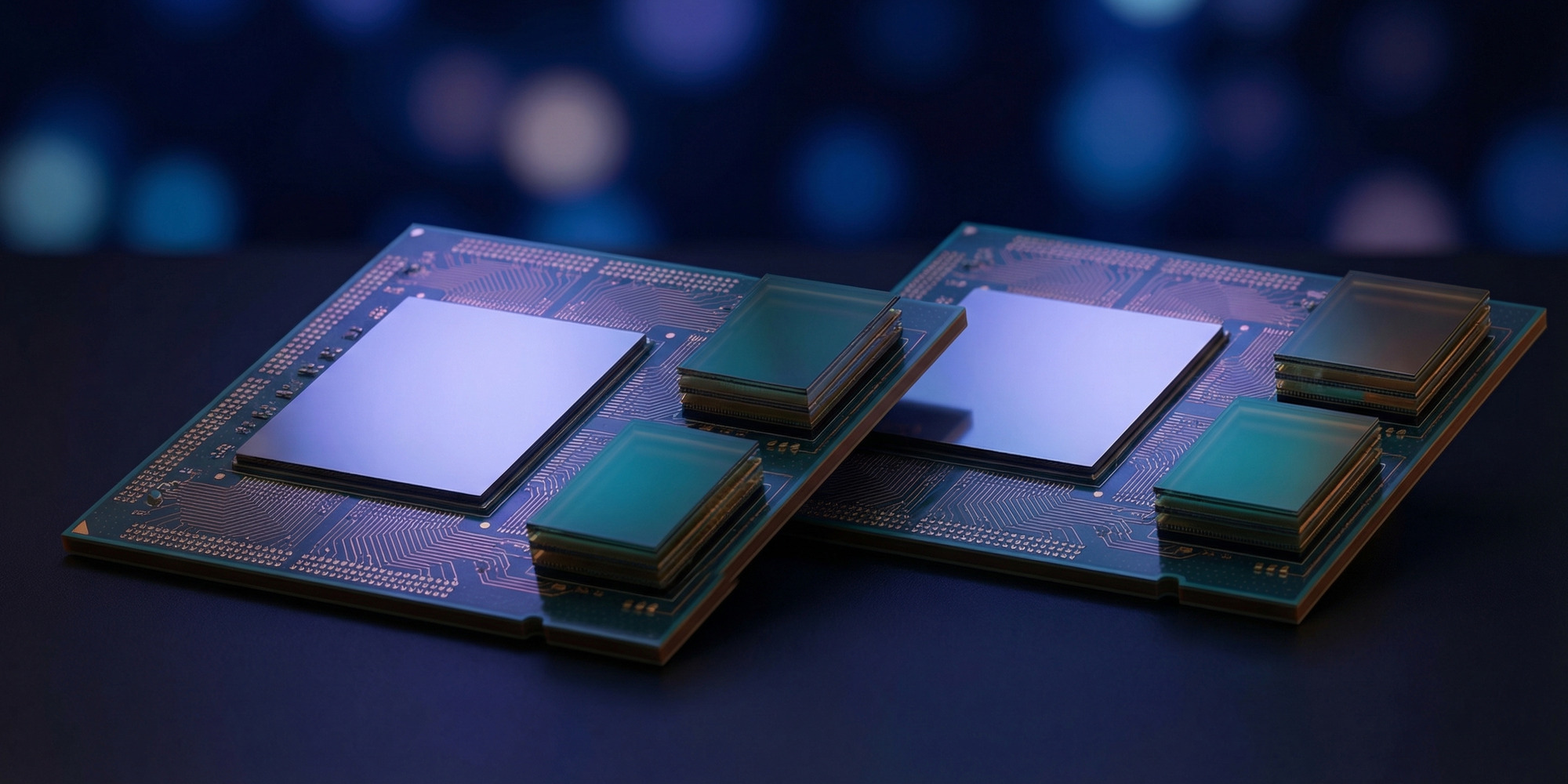

At its core, the chip is a 300-watt, 136-core part fabbed on TSMC's 3nm process, spread across two dies. Each core is based on Arm's Neoverse V3 architecture, the successor to the Neoverse V2 design underlying Nvidia's Grace CPUs and Amazon's Graviton4, and is clocked at up to 3.7 GHz (3.2 GHz base). Each core gets 2 MB of dedicated L2 cache, complemented by a 128 MB shared system-level cache (SLC) that helps reduce main memory pressure for AI agent workloads that frequently reuse the same context windows and code sequences.

One of the more counterintuitive design decisions: Arm chose to forgo simultaneous multithreading (SMT) entirely. One thread per core. Awad explained the logic — deterministic, single-threaded performance scaling matters more for agentic systems than raw throughput, since these workloads demand predictable latency rather than maximum parallelism. "The way that legacy CPUs had been built worried about things like support for legacy applications," Awad said. "We specifically didn't want to add things that weren't going to be 100 percent utilized in the mission of this device. This is a clean sheet design meant to address all that."

For memory, the chip runs 12 channels of DDR5 at speeds up to 8800 MT/s, delivering 825 GB/s of aggregate bandwidth, or roughly 6 GB/s per core. The memory and I/O subsystems are integrated on the same die as the compute cores rather than split off into a separate I/O die, which minimizes latency. The trade-off: each socket presents as two separate NUMA domains to the operating system, a wrinkle that software architects will need to account for but that Arm argues is worth the latency win.
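
As a rough sanity check on those figures, the theoretical peak of a DDR5 configuration is the channel count times the transfer rate times the bytes moved per transfer. The sketch below assumes a 64-bit (8-byte) data path per channel, which is typical for DDR5 DIMMs but is not something Arm has stated; the function and constant names are illustrative. Under that assumption the theoretical peak lands slightly above the 825 GB/s Arm quotes, and either figure works out to roughly 6 GB/s per core.

```python
# Back-of-the-envelope memory bandwidth check for the figures quoted above.
# Assumption (not from Arm): each DDR5 channel has a 64-bit (8-byte) data path.

CORES = 136
CHANNELS = 12
TRANSFER_RATE_MTS = 8800     # mega-transfers per second, per channel
BYTES_PER_TRANSFER = 8       # assumed 64-bit channel width
QUOTED_GBS = 825             # aggregate bandwidth quoted in the article


def peak_bandwidth_gbs(channels: int, rate_mts: int, bytes_per_transfer: int) -> float:
    """Theoretical peak in GB/s: channels * MT/s * bytes per transfer / 1000."""
    return channels * rate_mts * bytes_per_transfer / 1000


theoretical = peak_bandwidth_gbs(CHANNELS, TRANSFER_RATE_MTS, BYTES_PER_TRANSFER)
print(f"theoretical peak: {theoretical:.1f} GB/s")
print(f"quoted per core:  {QUOTED_GBS / CORES:.2f} GB/s")
print(f"peak per core:    {theoretical / CORES:.2f} GB/s")
```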

Connectivity is equally future-focused: 96 lanes of PCIe 6.0 and support for CXL 3.0, enabling direct memory pooling across CPU and accelerator resources — increasingly important as the industry moves toward disaggregated memory architectures for large-scale AI deployments.

Density That Rivals and Surpasses Nvidia

Where the AGI CPU's story becomes most compelling is at the rack level. Arm has validated two distinct rack configurations optimized for different deployment scenarios.

The first is an air-cooled 36 kW rack containing 30 compute blades, yielding 8,160 CPU cores per rack. This is a conventional-density option for operators not yet ready to invest in liquid cooling infrastructure — but it's far from the headline spec.

The second configuration is a liquid-cooled 200 kW rack housing 42 eight-node servers, reaching an extraordinary 45,696 cores per rack. For context, Nvidia's Vera ETL256 CPU rack — itself announced just last week at GTC 2026 — tops out at 22,528 cores. Arm's liquid-cooled configuration packs more than twice the core count into a comparable footprint.
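
The rack-level core counts follow directly from the per-chip figure. The sketch below reproduces them, assuming one CPU per node in the liquid-cooled rack and two CPUs per blade in the air-cooled rack; both are inferences from the published totals rather than details Arm has confirmed, and the helper name is illustrative. It also divides by the rack power budget (not measured draw) to compare cores per kilowatt.

```python
# Reproduce the rack-level core counts quoted above.
# Assumptions (inferred from the totals, not confirmed by Arm):
#   - liquid-cooled rack: one CPU per node
#   - air-cooled rack: two CPUs per blade

CORES_PER_CPU = 136
VERA_RACK_CORES = 22_528   # Nvidia Vera CPU rack figure quoted in the article


def rack_cores(units: int, cpus_per_unit: int) -> int:
    """Total cores in a rack of `units` blades or nodes."""
    return units * cpus_per_unit * CORES_PER_CPU


air_cooled = rack_cores(units=30, cpus_per_unit=2)         # 30 blades
liquid_cooled = rack_cores(units=42 * 8, cpus_per_unit=1)  # 42 eight-node servers

print(f"air-cooled:    {air_cooled:,} cores, {air_cooled / 36:.0f} cores/kW")
print(f"liquid-cooled: {liquid_cooled:,} cores, {liquid_cooled / 200:.0f} cores/kW")
print(f"vs. Vera rack: {liquid_cooled / VERA_RACK_CORES:.2f}x")
```

On these numbers the two configurations land within a few cores per kilowatt of each other, which suggests the liquid-cooled rack's advantage is floor-space density rather than efficiency per kilowatt of rack budget.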

This density advantage is central to Arm's pitch. Agentic AI systems (frameworks that spin up hundreds or thousands of individual agent processes to complete complex, multi-step tasks) are fundamentally CPU-hungry in ways that distinguish them from GPU-centric model training or inference. Each agent is its own thread of execution, with its own memory state, its own I/O interactions, and its own scheduling demands. Delivering more than 45,000 cores in a single rack changes the economics of running these workloads at hyperscale.

The Customer Roster and Its Implications

Arm's decision to announce with a dense roster of committed customers is a calculated move to preempt questions about market readiness. Meta is the flagship name: the social media giant is already a major Arm consumer through Nvidia's Grace CPUs, has plans to deploy Vera, and is now adding Arm's own silicon to the mix. Meta's involvement signals that the chip isn't experimental; it's being validated for production-scale deployment later this year.

OpenAI's inclusion is perhaps more surprising. OpenAI has historically been an Nvidia-first shop at the infrastructure layer, but the company's rapidly expanding agentic AI portfolio — including its operator-class AI agents — creates exactly the kind of CPU demand Arm is targeting. Cerebras, which builds some of the largest custom AI accelerators in the world, is a logical customer as well: its accelerators need powerful host CPUs for management and orchestration, and Arm's density pitch is directly relevant.

The Cloudflare and F5 signings are telling for different reasons — both are infrastructure and networking companies whose AI-adjacent workloads (intelligent routing, bot mitigation, API management) are increasingly agent-driven but don't require GPU horsepower. For them, the AGI CPU is simply a better general-purpose server processor. SK Telecom and Rebellions round out a roster that spans cloud, telco, and custom silicon customers — a deliberate signal that this chip is designed for diverse deployment rather than a single vertical.

On the OEM side, Lenovo is already building 19-inch rack systems around the chip, which suggests it will be generally available through standard enterprise channels rather than only directly from Arm. That's a crucial distribution detail for broad adoption.

The Competitive Landscape — and the Tension with Licensees

Arm entering the silicon market creates an uncomfortable dynamic with its own licensing ecosystem. Companies like Ampere Computing — currently the primary independent Arm server CPU vendor — suddenly have Arm as a direct competitor. Qualcomm, which has built a data center CPU strategy around Arm's Neoverse cores, faces the same tension. Even Nvidia, which licenses Arm IP for its Grace and Vera CPUs, is now competing with the very company whose architecture underpins those products.

Arm's response to this tension will be watched closely. The company has historically been scrupulous about maintaining neutrality among its licensees, and that neutrality is the source of its market power. Building its own chip to compete directly with theirs tests that neutrality in ways that could reshape licensing relationships, royalty negotiations, and partnership dynamics across the industry.

Against Intel and AMD, the comparison is more straightforward. Arm claims the AGI CPU delivers double the performance per watt of comparable x86 processors for agentic workloads — a claim it hasn't fully substantiated with third-party benchmarks, but one consistent with the efficiency advantages that have driven Arm's rise in hyperscale data centers through Graviton, Grace, and Ampere deployments. Intel's Xeon and AMD's EPYC are still the volume leaders in enterprise servers, but the efficiency gap has been narrowing every year.

The Bet on Agentic AI

Arm is making a specific, falsifiable prediction: that agentic AI systems will drive a four-fold increase in CPU demand in the data center. That thesis is already being stress-tested in real infrastructure. The explosion of AI coding agents, autonomous research agents, and multi-agent orchestration frameworks has driven measurable growth in CPU provisioning at hyperscalers over the past 18 months — not because models are getting more CPU-intensive, but because the scaffolding around those models is.

Each reasoning step in a complex agentic task is a separate execution context: spawning a subprocess, calling an API, evaluating a result, deciding a next step. The patterns look less like traditional HPC workloads and more like highly parallel, latency-sensitive distributed systems. GPUs can't help here; what these workloads need is exactly what the AGI CPU is designed to deliver — massive core counts, high per-core memory bandwidth, and predictable single-threaded performance.
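
To make that execution pattern concrete, here is a minimal sketch of a fleet of independent agents, each running its own act, evaluate, decide loop with private state. Everything in it is hypothetical: `call_tool`, `run_agent`, `run_fleet`, and the toy stop rule are illustrative stand-ins, not part of any Arm or vendor software. A thread pool is used only to keep the example self-contained; in standard CPython builds threads share one interpreter lock, so a real deployment would more likely use separate processes or services to occupy many cores.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def call_tool(agent_id: int, step: int) -> int:
    """Hypothetical stand-in for a subprocess launch or an API call."""
    return agent_id + step


def run_agent(agent_id: int, max_steps: int = 4) -> list[int]:
    """One agent: act, evaluate the result, decide whether to continue."""
    history: list[int] = []                 # state private to this agent
    for step in range(max_steps):
        result = call_tool(agent_id, step)  # act
        history.append(result)              # record the outcome
        if result >= agent_id + 2:          # evaluate and decide (toy stop rule)
            break
    return history


def run_fleet(n_agents: int) -> list[list[int]]:
    """Run agents concurrently, with one worker per available core."""
    workers = os.cpu_count() or 1
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(run_agent, range(n_agents)))


if __name__ == "__main__":
    for agent_id, history in enumerate(run_fleet(8)):
        print(agent_id, history)
```

The point of the sketch is the shape of the work: many short, independent control loops that spend their time on scheduling and I/O rather than on dense arithmetic.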

Whether the AGI CPU's branding will age well, meaning whether actual AGI arrives before the chip's commercial lifespan ends and what it will demand computationally if it does, remains an open question. What's not in question is that Arm has staked its first silicon product on a vision of AI infrastructure that is already consuming more CPU than the industry anticipated. That might be the most credible bet Arm could have made.

The chip is expected to reach general availability later in 2026.