While the industry debates GPU shortages and power grid capacity, a quieter supply chain crisis has been tightening its grip on AI data center buildouts: batteries. Panasonic, one of the world's largest battery manufacturers, outlined plans this week to triple its lithium-ion cell production capacity for data center applications, targeting ¥800 billion (~$5 billion) in annual battery sales by fiscal year 2029, roughly four times its current level of about ¥200 billion. The kicker: customers have already committed to purchasing roughly 80 percent of everything Panasonic needs to sell to hit that target. The battery supply chain, in other words, is already largely spoken for, and AI infrastructure buyers who haven't locked in relationships with major suppliers are at risk of being left out.

The Component Nobody Talks About

The data center world has a well-documented obsession with three bottlenecks: GPUs, power interconnections, and cooling systems. Those constraints are real and have generated enormous coverage — including in our own reporting on OpenAI's fusion energy gamble, the $690B hyperscaler sprint hitting the grid wall, and the HBM and DRAM supply squeeze running through 2028. But the battery shortage sits largely outside that conversation, even though it affects every single data center project regardless of tier, geography, or purpose.

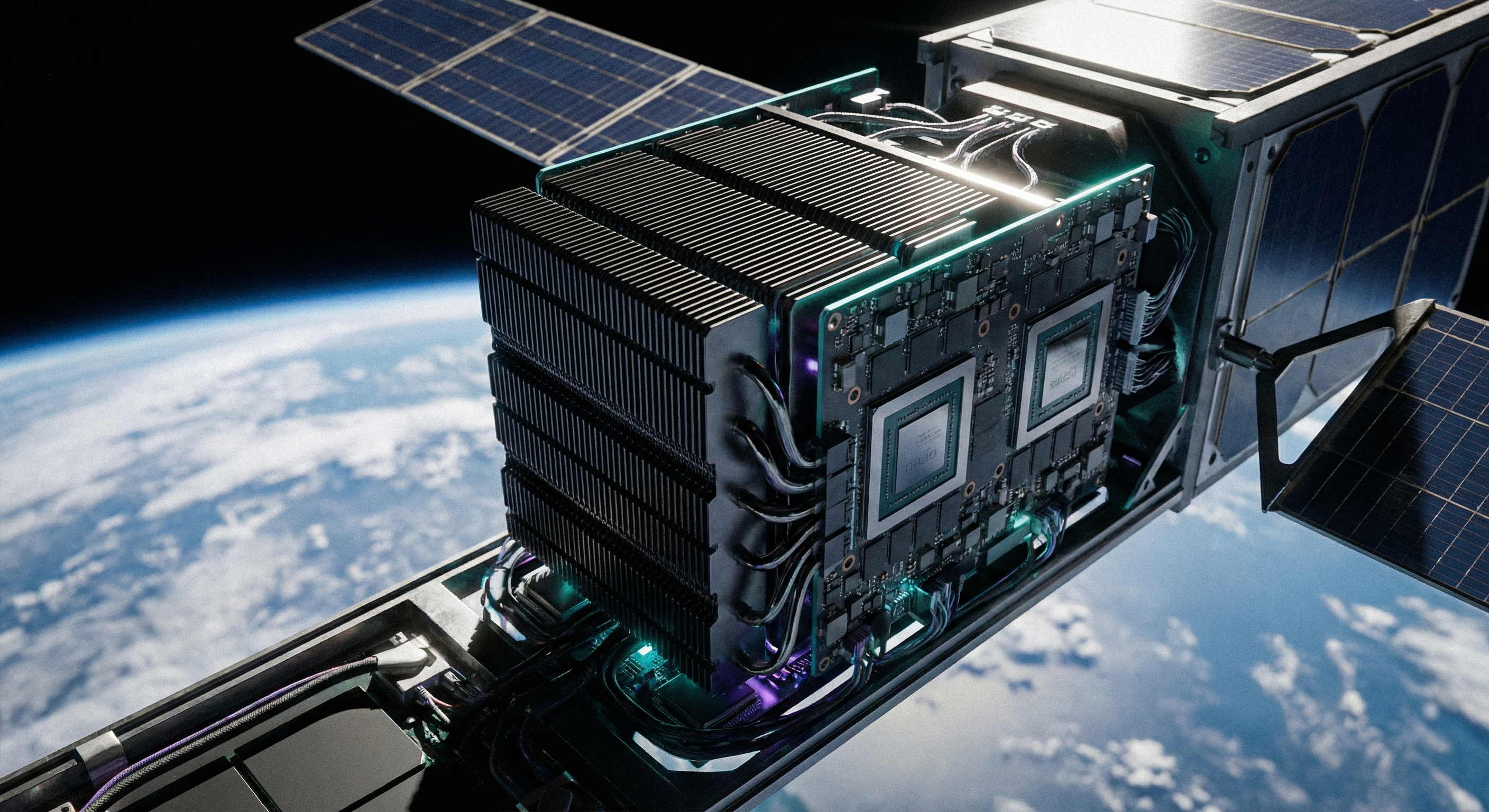

Every data center — from a 200-kilowatt colocation cage to a 500-megawatt hyperscale AI campus — requires uninterruptible power supply (UPS) systems to bridge the gap between utility outages and generator startup. Panasonic's systems sit in rack-mounted enclosures directly alongside servers and compute infrastructure, keeping them operational for the critical minutes needed to either restore utility power or complete a clean workload shutdown. That's a narrow but non-negotiable function: no UPS battery, no data center operation.

That demand was already expanding before the AI boom arrived. The entry of AI-optimized hardware has made it explosive. Nvidia's Blackwell GB200 NVL72 rack configuration operates at up to 120 kilowatts per rack. A 40-rack Vera Rubin POD, the next-generation AI factory architecture Nvidia launched this year, operates at aggregate power densities that would have seemed implausible for a mid-tier data center just three years ago. The UPS infrastructure required to back that kind of load is not the same equipment that powered a row of 10 kW server racks. It's bigger, more sophisticated, and sourced from a supply chain that was nowhere near ready for what AI would demand of it.

Panasonic's Pivot: From Automotive to Compute

Panasonic's announcement this week offers the clearest public evidence yet of how dramatically the battery supply chain is reorienting around AI. The company outlined plans to triple production capacity for lithium-ion cells at its Japanese factories using two levers: expanding existing dedicated facilities and adapting some of its automotive manufacturing lines to make data center batteries instead. It is also evaluating whether to retool its Kansas plant, previously focused on EV battery supply, to serve data center demand.

The target is ambitious: ¥800 billion (approximately $5 billion) in battery revenue during fiscal year 2029, up from roughly ¥200 billion today. Reaching that number would require Panasonic to scale its data center battery business by a factor of four in under four years. The company says it aims for 80 percent market share in the data center battery segment to get there.

But the most significant data point is the pre-committed order book. Panasonic disclosed that customers have already agreed to purchase approximately 80 percent of the output needed to reach its 2029 revenue target. Translated into supply chain terms, that means the remaining 20 percent of Panasonic's scaled production is all that will be available on the open market for buyers who haven't already locked in long-term supply agreements. In a market where hyperscalers and major colo operators are buying years ahead of capacity deployment, that window is narrow and will almost certainly narrow further.
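The arithmetic behind those figures is simple enough to sketch. The snippet below uses only the numbers quoted above (roughly ¥200 billion today, an ¥800 billion target, about 80 percent pre-committed); the four-year horizon used for the growth rate is an assumption, since the timeline is described only as "under four years," and the function names are illustrative.

```python
# Figures quoted in the article, in billions of yen.
CURRENT_SALES_BN_YEN = 200.0
TARGET_SALES_BN_YEN = 800.0
COMMITTED_SHARE = 0.80


def order_book_split(target_bn, committed_share):
    """Return (committed, uncommitted) sales in billions of yen."""
    committed = target_bn * committed_share
    return committed, target_bn - committed


def implied_annual_growth(current_bn, target_bn, years):
    """Compound annual growth rate needed to move from current to target."""
    return (target_bn / current_bn) ** (1.0 / years) - 1.0


committed, uncommitted = order_book_split(TARGET_SALES_BN_YEN, COMMITTED_SHARE)
growth_multiple = TARGET_SALES_BN_YEN / CURRENT_SALES_BN_YEN

# ASSUMPTION: a four-year horizon. The article only says "under four years",
# so the real required growth rate would be somewhat higher.
cagr = implied_annual_growth(CURRENT_SALES_BN_YEN, TARGET_SALES_BN_YEN, years=4)

print(f"Growth multiple: {growth_multiple:.1f}x")
print(f"Pre-committed: {committed:.0f}bn yen, uncommitted: {uncommitted:.0f}bn yen")
print(f"Implied annual growth over 4 years: {cagr:.1%}")
```

On these inputs, roughly ¥640 billion of the target is already spoken for and about ¥160 billion remains, with sales needing to grow on the order of 40 percent a year to get there.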

The automotive pivot is notable for several reasons beyond the raw numbers. Panasonic has been one of the primary battery cell suppliers for Tesla's Gigafactory Nevada. The company's deep expertise in high-density cylindrical lithium-ion production is being redirected toward data center applications — a sign of how significantly EV demand has moderated from its 2022–2023 peak while AI infrastructure demand has surged in the opposite direction. When a manufacturer of this scale reallocates automotive tooling, it is not making a tactical adjustment — it is recognizing a structural shift in where industrial battery demand is concentrating.

What AI Does to UPS Requirements

To understand why AI workloads hit the UPS market so hard, it helps to understand what has changed at the workload level. Traditional enterprise computing is inherently tolerant of graceful degradation. A database query can wait; a virtualized workload can migrate; a stateless web server can restart. The business impact of a brief power interruption is meaningful but recoverable.

Large-scale AI training is categorically different. A distributed training run across hundreds or thousands of GPUs represents a tightly synchronized computational state. A power interruption lasting even a fraction of a second can force an unclean shutdown, which can corrupt model checkpoints and require costly rollbacks to the last clean save point. For runs that take days or weeks and cost millions of dollars in compute time, that risk profile demands power protection systems that can hold the entire cluster at full load without interruption for the duration of generator startup and transfer, typically between 30 seconds and several minutes depending on facility design.

Panasonic's UPS systems are specifically designed to cover this gap: rack-mounted battery enclosures that sit among servers and can also serve a secondary function of storing grid energy and releasing it during peak pricing windows, helping data centers manage electricity costs alongside providing backup protection. This dual-function value proposition is increasingly attractive to hyperscalers who are operating under intense pressure to reduce both infrastructure cost and power price exposure.

The scale of battery demand compounds quickly at AI compute densities. A single 40-rack Vera Rubin POD drawing roughly 4.8 megawatts in aggregate (about 120 kilowatts per rack) requires battery banks of corresponding scale. A hyperscale campus deploying dozens of such clusters needs UPS capacity on the order of a hundred megawatts or more, orders of magnitude larger than the enterprise UPS deployments that have historically defined the market Panasonic is now racing to serve.
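A back-of-the-envelope version of that sizing can be sketched as follows. It assumes every rack in the POD draws the 120 kilowatts quoted for the GB200 NVL72, uses the 30-second-to-several-minute ride-through window mentioned earlier, and treats a 24-POD campus as a purely hypothetical example. It computes delivered energy only; real designs add margin for battery aging, discharge-rate limits, conversion losses, and redundancy, none of which are modeled here.

```python
RACKS_PER_POD = 40
KW_PER_RACK = 120.0  # ASSUMPTION: GB200 NVL72 upper figure applied to every rack


def pod_load_kw(racks, kw_per_rack):
    """Aggregate electrical load of one POD in kilowatts."""
    return racks * kw_per_rack


def ride_through_energy_kwh(load_kw, seconds):
    """Energy the UPS must deliver to hold the load for the given time."""
    return load_kw * seconds / 3600.0


pod_kw = pod_load_kw(RACKS_PER_POD, KW_PER_RACK)
print(f"POD load: {pod_kw / 1000:.1f} MW")

# Ride-through windows spanning "30 seconds to several minutes".
for seconds in (30, 120, 300):
    energy = ride_through_energy_kwh(pod_kw, seconds)
    print(f"  {seconds:>3d} s at full load -> {energy:.0f} kWh delivered")

# ASSUMPTION: a hypothetical campus of 24 PODs ("dozens of such clusters").
HYPOTHETICAL_PODS = 24
campus_mw = HYPOTHETICAL_PODS * pod_kw / 1000.0
print(f"Campus load for {HYPOTHETICAL_PODS} PODs: {campus_mw:.1f} MW")
```

The point of the sketch is that the hard constraint is power rather than stored energy: a few hundred kilowatt-hours per POD is modest, but it has to be delivered at multi-megawatt rates within moments of an outage.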

Supercapacitors: The Fast-Response Complement

One of the more technically interesting disclosures in Panasonic's announcement is its concurrent investment in supercapacitor technology. Panasonic says it will use supercapacitors to "absorb fluctuations in power load" and plans to begin shipping them from its factories during fiscal year 2027 — two years before the full battery ramp reaches scale.

Supercapacitors occupy a distinct niche in the power protection stack. Unlike batteries, which store energy through electrochemical reactions, supercapacitors store charge electrostatically, allowing them to charge and discharge far faster and with far higher cycle durability, potentially hundreds of thousands of cycles without significant degradation. They are well suited to handling the sub-millisecond power spikes and micro-interruptions that occur when utility power wavers and before battery systems fully engage.

In a conventional UPS architecture, the battery is asked to perform two very different functions: respond to sub-millisecond power transients at the moment of an outage, and then sustain continuous high-current output for several minutes while generators start. No single battery chemistry is perfectly optimized for both. Supercapacitors excel at the first task and batteries at the second, which is the case for a hybrid architecture that pairs the two. Panasonic's FY2027 supercapacitor roadmap suggests such systems will start reaching commercial data center deployments at meaningful scale within the next two years.
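One common way to reason about this division of labor is a frequency-based power split: the battery follows a smoothed (low-pass filtered) version of the load, and the supercapacitor covers whatever is left. The sketch below is a generic illustration of that idea, not a description of Panasonic's design; the 100 kW load step, the 0.1 ms sample interval, and the 2 ms battery time constant are all hypothetical values chosen for readability.

```python
def split_load(load_kw, dt_ms, battery_tau_ms):
    """Split a load trace between battery and supercapacitor.

    The battery tracks a first-order low-pass of the load; the
    supercapacitor supplies (or absorbs) the fast remainder.
    """
    alpha = dt_ms / (battery_tau_ms + dt_ms)
    battery, supercap = [], []
    level = 0.0  # battery output starts at zero before the outage
    for demand in load_kw:
        level += alpha * (demand - level)
        battery.append(level)
        supercap.append(demand - level)
    return battery, supercap


# HYPOTHETICAL scenario: utility power drops and the backup path suddenly
# has to carry a constant 100 kW. 400 samples at 0.1 ms covers 40 ms.
load = [100.0] * 400
battery, supercap = split_load(load, dt_ms=0.1, battery_tau_ms=2.0)

for i in (0, 9, 49, 399):
    t_ms = (i + 1) * 0.1
    print(f"t={t_ms:5.1f} ms  battery={battery[i]:6.2f} kW  "
          f"supercap={supercap[i]:6.2f} kW")
```

In this toy model the supercapacitor carries nearly the whole load in the first fraction of a millisecond and hands it off to the battery within a few time constants, which is the behavior the hybrid architecture is meant to deliver.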

For data center operators designing new facilities around AI-native architecture, this creates an opportunity to specify hybrid power protection systems from scratch rather than retrofit them. The capital justification is straightforward: protecting GPU clusters and training infrastructure worth hundreds of millions of dollars against sub-millisecond power events.

Who Gets Left Behind

The 80% pre-commitment figure from Panasonic's announcement tells a clear structural story: the operators who already have long-term battery supply relationships have secured the majority of scaled production. For those who don't, the available supply pool is significantly constrained even before Panasonic's production expansion fully comes online.

This dynamic structurally favors large, well-capitalized hyperscalers such as Amazon, Microsoft, Google, and Meta, whose procurement teams have the operational sophistication and balance sheet leverage to place multi-year forward orders well ahead of deployment. The Uptime Institute has documented that hyperscalers are already hoarding grid power "just in case," and the same behavior is extending to every component in the UPS supply stack.

The gap is most acute for everyone below that tier. Sovereign AI data center programs, regional colocation operators, enterprise organizations building private AI infrastructure, and emerging market data center developers are all competing for the remaining 20 percent of production from a market leader that is simultaneously trying to triple its capacity and quadruple its sales. This mirrors what happened in the GPU allocation market, where enterprise buyers found themselves at the back of the queue after hyperscalers had absorbed the majority of each generation's initial production for 12–18 months.

The UPS battery shortage attracts less attention than the GPU shortage because batteries lack the cultural cachet of AI accelerators in the technology press. But for a data center that cannot open its doors because its power protection system is still on order, the operational impact is identical: the facility cannot operate. Given that the grid interconnection queue is already adding 12–36 months to project timelines, stacking battery procurement delays on top can push AI infrastructure projects well past their target opening dates.

The Bigger Pattern

Panasonic's announcement is a useful case study in how AI infrastructure demand is propagating through industrial supply chains far removed from the obvious compute layers. The GPU shortage was visible and widely discussed. The HBM memory shortage surfaced publicly later but eventually became front-page infrastructure news. The power plant and grid constraint story has now fully entered the mainstream. Battery supply is following the same pattern — clearly visible to the engineers designing data center power systems, but not yet prominent in the broader discourse about AI infrastructure constraints.

What makes the Panasonic disclosure unusual is its specificity and scale. An ¥800 billion revenue target with an 80% pre-committed order book, a production tripling plan involving the retooling of automotive manufacturing lines, and a separate supercapacitor product line targeting FY2027: this is not a manufacturer hedging against a possible demand upturn. It is a major industrial company making a decisive bet that AI infrastructure will sustain extraordinary battery demand for the rest of the decade, and positioning its entire production footprint around that thesis.

The structural response — manufacturers pivoting from automotive to compute, hybrid supercapacitor architectures entering the roadmap, procurement teams shifting to multi-year forward commitments — is rational and will eventually close the gap. But resolution in capital-intensive industrial sectors takes time. In the interim, AI data center timelines will continue to slip for reasons that have nothing to do with software, algorithms, or even the chips everyone is watching. The AI infrastructure race isn't just about who can buy the most GPUs. It's about who can assemble all the pieces — power, cooling, memory, and now batteries — into a functioning facility before the competition does. Right now, one of those pieces is running years behind schedule, and most of the industry isn't talking about it.