Quantum computing headlines still obsess over who has the highest qubit count. But the real contest has shifted to a harder metric: how efficiently each company converts noisy physical qubits into reliable logical ones. In the last cycle, Microsoft’s Majorana 1 reveal, Amazon’s Ocelot launch, and IBM’s Loon milestone all made essentially the same argument: practical quantum computing will be won by error-correction economics, not by raw, transistor-style scaling of qubit counts.

The breaking-news signal: three different stacks, one shared bottleneck

Within months of each other, three hyperscale players reframed their roadmaps around one technical reality: qubits are fragile, and error correction is the cost center that determines whether useful machines are plausible at all.

Microsoft said its Majorana 1 program is designed around hardware that is potentially less error-prone, backing the announcement with a research paper in Nature and arguing useful systems are “years, not decades” away. Amazon, meanwhile, unveiled Ocelot and explicitly tied its value proposition to reducing the physical-qubit overhead of error correction. IBM’s Loon and Nighthawk disclosures made the same point from a different architecture, emphasizing error-corrected pathways and a staged timeline toward quantum advantage.

This is the important market update: the industry has stopped pretending that “more qubits” is a sufficient progress metric. The emerging headline number is logical qubits per dollar and per watt, at predictable error rates.

Why the old qubit-count narrative is failing

In classical semiconductors, transistor count roughly tracked capability for decades. Quantum hardware does not grant that convenience. Adding physical qubits can increase computational capacity, but it can also increase control complexity, calibration burden, and aggregate failure probability. If error rates do not fall fast enough, scaling can make systems worse, not better.
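A minimal sketch of why adding qubits can hurt: if each physical qubit fails independently with the same probability in a given step (a simplifying assumption, since real devices have correlated errors), the chance that at least one qubit fails grows quickly with device size. The function name and the 0.1% error rate below are illustrative, not vendor figures.

```python
def aggregate_failure_probability(per_qubit_error: float, num_qubits: int) -> float:
    """Probability that at least one of `num_qubits` qubits errs in one step.

    Assumes independent, identical per-qubit error probabilities, which is a
    simplification used only to show the trend.
    """
    if not 0.0 <= per_qubit_error <= 1.0:
        raise ValueError("per_qubit_error must be between 0 and 1")
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    return 1.0 - (1.0 - per_qubit_error) ** num_qubits


# Hypothetical 0.1% per-qubit error rate, applied to growing device sizes.
for n in (10, 100, 1000):
    print(n, round(aggregate_failure_probability(0.001, n), 4))
```

With the per-qubit error rate held fixed, the aggregate failure probability rises toward certainty as the device grows, which is the sense in which scaling without error suppression can make a system worse.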

Google’s Willow update underscored this directly: the company highlighted that scaling accompanied by error suppression is the key threshold event, not just a larger device footprint. In Google’s own benchmark framing, Willow demonstrated lower error rates across larger encoded arrays and reported a “below threshold” quantum error-correction result as the critical scientific milestone.
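The “below threshold” idea can be illustrated with a common textbook heuristic for surface-code-style error correction, in which the logical error rate scales roughly as A * (p / p_th)^((d + 1) / 2) for an odd code distance d. The sketch below is not Google's benchmark or data; the threshold, prefactor, and physical error rates are illustrative assumptions chosen only to show the direction of the effect.

```python
def logical_error_rate(physical_error: float, threshold: float,
                       distance: int, prefactor: float = 0.1) -> float:
    """Heuristic logical error rate: A * (p / p_th) ** ((d + 1) / 2).

    `threshold` and `prefactor` are illustrative assumptions, not measured
    values for any real device. The result is capped at 1.0 because the
    heuristic stops being meaningful far above threshold.
    """
    if distance < 1 or distance % 2 == 0:
        raise ValueError("distance must be a positive odd integer")
    if threshold <= 0:
        raise ValueError("threshold must be positive")
    exponent = (distance + 1) // 2  # exact integer for odd distances
    return min(1.0, prefactor * (physical_error / threshold) ** exponent)


# Below threshold (p < p_th): larger codes suppress errors.
# Above threshold (p > p_th): larger codes make things worse.
for label, p in (("below", 0.005), ("above", 0.02)):
    rates = [logical_error_rate(p, 0.01, d) for d in (3, 5, 7)]
    print(label, rates)
```

Under these assumed numbers, growing the code distance shrinks the logical error rate only when the physical error rate sits below the threshold, which is why a below-threshold result matters more than a larger device footprint.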

The deeper implication is financial. If a platform needs roughly a million physical qubits to deliver enough stable logical qubits for practical workloads, capex and operating overhead become severe. If a platform can achieve similar logical output with one-tenth the physical footprint, deployment timelines and business models shift materially.
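The footprint arithmetic can be sketched in a few lines. The overhead ratios here (1,000 physical qubits per logical qubit, and a tenfold improvement to 100) are hypothetical placeholders, not figures disclosed by any vendor; the function names are likewise made up for illustration.

```python
def logical_capacity(physical_qubits: int, physical_per_logical: int) -> int:
    """Whole logical qubits obtainable from a given physical qubit budget."""
    if physical_per_logical <= 0:
        raise ValueError("physical_per_logical must be positive")
    return physical_qubits // physical_per_logical


def physical_footprint(logical_target: int, physical_per_logical: int) -> int:
    """Physical qubits required to reach a target number of logical qubits."""
    if physical_per_logical <= 0:
        raise ValueError("physical_per_logical must be positive")
    return logical_target * physical_per_logical


BASELINE_OVERHEAD = 1_000  # hypothetical physical qubits per logical qubit
IMPROVED_OVERHEAD = 100    # hypothetical tenfold reduction in overhead

target = logical_capacity(1_000_000, BASELINE_OVERHEAD)
print("logical qubits from a million physical qubits:", target)
print("physical qubits needed at improved overhead:",
      physical_footprint(target, IMPROVED_OVERHEAD))
```

Under these assumptions the same logical output drops from a million physical qubits to a hundred thousand, which is the kind of shift that changes capex and deployment timelines.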

Microsoft, Amazon, and IBM are making different technical bets

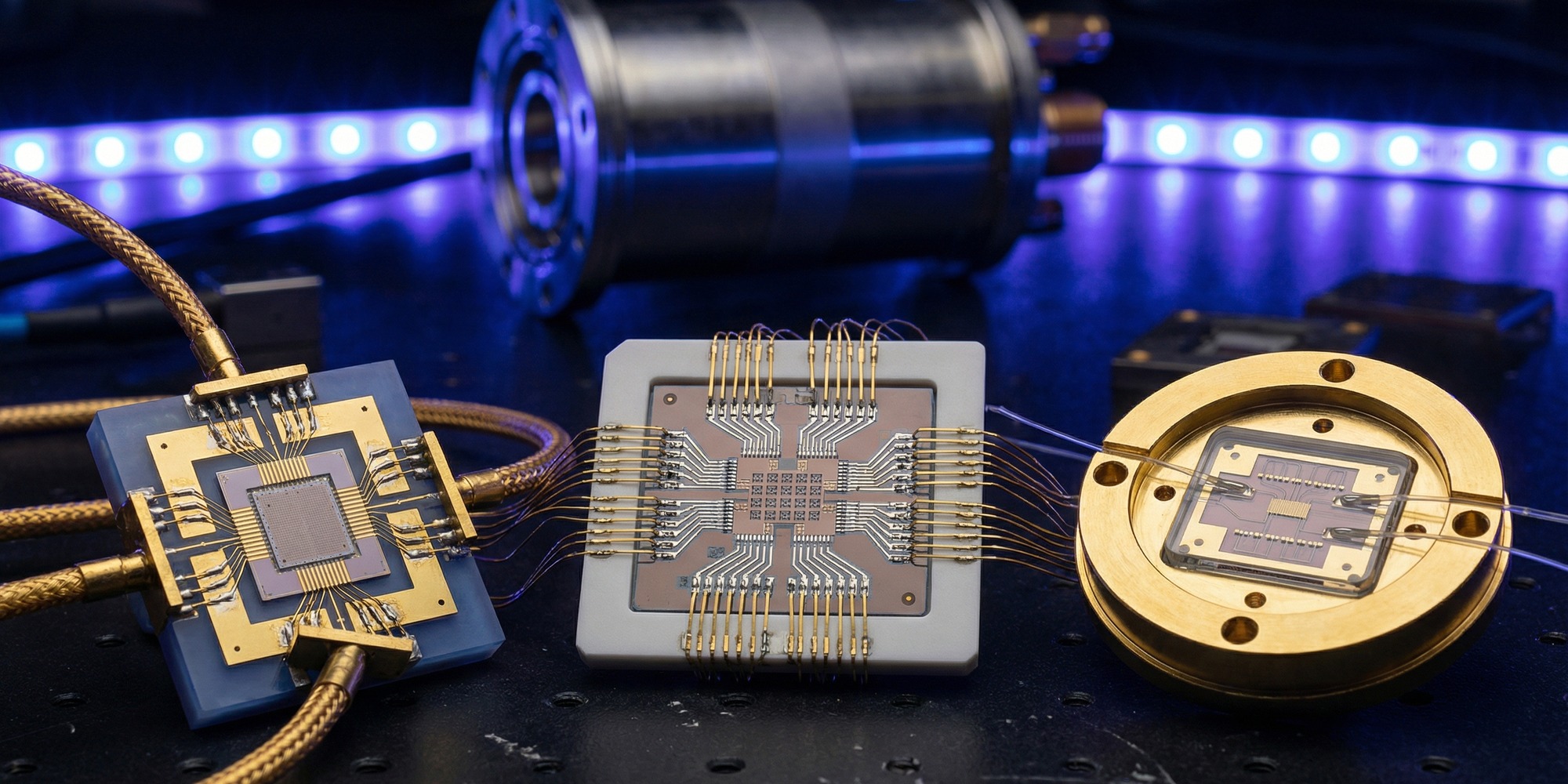

Microsoft’s thesis is that topological-style qubits can reduce fragility at the hardware layer. The company told Reuters that Majorana 1 is lower-error by design and built from indium arsenide and aluminum structures, positioning it as a long-horizon but potentially high-leverage approach after nearly two decades of work on Majorana physics.

Amazon’s thesis is a more immediate engineering tradeoff: use cat-qubit methods and manufacturing-compatible processes to improve correction efficiency. AWS told Reuters that Ocelot’s architecture could eventually cut physical-qubit requirements by a factor of five to ten, targeting useful machines at far lower qubit counts than legacy assumptions.

IBM’s thesis is systems integration: combine quantum and classical resources with progressively more correction-aware chips and runtime layers, then push toward demonstrable advantage in narrow tasks before broad commercial utility. IBM’s long-term roadmap language around quantum-centric supercomputing has consistently paired device progress with orchestration infrastructure rather than chip metrics alone.

These are different technological philosophies, but they converge on one reality: the winner is whoever industrializes error correction first, not whoever ships the most photogenic prototype.

The policy and security clock is already ticking

Even if large-scale fault-tolerant systems are not here tomorrow, cybersecurity policy has already moved. NIST finalized its first post-quantum cryptography standards in 2024 and urged organizations to begin migration immediately, warning that transition lead times are substantial. That guidance reflects a simple strategic concern: encrypted data harvested now can be decrypted later when capable machines arrive.

So there are two parallel timelines. Timeline one is commercial utility: chemistry simulation, materials design, optimization. Timeline two is cryptographic risk, where partial uncertainty about future capability is enough to justify immediate migration. The second timeline is already active, independent of whether any one vendor’s roadmap lands on schedule.
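The cryptographic timeline is often reasoned about with a rule of thumb attributed to Michele Mosca: if the years data must stay secret plus the years a migration takes exceed the years until a capable quantum computer exists, some of that data is exposed. The sketch below encodes that inequality; the function names and every input value are hypothetical and are not forecasts.

```python
def exposure_years(shelf_life_years: float, migration_years: float,
                   years_to_capable_machine: float) -> float:
    """Years of exposure under Mosca's inequality (X + Y > Z).

    X = how long the data must remain confidential,
    Y = how long the migration to post-quantum cryptography takes,
    Z = years until a cryptographically relevant quantum computer exists.
    Returns 0.0 when the inequality does not hold.
    """
    return max(0.0, shelf_life_years + migration_years - years_to_capable_machine)


def is_at_risk(shelf_life_years: float, migration_years: float,
               years_to_capable_machine: float) -> bool:
    """True when harvested ciphertext could still matter once decryptable."""
    return exposure_years(shelf_life_years, migration_years,
                          years_to_capable_machine) > 0.0


# Hypothetical inputs: 10-year secrecy need, 5-year migration, 12-year horizon.
print(exposure_years(10, 5, 12), is_at_risk(10, 5, 12))
```

The point of the inequality is that the horizon Z does not need to be known precisely: long-lived data and slow migrations can create exposure even under optimistic assumptions about when capable machines arrive.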

How to read quantum claims without getting fooled

For operators, investors, and policymakers, three filters matter more than announcement theater:

1) Error-correction overhead disclosure. Does the vendor provide clear physical-to-logical qubit assumptions, or only benchmark claims?

2) Reproducibility and external validation. Are milestone claims anchored in peer-reviewed work, community testing, or third-party replication pathways?

3) System-level roadmap realism. Is there a credible plan for control electronics, runtime software, fabrication yield, and long-duration operation, not just a lab demo?

On those criteria, 2025’s quantum cycle looked like progress, but also like a maturity test. Vendors are increasingly being forced to publish assumptions they previously left implicit.

Bottom line: quantum has entered its economic phase

The breaking-news takeaway from the last year is not that one company has “won” quantum. It is that the narrative has moved from scientific possibility to engineering economics. Error correction has become the master variable that links physics, manufacturing, software, and cost.

That is exactly what mature platform races look like right before commercial inflection points: fewer moonshot slogans, more uncomfortable math. The teams that can publish believable correction curves, maintain fabrication discipline, and expose testable system performance will set the pace for the next decade of quantum infrastructure.

In practical terms, quantum is no longer a simple bet on who can build the biggest chip. It is a bet on who can make reliability scale faster than complexity.
