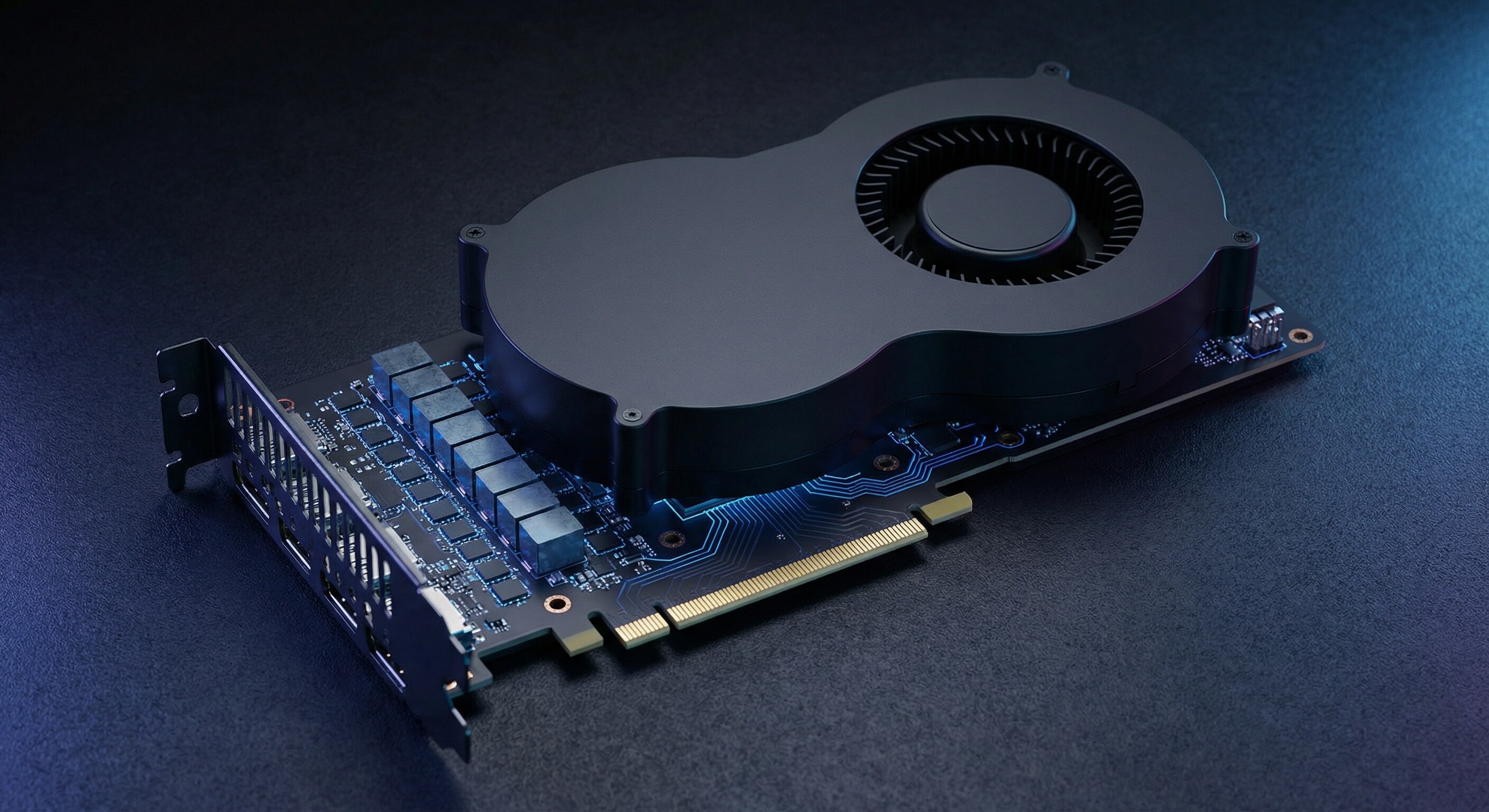

Intel doesn't do things quietly anymore. Two days ago, the company launched the Arc Pro B70 — its new flagship "Big Battlemage" professional GPU — with 32 gigabytes of VRAM, up to 32 Xe2 compute cores, and a reference price of $949. This is an AI-only card: Intel is explicitly not positioning it for gaming. The entire pitch is inference workloads, enterprise deployment, and private AI — and the company is backing the hardware with MLPerf-validated benchmark data that claims a meaningful performance-per-dollar advantage over NVIDIA. The question isn't whether Intel can build a competitive AI GPU anymore. The question is whether it can finally get customers to buy one.

The Hardware: Xe2, 32GB, and a Two-Card Lineup

The Arc Pro B70 sits at the top of Intel's new B-series professional GPU lineup. It carries up to 32 Xe2 compute cores and a full 32 gigabytes of VRAM — a memory configuration that positions it well above the consumer GPU tier and in direct conversation with professional AI inference hardware from NVIDIA and AMD.

Below it in the lineup is the Arc Pro B65, which ships with 20 Xe2 cores and a smaller memory footprint. The B65 is partner-only — meaning it won't be available as an Intel reference design but instead ships through system integrators building preconfigured inference workstations. The B70 reference card starts at $949, with partner-designed variants expected at varying price points.

The Xe2 architecture that underpins both cards represents a significant generational step for Intel's discrete GPU program. Earlier Arc generations — the Alchemist A-series — earned a reputation for driver instability and inconsistent performance in the first years after launch. Xe2 is the architecture Intel used in Lunar Lake client processors, where it performed competitively in independent benchmarks and demonstrated substantially improved software maturity. The B-series discrete cards extend that foundation to the workstation and inference market.

Intel is also emphasizing that the Arc Pro B70 is built from the ground up for AI inference rather than adapted from a gaming architecture. The card supports PCIe peer-to-peer data transfers between multiple GPUs, enabling multi-card inference configurations that can process larger models without memory bottlenecks. Intel's enterprise GPU driver stack includes ECC memory support, SR-IOV for virtualization, remote firmware update capabilities, and hardware telemetry — the kind of enterprise manageability features that matter to IT operations teams but never appeared in the gaming-first Arc A-series.

The Price Argument: $949 Versus Everyone Else

The $949 starting price is where Intel's value proposition becomes most legible. Professional AI inference hardware has been extremely expensive. NVIDIA's H100 SXM5, the dominant data center training and inference GPU, runs between $25,000 and $40,000 depending on configuration. Even workstation-class options from NVIDIA carry significant premiums: the RTX PRO 4500 Blackwell Server Edition, which we covered earlier this month, delivers impressive compute density but at a price point well above Intel's new card. NVIDIA's RTX PRO 6000, the flagship of that workstation-tier line, commands a higher price still for its 96GB VRAM configuration.

AMD's Radeon Pro W7900 offers 48GB of VRAM and competitive AI inference throughput, but it launched at around $3,999, more than four times what Intel is asking for the B70.
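To put the list prices quoted above on a common footing, the short sketch below computes dollars per gigabyte of VRAM for the two cards whose price and memory capacity both appear in this article. The `Card` class and its field names are illustrative inventions, and list price per gigabyte is only a rough proxy: it says nothing about memory bandwidth, compute throughput, or street pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    """Illustrative container; the names here are not from any vendor API."""
    name: str
    price_usd: float  # list price as quoted in this article
    vram_gb: int      # VRAM capacity as quoted in this article

    @property
    def usd_per_gb(self) -> float:
        return self.price_usd / self.vram_gb


b70 = Card("Intel Arc Pro B70", 949, 32)
w7900 = Card("AMD Radeon Pro W7900", 3999, 48)

# How many times the B70's list price the W7900 costs.
price_ratio = w7900.price_usd / b70.price_usd

for card in (b70, w7900):
    print(f"{card.name}: ${card.usd_per_gb:.2f} per GB of VRAM")
print(f"W7900 list price is {price_ratio:.2f}x the B70's")
```

On these list prices the B70 works out to just under $30 per gigabyte of VRAM, against roughly $83 for the W7900.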

The gap between "what inference hardware costs from NVIDIA" and "what most enterprises can practically budget per workstation" has been a persistent barrier to on-premise AI deployment. Organizations that cannot justify cloud spend for inference — hospitals worried about patient data, financial institutions with regulatory constraints, defense contractors operating in air-gapped environments — have been squeezed between NVIDIA's premium pricing and the latency, compliance, and cost tradeoffs of cloud inference. Intel's $949 price point directly targets that market segment.

"Until now, limited options existed for professionals who prioritized platforms capable of delivering high inference performance without compromising data privacy or incurring heavy subscription costs tied to proprietary AI models," Intel said in its announcement on the Intel Newsroom.

MLPerf Numbers: What the Benchmarks Actually Show

Intel submitted results to the MLPerf Inference v5.1 benchmark suite, the industry-standard benchmark for AI inference performance, released in September 2025. The results featured the Arc Pro B60, an earlier B-series workstation card, in a four-GPU inference workstation configuration (code-named Project Battlematrix) paired with an Intel Xeon w7-2475X processor.

The headline claims: in the Llama 3.1 8B benchmark, Intel's Arc Pro B60 configuration delivered up to 1.25x better performance per dollar than a comparable NVIDIA RTX Pro 6000 system, and up to 4x better performance per dollar than an NVIDIA L40S configuration.

Those numbers require context. The L40S is primarily a rendering and visualization card that handles inference workloads — it is not optimized for pure LLM inference the way Intel's setup was. The RTX Pro 6000 comparison is a more apples-to-apples measure, and the 1.25x performance-per-dollar advantage, while meaningful, is not a blowout. What the data does establish is that Intel's combined hardware-plus-software platform is competitive on LLM inference at the workstation tier — a claim that would have been implausible 18 months ago.
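Performance per dollar is a simple ratio, and it helps to see how a system that is slower in absolute terms can still come out ahead on it. The sketch below is a minimal illustration, not Intel's or MLCommons' methodology: the function names are made up for this example, and the throughput and price figures are hypothetical placeholders chosen only so the ratio lands near 1.25x. They are not the actual MLPerf submissions or system prices.

```python
def perf_per_dollar(tokens_per_second: float, system_price_usd: float) -> float:
    """Throughput delivered per dollar of system cost."""
    if system_price_usd <= 0:
        raise ValueError("system price must be positive")
    return tokens_per_second / system_price_usd


def relative_advantage(candidate: float, baseline: float) -> float:
    """How many times better the candidate's perf-per-dollar is than the baseline's."""
    return candidate / baseline


# HYPOTHETICAL figures for illustration only; not measured results.
system_a = perf_per_dollar(tokens_per_second=1_000, system_price_usd=10_000)
system_b = perf_per_dollar(tokens_per_second=1_600, system_price_usd=20_000)

advantage = relative_advantage(system_a, system_b)
print(f"System A delivers {advantage:.2f}x the performance per dollar of System B")
```

In this made-up case System B is 60 percent faster outright, yet System A still wins on performance per dollar because it costs half as much. That is why a "1.25x per dollar" headline says nothing by itself about which system is faster.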

Crucially, the MLPerf v5.1 results used the B60. The B70, with its higher core count and larger VRAM, is the more powerful GPU. Benchmark data for the B70 specifically has not yet been published to MLCommons at the time of writing, but Intel is positioning the B70 as its flagship offering within the same Project Battlematrix inference workstation ecosystem.

Intel also noted that Xeon 6 with P-cores achieved a 1.9x performance improvement generation-over-generation in MLPerf Inference v5.1. Intel remains the only silicon vendor submitting both server CPU and GPU results to the benchmark suite, which the company frames as evidence of its commitment to the complete inference stack rather than just the accelerator.

Project Battlematrix: Intel's Full-Stack Inference Play

The Arc Pro B-series hardware is the visible tip of a deeper infrastructure play Intel is calling Project Battlematrix. Rather than selling a GPU and expecting customers to figure out the software stack, Intel is offering preconfigured inference workstations that combine Arc Pro GPUs with Intel Xeon CPUs, containerized software environments, and a validated software deployment framework.

The Battlematrix system is built around a containerized solution for Linux environments. It supports multi-GPU scaling with PCIe peer-to-peer data transfers, enabling four-card configurations that allow models too large for a single card's VRAM to be split across multiple GPUs without going through system memory. Enterprise reliability features — ECC, SR-IOV virtualization, hardware telemetry, remote firmware updates — are built in rather than bolted on.
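A back-of-the-envelope calculation shows why splitting a model across cards matters. The sketch below estimates how many 32GB cards are needed just to hold a model's weights. It rests on stated assumptions rather than anything Intel has published: the helper names are invented, the 70B model is an illustrative size, and `usable_fraction=0.8` is a guessed allowance for KV cache, activations, and runtime overhead. Real deployments also depend on context length, batch size, and how evenly the serving framework shards layers.

```python
import math


def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bytes_per_param


def min_cards(params_billion: float, bytes_per_param: float,
              vram_gb_per_card: float = 32.0,
              usable_fraction: float = 0.8) -> int:
    """Smallest card count whose usable VRAM covers the weights.

    usable_fraction is an assumed headroom factor, not a measured value.
    """
    usable_gb = vram_gb_per_card * usable_fraction
    return math.ceil(weights_gb(params_billion, bytes_per_param) / usable_gb)


# (label, parameters in billions, bytes per parameter)
scenarios = [
    ("8B model, 16-bit weights", 8, 2.0),
    ("70B model, 16-bit weights", 70, 2.0),
    ("70B model, 8-bit weights", 70, 1.0),
]

for label, params, width in scenarios:
    n = min_cards(params, width)
    verdict = "fits" if n <= 4 else "does not fit"
    print(f"{label}: {weights_gb(params, width):.0f} GB of weights, "
          f"{n} card(s) needed, {verdict} in a four-card system")
```

Under these assumptions an 8B model sits comfortably on one card, a 70B model at 16-bit precision overflows even four cards (140GB of weights against 128GB of total VRAM), and the same model quantized to 8 bits fits on three.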

"The MLPerf v5.1 results are a powerful validation of Intel's GPU and AI strategy," said Lisa Pearce, Intel corporate vice president and general manager of Software, GPU and NPU IP Group. "Our Arc Pro B-Series GPUs with a new inference-optimized software stack let developers and enterprises develop and deploy AI powered applications with inference workstations that are powerful, simple to set up, accessibly priced, and scalable."

The full-stack approach matters because inference workload deployment has historically been an integration problem as much as a hardware problem. The friction of setting up GPU-accelerated inference environments — driver installation, framework compatibility, model serving configuration — has been a significant barrier for organizations without dedicated ML infrastructure teams. Intel's containerized approach is designed to reduce that friction, making deployment accessible to enterprise IT generalists rather than only ML engineers.

The CUDA Problem Hasn't Gone Away

Intel's hardware case is now credible. The software challenge is harder. NVIDIA's CUDA ecosystem — the development platform that most AI frameworks, libraries, and tools are natively optimized for — represents a decade of accumulated investment by developers, research labs, and enterprises. It is not a standard that competitors simply unseat by shipping better hardware.

We covered this dynamic in detail in our analysis of AMD's MI300X memory advantage and the CUDA moat: AMD had, at the time, a clear hardware edge in inference-per-watt on memory-bound workloads, yet CUDA continued to command developer and enterprise mindshare. Intel faces the same structural challenge, and it starts from a smaller installed base than AMD.

Intel's answer is OpenVINO — its inference optimization toolkit — and broader integration with the oneAPI development platform, which targets hardware portability across Intel CPUs, GPUs, and NPUs. The software stack has matured considerably and now covers all major LLM frameworks, including PyTorch, ONNX Runtime, and Hugging Face's ecosystem. Intel has been investing heavily in ensuring that popular models run without requiring custom code on Arc Pro hardware.

The argument Intel is making is not "switch your training workloads from CUDA to OpenVINO." It is narrower and more pragmatic: for inference in enterprise workstations, where the primary workloads are running already-trained models in production, the CUDA lock-in is much weaker. Most production inference deployments use optimized serving frameworks that abstract away the underlying accelerator. The enterprise doesn't need CUDA expertise — it needs a validated container that runs Llama 3 at acceptable latency. Intel's Project Battlematrix is designed to be exactly that.

The On-Premise AI Moment

The B70 launch arrives at a moment when on-premise AI inference is gaining strategic importance. Data sovereignty laws in the EU, HIPAA compliance requirements in healthcare, and classification constraints in defense and intelligence create real demand for inference hardware that never sends data to cloud providers. The Trump administration's AI executive orders have also increased enterprise attention to supply chain provenance and infrastructure sovereignty.

For these use cases, cost is not the primary objection — it's vendor lock-in, data residency, and operational control. An enterprise running sensitive inference workloads on-premise doesn't need to beat NVIDIA's cloud economics. It needs hardware that can run production LLMs reliably, at scale, within the walls of its own data center or workstation lab. Intel, with its established enterprise relationships, broad x86 server ecosystem, and now a credible AI workstation GPU, is positioned to capture that market in a way that a startup or a cloud-first company cannot.

The Xeon angle reinforces this. Enterprises running Intel Xeon servers already have existing vendor relationships, support contracts, and procurement infrastructure with Intel. Adding Arc Pro GPUs to an all-Intel workstation or edge inference node requires less organizational friction than standing up an NVIDIA or AMD system from scratch. Intel is explicitly marketing the B-series as part of an all-Intel inference platform, and for enterprise procurement teams, familiarity and single-vendor support are real decision factors.

The Competitive Picture in 2026

The AI hardware market Intel is targeting is more contested than at any point in the past three years. NVIDIA's Blackwell architecture dominates data center training and high-end inference, but its workstation-tier strategy faces competitive pressure of its own. NVIDIA's RTX PRO 6000 (96GB VRAM, Blackwell architecture) is a powerful card, but its price point leaves significant market space below it. Arm's new AGI CPU architecture is creating a new compute category for inference-heavy agentic workloads. And at the memory layer, thermal and packaging innovations are enabling higher sustained compute density across the board.

In this context, Intel's B70 launch is notable not as a definitive market shift but as a meaningful data point in a widening competitive landscape. NVIDIA's dominance in AI inference at the data center tier is secure. But the workstation and enterprise edge inference market — sub-$1,500 cards, on-premise deployments, privacy-sensitive workloads — is a market that NVIDIA has historically not optimized for, and where Intel's combination of pricing, enterprise relationships, and full-stack deployment tooling gives it a genuine opening.

Whether Intel can execute on that opening — shipping product in volume, delivering stable drivers, building out the partner ecosystem for Battlematrix systems, and giving developers a reason to target Arc Pro — will determine whether the B70 is a meaningful competitive stake or another interesting announcement that doesn't translate to market share.

What Comes Next

Intel has not published a detailed roadmap for the Arc Pro line beyond the B-series. The Xe2 architecture is expected to evolve into Xe3 ("Celestial") in future generations, and Intel has indicated continued investment in its discrete GPU program despite the organizational turbulence that characterized the Pat Gelsinger era's end and the company's subsequent restructuring.

For enterprise buyers evaluating inference workstation options right now, the B70 represents the strongest AI hardware case Intel has assembled: competitive silicon, validated benchmarks, a full-stack deployment solution, and — for the first time — a price point that makes the comparison to NVIDIA genuinely interesting rather than merely aspirational. The CUDA moat is real, the software ecosystem gap is real, and Intel has a long way to go in building developer trust after the Alchemist generation's rough start.

But a $949 card with 32GB of VRAM, from a platform with MLPerf-validated Llama inference results, belongs in the conversation. Two years ago, that sentence would have been surprising. Today, it's just a product launch. The real test begins at the workbench, and in enterprise procurement decisions over the next few quarters.