The AI industry has spent billions solving the compute problem. Now it's confronting a harder one: heat. As GPU power draw pushes past 1,000 watts per chip and data center racks routinely exceed 100 kilowatts, thermal management has become the quiet constraint on AI performance, and one company thinks the answer was sitting in your jewelry box the whole time. Akash Systems has commercialized synthetic diamond as a chip-level cooling substrate. The technology traces its roots to NASA and DARPA space programs and is now deployed across AMD and Nvidia GPU platforms, backed by a reported $300 million launch order. If it scales as promised, it could redefine how the industry thinks about heat, and who controls the performance ceiling.

Why Heat Has Become AI's Hardest Bottleneck

For most of computing history, thermal management was a second-order concern. You sized your cooling plant, added fans, maybe installed liquid loops, and moved on. The chip ran at its rated TDP. The data center maintained acceptable inlet temperatures. Everything worked.

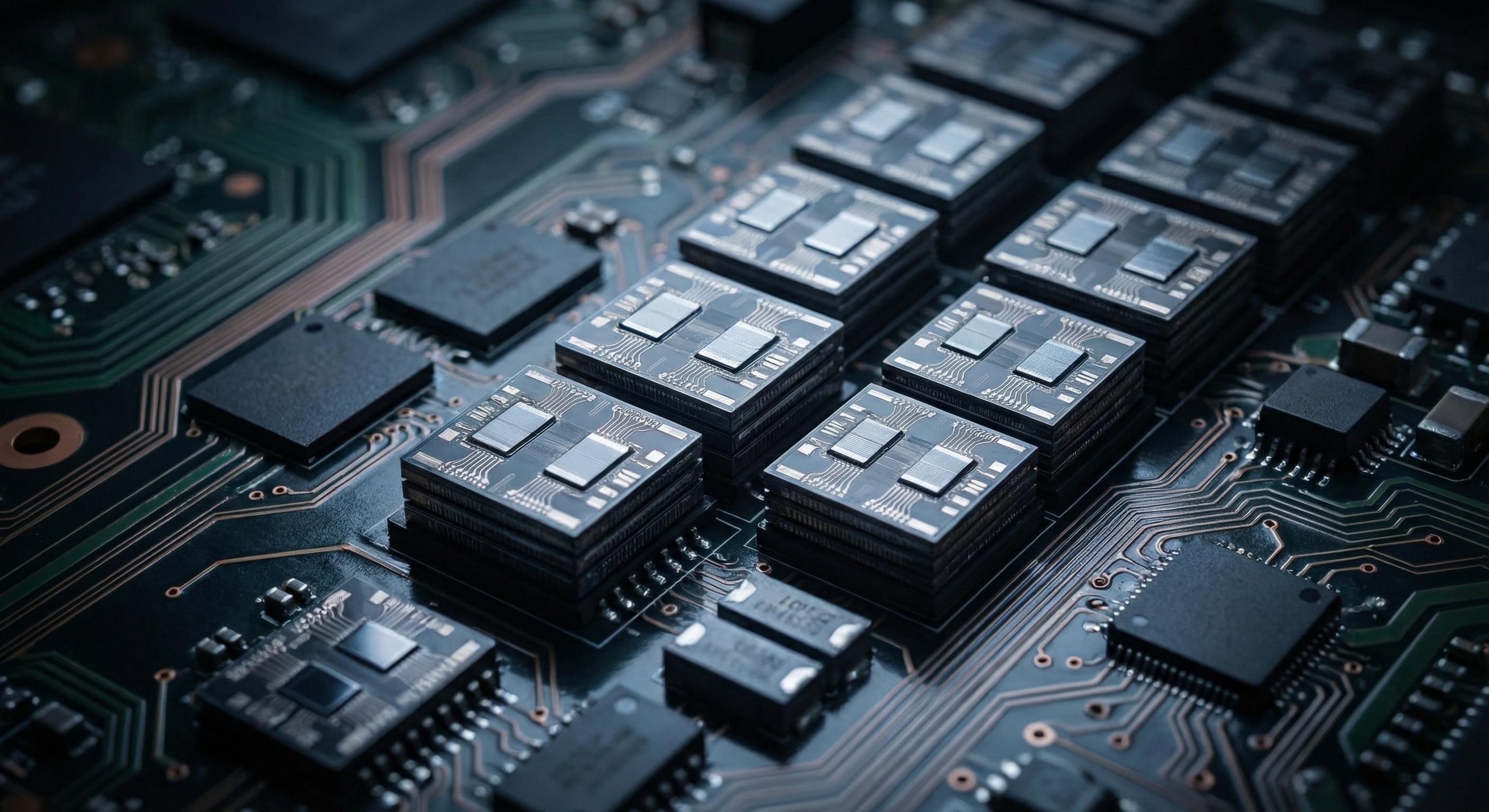

AI changed the equation fundamentally. Training large language models and running high-volume inference workloads demand sustained, high-utilization compute — not the bursty, average-load patterns that traditional data center designs optimized for. An Nvidia H100 SXM5 runs at up to 700 watts under continuous load. The Blackwell B200 pushes past that threshold. Next-generation Rubin-class GPUs are expected to cross 1,000 watts per chip.

The consequence is rack densities that would have been unimaginable five years ago. Modern AI deployments routinely exceed 100 kilowatts per rack. High-performance configurations push toward 200 kW and beyond. The laws of thermodynamics don't negotiate: that heat has to go somewhere, and getting it out fast enough to keep chips running at peak performance is now one of the hardest engineering challenges in the industry.
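As a rough illustration of how per-chip wattage compounds into rack density, the sketch below multiplies GPU count by per-GPU power and an overhead factor. Only the 700 W and 1,000 W per-chip figures come from the text; the GPU counts and the overhead factor (covering CPUs, memory, networking, fans, and power-conversion losses) are assumptions chosen for illustration, not vendor specifications.

```python
def rack_power_kw(gpu_count: int, gpu_watts: float, overhead_factor: float) -> float:
    """Estimate total rack power in kilowatts.

    overhead_factor scales raw GPU power up to cover CPUs, memory, NICs,
    fans, and power-conversion losses (an illustrative assumption).
    """
    return gpu_count * gpu_watts * overhead_factor / 1000.0


# Hypothetical air-cooled rack: 32 GPUs at the H100 SXM5's 700 W.
hopper_rack = rack_power_kw(gpu_count=32, gpu_watts=700.0, overhead_factor=1.5)

# Hypothetical rack-scale system: 72 GPUs at an assumed 1,000 W each.
dense_rack = rack_power_kw(gpu_count=72, gpu_watts=1000.0, overhead_factor=1.4)

print(f"{hopper_rack:.1f} kW vs {dense_rack:.1f} kW")  # prints: 33.6 kW vs 100.8 kW
```

Under these assumptions, packing more, hotter GPUs into one rack is enough on its own to carry a deployment past the 100 kW mark.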

The standard response has been a rush toward liquid cooling and immersion systems. Both approaches work, but they require significant capital investment, major facility retrofits, and in many cases entirely new building designs. The world's installed base of roughly 12,000 data centers cannot all be rebuilt on a three-year timeline. That leaves room for a different approach: one that removes heat before it even reaches the cooling infrastructure.

The Diamond Advantage: Five Times the Conductivity of Copper

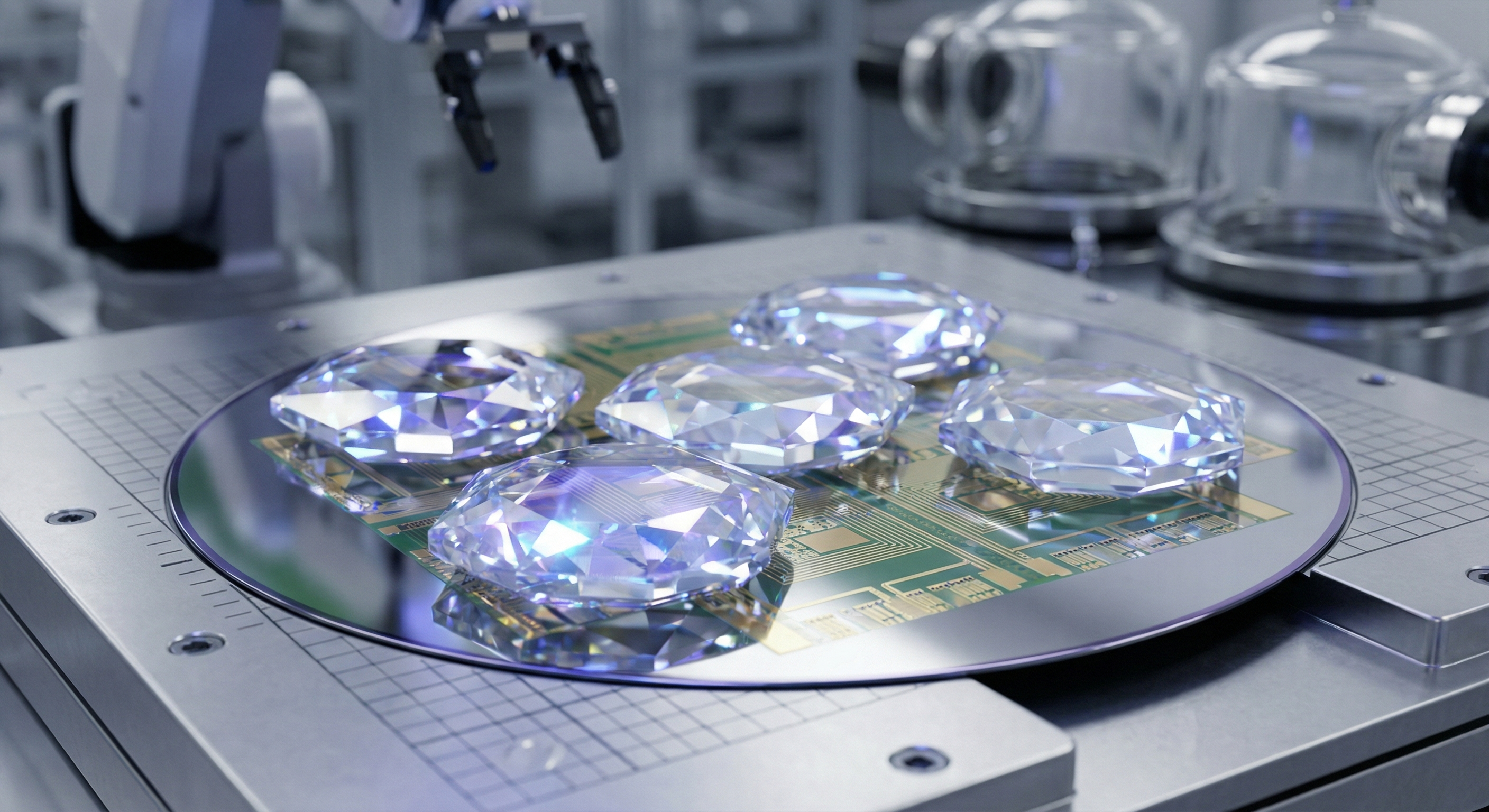

Akash Systems' answer is synthetic diamond used as a chip-level thermal interface material — positioned directly between the GPU die and its heat spreader, removing heat at the source rather than trying to manage it downstream.

The physics are compelling. Diamond has the highest thermal conductivity of any known bulk material, roughly five times that of copper, the industry-standard material for heat spreaders. That means heat generated at the transistor level moves away from the junction significantly faster, keeping die temperatures lower under sustained workloads.
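Fourier's law gives a first-order feel for why conductivity matters. For steady-state conduction through a uniform slab, the temperature drop across it is ΔT = P·t / (k·A), so a five-fold increase in conductivity k cuts that drop five-fold. The sketch below assumes a hypothetical 1 mm layer over an 800 mm² die carrying 1,000 W, with typical textbook conductivities for copper and high-grade synthetic (CVD) diamond. It ignores interface resistances and lateral hotspot spreading, so it is not a model of Akash's actual stack.

```python
def conduction_delta_t(power_w: float, thickness_m: float,
                       conductivity_w_per_mk: float, area_m2: float) -> float:
    """Temperature drop (K) across a uniform slab under 1D steady-state
    conduction, from Fourier's law: dT = P * t / (k * A)."""
    return power_w * thickness_m / (conductivity_w_per_mk * area_m2)


# Illustrative assumptions, not Akash or GPU-vendor data:
POWER_W = 1000.0     # heat flowing through the layer
THICKNESS_M = 0.001  # hypothetical 1 mm layer
AREA_M2 = 8e-4       # ~800 mm^2, roughly the size of a large GPU die
K_COPPER = 400.0     # W/(m*K), typical for copper
K_DIAMOND = 2000.0   # W/(m*K), ~5x copper, typical of high-grade CVD diamond

dt_cu = conduction_delta_t(POWER_W, THICKNESS_M, K_COPPER, AREA_M2)
dt_dia = conduction_delta_t(POWER_W, THICKNESS_M, K_DIAMOND, AREA_M2)
print(f"copper: {dt_cu:.2f} K, diamond: {dt_dia:.2f} K, ratio: {dt_cu / dt_dia:.1f}x")
```

The absolute numbers depend heavily on geometry, but the ratio does not: in this simple model the temperature drop scales inversely with k.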

In real-world deployments, Akash reports a 10°C reduction in GPU temperatures under sustained load. That number matters more than it sounds. Lower junction temperatures let chips hold their rated boost clocks longer without hitting thermal throttling limits, so performance improves. Energy efficiency improves too: cooler silicon leaks less current, and a chip that isn't throttling gets more work out of every watt. And critically, the downstream cooling infrastructure works less hard, reducing both power consumption and mechanical wear.
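A lumped thermal-resistance model helps translate a 10°C figure into engineering terms. Treating the path from junction to coolant as resistances in series, junction temperature equals inlet temperature plus power times total resistance, so a 10°C drop at fixed power corresponds to removing ΔT/P from the total. The stack values and inlet temperature below are hypothetical placeholders, not measurements of any Akash or GPU-vendor product.

```python
def junction_temp_c(inlet_c: float, power_w: float,
                    resistances_k_per_w: list[float]) -> float:
    """Steady-state junction temperature for heat flowing through a
    series stack of thermal resistances (die -> spreader -> sink -> air)."""
    return inlet_c + power_w * sum(resistances_k_per_w)


def resistance_reduction_for(delta_t_c: float, power_w: float) -> float:
    """Reduction in total thermal resistance (K/W) that yields a given
    junction-temperature drop at a fixed power."""
    return delta_t_c / power_w


# Hypothetical stack for a 700 W GPU: die-to-spreader, spreader-to-sink, sink-to-air.
stack = [0.02, 0.03, 0.05]  # K/W, illustrative values only

print(f"{junction_temp_c(35.0, 700.0, stack):.1f} C")       # prints: 105.0 C
print(f"{resistance_reduction_for(10.0, 700.0):.4f} K/W")   # prints: 0.0143 K/W
```

Under this simplified model, a 10°C reduction at 700 W corresponds to trimming roughly 0.014 K/W from the junction-to-coolant path, which is the kind of margin a better material at the die can plausibly provide.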

"Compute performance is increasingly constrained by how effectively you can manage heat," said Pamit Surana, co-founder and chief commercial officer at Akash Systems. "In many ways, we're already at a point where thermal management is as important as the compute itself."

From Space to Silicon: NASA and DARPA Origins

Akash Systems didn't develop diamond cooling for AI data centers. It developed it for space.

The company's thermal technology was originally engineered in collaboration with NASA and DARPA to handle the extreme thermal environments of spacecraft and military satellite payloads — systems that must operate without convective air cooling, in temperature swings from cryogenic cold to direct solar radiation, with zero tolerance for component failure.

Those constraints created requirements that consumer-grade thermal materials couldn't meet. Diamond, with its combination of high thermal conductivity, electrical insulation, and mechanical hardness, was uniquely suited to the job. Akash built manufacturing processes to produce synthetic diamond wafers at the precision required for semiconductor integration, and those processes, once proven in space, translated directly to the thermal challenges of terrestrial AI infrastructure.

The progression from orbital hardware to data center GPUs reflects a pattern that has appeared repeatedly in AI infrastructure: technologies developed for extreme or defense applications finding new commercial relevance as AI workloads push civilian hardware toward limits previously only encountered in aerospace and military systems.

$300 Million Launch Order and Cross-Platform Deployment

The commercial momentum behind Akash's technology is now substantial. The company has announced that its diamond cooling is commercially available on AMD's Instinct MI350 series GPUs, backed by a reported $300 million launch order — a figure that reflects hyperscale-level commitment, not boutique experimentation.

Akash also says its technology has already been deployed on Nvidia H200 platforms, the 141-gigabyte HBM3e variant of the Hopper architecture that commands a premium in the market. Support for Blackwell-generation systems, Nvidia's newer architecture now entering widespread deployment, is planned for a future rollout.

The cross-platform coverage is strategically significant. Akash is not betting on a single chip vendor winning the AI accelerator market. It is positioning chip-level diamond cooling as a layer of infrastructure that sits beneath the GPU competition — required regardless of whether a given hyperscaler's rack runs AMD or Nvidia silicon.

That positioning mirrors how high-performance cooling companies have historically approached the data center market: as essential enablers rather than partners aligned with any single compute vendor. The $300 million launch order suggests at least one major buyer agrees.

Extending Air-Cooled Infrastructure Without a Rebuild

Perhaps the most commercially important aspect of Akash's technology is what it enables for the installed base of air-cooled data centers — facilities that cannot easily or affordably transition to liquid cooling on the timeline that AI hardware upgrades demand.

The AI hardware refresh cycle is now effectively annual. Nvidia's GPU roadmap — Hopper to Blackwell to Rubin — moves at a pace that procurement teams are struggling to keep up with. Each generation brings higher power envelopes. An air-cooled facility designed for 20 kW racks may face a serious engineering question when the next GPU generation targets 30, 40, or 60 kW.

Chip-level thermal improvements offer a partial answer: by reducing the heat that reaches facility-level cooling systems, diamond cooling can allow operators to deploy next-generation GPUs in existing infrastructure without requiring parallel investment in liquid cooling retrofits. "Not every facility can transition to liquid cooling easily," Surana told Data Center Knowledge. "Our technology allows operators to upgrade to next-generation GPUs while staying within existing infrastructure constraints."

This isn't a complete solution for the highest-density deployments — a 200 kW AI rack will still need liquid cooling regardless of chip-level thermal improvements. But for the majority of the world's data centers operating at moderate AI densities, it represents a meaningful path to deploying AI workloads without stranded capital investment in cooling infrastructure.

The Broader Thermal Management Inflection

Akash is not operating in isolation. The thermal management industry is experiencing its own AI-driven transformation, with a wave of new approaches competing to define how heat gets managed in next-generation AI infrastructure.

At the facility level, hot water cooling and rear-door heat exchangers have moved from edge cases to mainstream deployments. Immersion cooling, which submerges servers in dielectric fluid, is increasingly common in hyperscale and HPC environments. Direct liquid cooling, where coolant flows through cold plates attached directly to processors, has become standard in new AI data center construction, following the lead of Nvidia's rack-scale reference architectures.

Vertiv, the data center infrastructure specialist, has been acquiring thermal management companies to build out its AI cooling portfolio. The message from the supply chain is consistent: thermal management is no longer a commodity utility. It is a competitive differentiator, and the companies that solve it best will have an outsized role in determining where and how AI compute gets deployed.

Akash's contribution to this stack is specifically at the materials science layer — the point where heat originates. If liquid cooling is the data center's drain, diamond cooling is the sink installed under the tap. The two are complementary rather than competing, and the combination of chip-level and facility-level thermal innovation creates more headroom for AI hardware to operate at its rated performance envelope.

The Inference Shift and What It Means for Thermal Demands

The thermal problem is also evolving in a direction that favors chip-level solutions. The AI industry is in the midst of a transition from training-dominated workloads to inference-dominated ones. Training is computationally intense but episodic: a model gets trained once (or periodically fine-tuned), and the compute cluster can be redeployed between runs. Inference is different. It runs continuously, serving every user query in real time, with little opportunity for thermal recovery.

For chip-level cooling, the shift to inference is particularly significant. Training runs are long but finite; production inference keeps accelerators busy around the clock, at sustained utilization rates that can approach 100% for extended periods. "Instead of optimizing for peak bursts, you need systems that can run continuously at high utilization," Surana said. "That's where consistent thermal performance becomes critical."

The implication is that as AI moves from research to production deployment — from a few thousand training clusters to millions of inference servers — the thermal management challenge scales dramatically in both absolute terms and duration. Diamond's advantage is that it improves steady-state thermal performance, which is precisely what inference-heavy workloads need.

Manufacturability: The Remaining Question

The obvious concern with any diamond-based technology is cost and scale. Akash is not deploying gemstone-grade diamond; the company uses synthetic diamond engineered specifically for thermal applications, optimized for manufacturability rather than optical properties. Because this material is grown rather than mined, its production economics can improve with scale in ways natural diamond supply cannot.

The $300 million launch order on AMD's MI350 suggests the cost curve has already crossed the threshold for commercial viability in high-end AI hardware. At hyperscale volumes, even modest per-chip premiums become significant aggregate costs, and buyers at that scale conduct thorough economic analysis before committing. The order size implies the math works — at least for the highest-performance tier of the market.

Whether diamond cooling can move down-market to mid-range AI inference hardware — the kind deployed in enterprise edge servers and regional inference clusters — depends on how quickly manufacturing costs fall as production volumes increase. Akash has not disclosed its per-chip pricing, but the trajectory of synthetic diamond manufacturing broadly suggests that the cost premium narrows significantly at scale.

The New Performance Variable

For years, AI hardware benchmarks focused almost exclusively on FLOPs per second, memory bandwidth, and interconnect speed. Thermal performance — measured in degrees Celsius of sustained junction temperature, or in the gap between rated TDP and real-world throttling thresholds — rarely made the spec sheet.

That is changing. As AI infrastructure vendors compete to deliver more compute in less space using less power, the ability to sustain peak performance under continuous load has become a primary competitive variable. A chip rated at 1,000 teraFLOPS that throttles to 700 teraFLOPS after 30 minutes of continuous inference is meaningfully less valuable than one that holds 950 teraFLOPS indefinitely. The difference is often thermal.
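The arithmetic behind that comparison is a time-weighted average. The sketch below uses the hypothetical figures from the paragraph above and assumes throttling is a single step down that persists for the rest of a 24-hour window; real throttling behavior oscillates and depends on the workload and cooling.

```python
def average_tflops(peak: float, throttled: float,
                   minutes_at_peak: float, window_minutes: float) -> float:
    """Time-weighted average throughput over a window, assuming the chip
    runs at `peak` until it throttles, then holds `throttled` for the rest."""
    at_peak = min(minutes_at_peak, window_minutes)
    return (peak * at_peak + throttled * (window_minutes - at_peak)) / window_minutes


DAY = 24 * 60  # a 24-hour continuous inference window, in minutes

# Chip A: rated 1,000 TFLOPS, throttles to 700 after 30 minutes.
chip_a = average_tflops(1000.0, 700.0, 30.0, DAY)
# Chip B: holds 950 TFLOPS for the whole window (never throttles).
chip_b = average_tflops(950.0, 950.0, DAY, DAY)

print(f"A: {chip_a:.2f} TFLOPS, B: {chip_b:.2f} TFLOPS, B/A = {chip_b / chip_a:.2f}")
# prints: A: 706.25 TFLOPS, B: 950.00 TFLOPS, B/A = 1.35
```

Over a full day the throttling chip averages about 706 TFLOPS, so the steadier chip delivers roughly 35% more sustained work despite its lower peak rating.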

Akash Systems is betting that the AI industry has reached the point where materials science at the chip level is as important as transistor density or memory architecture. The reported $300 million launch order suggests the market agrees. As racks push toward 200 kW, 300 kW, and beyond, and as inference workloads demand sustained performance from millions of deployed chips, the gap between what silicon can theoretically deliver and what facilities can thermally sustain will define the next frontier of AI hardware competition. Diamond, it turns out, may be more than just the hardest material on Earth. It may be the one the AI industry was waiting for.