Twelve months ago, running a trillion-parameter AI model required a data center contract and a tolerance for cloud egress fees. Today, NVIDIA wants to put that capability on your desk. The company's updated DGX Station, powered by the GB300 Grace Blackwell Ultra Desktop Superchip, delivers 748 gigabytes of coherent memory, 20 petaFLOPS of AI compute, and the ability to run models at the trillion-parameter scale — all in a deskside tower available to order now and shipping in the coming months. It is, without qualification, the most capable on-premises AI machine NVIDIA has ever sold. The question worth asking: has the math finally shifted in favor of owning your compute rather than renting it?

The GB300 Grace Blackwell Ultra: One Chip to Rule Them All

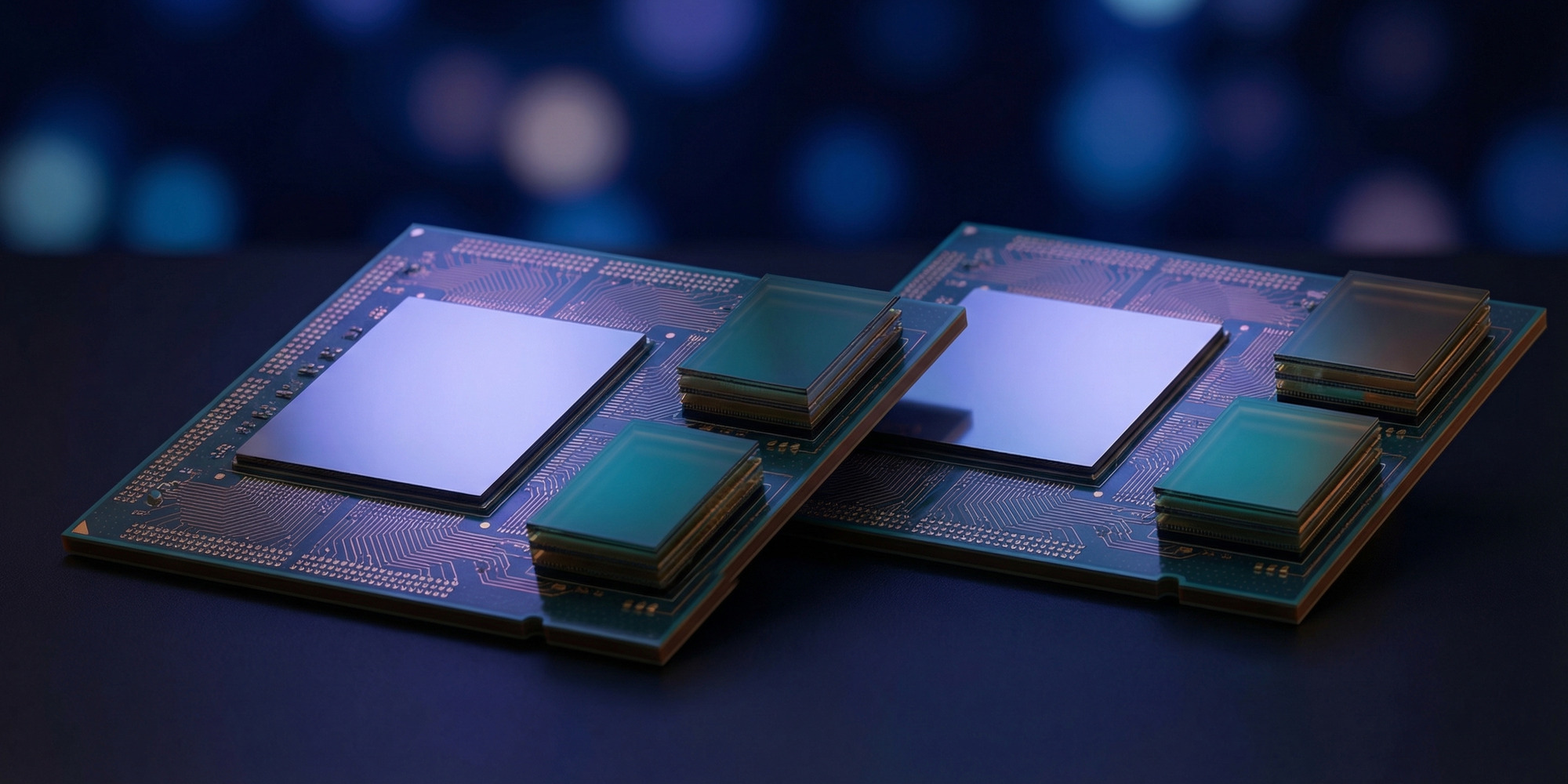

At the heart of the new DGX Station is the NVIDIA GB300 Grace Blackwell Ultra Desktop Superchip — a single package that fuses a high-performance NVIDIA Grace CPU with a Blackwell Ultra GPU via the NVIDIA NVLink-C2C chip-to-chip interconnect. Unlike traditional server configurations where CPU and GPU communicate over PCIe — a bottleneck that has long frustrated AI workloads — NVLink-C2C enables direct, high-bandwidth, low-latency data transfers between the two processors as if they shared a single memory space. In practice, this means the CPU can feed the GPU compute units with none of the transfer overhead that haunts conventional workstation designs.

The Blackwell Ultra GPU component of the superchip deploys fourth-generation Tensor Cores supporting NVFP4 (4-bit floating point) operations. FP4 inference dramatically increases the number of operations per second compared to FP16 or BF16, and critically, it allows the on-chip memory to accommodate far larger model slices before data must be staged or swapped. Combined with 748 GB of coherent unified memory — a pool visible and accessible to both the CPU and GPU simultaneously — the result is a machine that can load and run models at scales previously impossible outside a server rack.

NVIDIA confirms the DGX Station supports AI models up to 1 trillion parameters fully resident in memory, eliminating the need for model sharding across multiple devices for most deployment scenarios. That capability was reserved for HGX H100 and H200 server clusters less than 18 months ago.

Why 748 Gigabytes of Coherent Memory Is the Real Story

It is tempting to fixate on the 20 petaFLOPS headline — and it is legitimately staggering for a deskside system — but the memory architecture may matter more in the long run.

The AI industry has spent three years navigating a persistent memory wall. State-of-the-art models like GPT-4-class architectures require enormous amounts of fast, low-latency memory just to hold their weights, let alone run inference efficiently. The standard workaround — distributing model weights across multiple GPUs connected via NVLink or InfiniBand — works at scale but introduces latency, bandwidth bottlenecks, and significant software complexity. It also raises the floor cost of deployment: even renting enough cloud GPU capacity to run a large frontier model continuously costs tens of thousands of dollars per month.

The DGX Station's coherent memory pool eliminates the intra-node memory fragmentation problem entirely. A 405-billion-parameter model that would require four H100 80GB GPUs in a cloud deployment can run comfortably in the DGX Station's unified 748 GB pool. The model sees a single, contiguous memory space. Batch sizes increase. Inference latency drops. And for fine-tuning or continued pre-training workflows, the working set remains local, removing the need to stream data from object storage over a network link that always costs more than expected on cloud invoices.

NVIDIA also includes the ConnectX-8 SuperNIC, supporting up to 800 gigabits per second of network bandwidth and enabling two DGX Stations to be linked together — effectively doubling the available memory and compute for users running the most demanding multi-node workloads. A two-node DGX Station cluster would present 1.5 petabytes of coherent memory and 40 petaFLOPS of AI compute at deskside scale. That configuration does not yet exist in any competitor's portfolio.

On-Premises vs. Cloud: The Numbers Are Shifting

The DGX Station is not a cheap machine. NVIDIA has not announced official pricing for the GB300 configuration, but the H100-based DGX Station carried a list price of approximately $149,000, and the GB300 version with Blackwell Ultra will certainly command more. Partners including Dell, HP, and Lenovo are expected to offer their own configurations at varying price points once systems begin shipping.

At first glance, $150,000–200,000 for a single workstation sounds prohibitive. But the cloud cost comparison deserves scrutiny. Running a moderately large language model continuously on H100 instances from AWS or Azure costs roughly $10–15 per GPU-hour at on-demand pricing — and running a 70B-parameter model at production throughput typically requires 4–8 H100s, putting baseline costs at $40–120 per hour. A research team running continuous experiments across an 8-hour workday, five days a week, can easily accumulate $100,000 in cloud AI compute costs within 12–18 months — and that math does not account for egress fees, storage costs, or the soft cost of managing cloud infrastructure.

Against that benchmark, a three-year amortized DGX Station starts to look compelling — particularly for organizations with data sovereignty requirements, regulatory compliance obligations, or simply the preference to avoid perpetual subscription exposure. The machine is also preconfigured with an optimized Ubuntu environment, CUDA-X libraries, and NVIDIA AI Developer Tools, meaning it ships ready to run without the infrastructure engineering overhead that typically accompanies a self-managed GPU cluster build-out.

NemoClaw: The Security and Agentic AI Play

Perhaps the most strategically interesting new feature associated with the DGX Station launch is NemoClaw — NVIDIA's integration with the OpenClaw open-source agent platform that transforms the DGX Station into a secure, always-on AI agent host.

NVIDIA describes NemoClaw as adding "security and privacy to run secure, always-on AI assistants on NVIDIA RTX PCs, DGX Station, and DGX Spark." The integration allows enterprises to deploy persistent AI agents that operate entirely within their own hardware perimeter, with no training data, prompts, or inference outputs leaving the device. For organizations handling sensitive intellectual property, regulated clinical data, or classified information — categories that include a significant fraction of the enterprise AI market — the ability to run frontier-capable AI agents without exposing data to external APIs or cloud inference endpoints is a material capability advance.

Jensen Huang acknowledged the opportunity at CES 2026 when discussing the NVIDIA AI roadmap, noting that always-on, privacy-preserving agentic AI represents the next major deployment wave. NemoClaw positions the DGX Station not merely as a training accelerator or inference node, but as an enterprise AI appliance — a category that enterprise security teams and compliance officers can actually approve for production deployment.

Target Market and the Partner Ecosystem

NVIDIA's DGX Station has historically sold into a narrow but high-value market: AI research groups at universities, well-funded enterprise data science teams, government laboratories, and financial institutions running quantitative AI workflows. The GB300 configuration expands that addressable market meaningfully.

Three new deployment scenarios become viable at 748 GB coherent memory scale:

Medical and life sciences: Genomic foundation models, protein structure prediction workflows, and clinical decision support systems often require large context windows and high-memory inference capacity. Running these workloads on sovereign, on-premises hardware addresses the regulatory complexity of handling patient data in public cloud environments.

Legal and financial services: Law firms and financial institutions building retrieval-augmented generation systems over proprietary document stores have strong incentives to keep both the model weights and the document corpus entirely in-house. A trillion-parameter model that can process lengthy contracts or financial filings without data leaving the firm's network is a genuinely new capability at deskside scale.

Government and defense: Classified environments that cannot use commercial cloud infrastructure have historically been limited to whatever GPU hardware could be approved, procured, and installed. The DGX Station's compact, self-contained design simplifies the logistics of deploying frontier AI capability in air-gapped or restricted-access facilities.

NVIDIA's partner network — including Dell Technologies, HP, Lenovo, and Supermicro — will offer customized DGX Station configurations, potentially including ruggedized variants and custom cooling options for non-standard deployment environments.

The Competitive Landscape: Who Else Is in This Race?

The honest answer is that no direct competitor currently offers a deskside AI system at the GB300 DGX Station's memory and compute tier. But the competitive pressure from adjacent directions is intensifying.

Apple's recently launched M5 Ultra Mac Pro offers up to 192 GB of unified memory — impressive at the consumer and prosumer level, and genuinely useful for running 70B-class models locally, but still less than a quarter of the DGX Station's memory capacity. The M5 Ultra is priced aggressively (by Apple workstation standards) in the $10,000–16,000 range and trades raw AI throughput for energy efficiency and a polished developer experience. These machines serve different markets and different workloads.

AMD's Instinct-based workstation configurations, including systems built around the MI300X with its 192 GB of HBM3 memory, come closer to the DGX Station territory in terms of GPU compute throughput, but still fall short of the coherent unified memory pool that the Grace CPU + Blackwell Ultra NVLink-C2C architecture delivers. AMD's MI300X advantage in memory bandwidth is real, but the software ecosystem gap relative to CUDA remains the decisive factor for most enterprise buyers who do not have the engineering capacity to optimize for ROCm.

The most credible near-term challenger to NVIDIA's deskside AI dominance may be Arm's new AGI CPU platform, which announced aggressive specifications and enterprise partnerships earlier this month. But Arm is targeting hyperscale data center deployment first; deskside configurations for enterprise buyers remain a future roadmap item rather than a current shipping product.

The Road to Vera Rubin: Upgrade Cycle Considerations

Enterprise buyers considering the DGX Station investment will inevitably ask about upgrade cycles. NVIDIA's publicly disclosed roadmap shows the Vera Rubin platform — successor to Blackwell Ultra — delivering 50 petaFLOPS per GPU with NVLink 6 fabric and fundamentally different die architecture. At GTC 2026, Jensen Huang confirmed Vera Rubin production is underway, with data center deployments beginning later this year.

This creates the standard NVIDIA upgrade cycle dilemma. The GB300 DGX Station ships into what may be a 12–18 month window before Vera Rubin-based deskside systems arrive. For organizations with current workloads that genuinely saturate the GB300's capabilities today, the timing is straightforward: the machine solves real problems now. For buyers who are evaluating the platform speculatively — buying ahead of workloads that will grow into it — patience may be rewarded.

NVIDIA's historical DGX Station upgrade cadence has been roughly two years, aligning with major GPU architecture transitions. The GB300 station represents the Blackwell Ultra generation; the Vera Rubin deskside successor, likely designated the DGX Station VR or a similar naming convention, should arrive by late 2027. Organizations buying now should factor that into their depreciation models.

The Bottom Line

The NVIDIA DGX Station with the GB300 Grace Blackwell Ultra is the most significant on-premises AI compute announcement since the original DGX-1 launched in 2016 and set the template for AI workstation hardware. Where the DGX-1 put GPU compute in reach of research teams that previously depended on supercomputer allocations, the GB300 DGX Station puts trillion-parameter scale AI in reach of enterprise teams that currently depend on cloud GPU contracts.

The $150,000-plus price tag will remain prohibitive for most individual deployments. But for organizations running sustained AI workloads that justify the amortization, that face data sovereignty constraints that complicate cloud deployment, or that want to operate persistent agentic AI systems without external API exposure, the GB300 DGX Station delivers a genuinely new class of capability at a new price point. The cloud is not going away. But for serious AI development, the case for owning your compute has never been stronger.